How to Get Best Performance From the Oracle ZFS Storage Appliance

Best Practices for Oracle ZFS Storage Appliance and VMware vSphere 5.x: Part 1

Published July 2013, by Anderson Souza

How to configure disk storage, clustering, CPU and L1/L2 caching size, networking, and filesystems for optimal performance on the Oracle ZFS Storage Appliance.

This article is Part 1 of a seven-part series that provides best practices and recommendations for configuring VMware vSphere 5.x with Oracle ZFS Storage Appliance to reach optimal I/O performance and throughput. The best practices and recommendations highlight configuration and tuning options for Fibre Channel, NFS, and iSCSI protocols.

The series also includes recommendations for the correct design of network infrastructure for VMware cluster and multi-pool configurations, as well as the recommended data layout for virtual machines. In addition, the series demonstrates the use of VMware linked clone technology with Oracle ZFS Storage Appliance.

All the articles in this series can be found here:

Note: For a white paper on this topic, see the Sun NAS Storage Documentation page.

The Oracle ZFS Storage Appliance product line combines industry-leading Oracle integration, management simplicity, and performance with an innovative storage architecture and unparalleled ease of deployment and use. For more information, see the Oracle ZFS Storage Appliance Website and the resources listed in the "See Also" section at the end of this article.

Note: References to Sun ZFS Storage Appliance, Sun ZFS Storage 7000, and ZFS Storage Appliance all refer to the same family of Oracle ZFS Storage Appliances.

Overview of Example System Components

Table 1, Table 2, and Table 3 describe the hardware configuration, operating systems, and software releases used in the reference architecture described in this series of articles.

Table 1. Hardware Used in Reference Architecture

| Equipment | Quantity | Configuration |
|---|---|---|
| Storage | 1 cluster (2 controllers) | Oracle ZFS Storage 7420 cluster; 256GB DRAM per controller; 2 x 512GB read cache SSD per controller; 2 x 20 2TB SAS-2 disk trays; 2 x dual-port 10GbE NIC; 2 x dual-port 8Gbps FC HBA; 2 x 17GB log device |
| Network | 2 | 10GbE network switch |
| Server | 2 | Sun Server X3-2 from Oracle; 256GB DRAM; 2 internal HDDs; 1 x dual-port 10GbE NIC; 1 x dual-port 8Gbps FC HBA |

Table 2. Virtual Machine Components Used in Reference Architecture

| Operating System | Quantity | Configuration |
|---|---|---|
| Microsoft Windows 2008 R2 (x64) | 1 | Microsoft Exchange Server |
| Oracle Linux 6.2 | 1 | ORION: Oracle I/O Numbers Calibration Tool |

Table 3. Software Used in the Reference Architecture

| Software | Version |
|---|---|
| Oracle ZFS Storage Appliance's Appliance Kit (AK) software | 2011.04.24.4.0,1-1.21 |
| Microsoft Exchange Server Jetstress verification tool | 2010 (x64) |
| ORION: Oracle I/O Numbers Calibration Tool | 11.1.0.7.0 |
| VMware vCenter Server | 5.1.0 (Build 880146) |

Controllers, Software Release, and Disk Pools

Virtual desktop infrastructures produce highly random I/O patterns and need high storage performance as well as availability, low latency, and fast response time. To meet these demands, use a mirrored data profile. This profile keeps duplicate copies of the data and provides fast, reliable storage by dividing access and redundancy, usually between two sets of disks. In combination with write-optimized SSD log devices and the Sun ZFS Storage Appliance architecture, this profile can deliver a large number of input/output operations per second (IOPS) to meet the demands of critical virtual desktop environments.

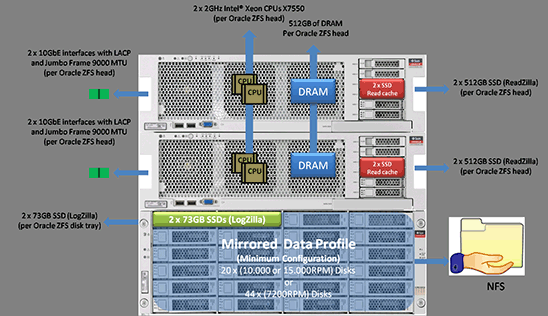

The recommended minimum disk storage configuration for VMware vSphere 5.x includes:

- A mirrored disk pool of at least 20 x 300GB, 600GB, or 900GB performance disks (10000 or 15000 RPM) or 44 x 3TB SAS-2 capacity disks (7200 RPM), with at least two 73GB SSD devices for LogZilla working with a striped log profile

- A striped cache of at least 2 x 512GB for L2 cache (L2ARC)
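As a rough aid for sizing the mirrored pool described above, the following Python sketch estimates usable capacity. It assumes a two-way mirror, decimal terabytes, and no allowance for filesystem overhead; the function name and the `spares` parameter are illustrative and do not come from the appliance software.

```python
def mirrored_pool_capacity_tb(disk_count: int, disk_size_tb: float, spares: int = 0) -> float:
    """Approximate usable capacity (TB) of a two-way mirrored pool.

    Assumption: every data disk is paired with exactly one mirror partner,
    so only half of the non-spare disks contribute capacity.
    """
    data_disks = disk_count - spares
    if data_disks < 2 or data_disks % 2 != 0:
        raise ValueError("a two-way mirror needs an even number of data disks (at least 2)")
    return (data_disks // 2) * disk_size_tb


if __name__ == "__main__":
    # 44 x 3TB capacity disks, as in the example shown in the figures
    print(mirrored_pool_capacity_tb(44, 3.0))
    # Same shelf with two disks held back as spares (hypothetical layout)
    print(mirrored_pool_capacity_tb(44, 3.0, spares=2))
```

The figure the appliance reports will be lower than this estimate, because the sketch ignores metadata and reserved space.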

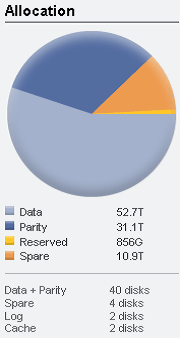

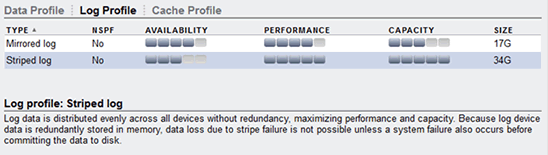

Note: The example shown in Figure 1 through Figure 3 demonstrates 44 x 3TB SAS-2 7200 RPM disks.

Figure 1. Oracle ZFS Storage Appliance—disk pools configuration

Figure 3. Oracle ZFS Storage Appliance—cache profile configuration

Note: For high availability and proper load balancing for a virtual desktop infrastructure, use an Oracle ZFS Storage Appliance model that supports clustering. Configure the cluster in active/active mode and use Oracle ZFS Storage Appliance software release 2011.1.4.2.x or greater.

If you are working with DE2-24C/P drive enclosure models, ensure that the system is working with Oracle ZFS Storage Appliance software release 2011.1.5.0.x or greater. Please refer to the following link for additional information:

https://wikis.oracle.com/display/FishWorks/ak-2011.04.24.5.0+Release+Notes

Also, additional information on an Oracle ZFS Storage Appliance cluster configuration can be found in the Sun ZFS Storage 7000 System Administration Guide at:

http://docs.oracle.com/cd/E26765_01/html/E26397/index.html

CPU, L1 Cache, and L2 Cache

The combination and sizing of CPU, L1 cache (ARC), and L2 cache (L2ARC) are critical to meeting the demands of compression and deduplication operations, as well as overall performance, in large deployments of a virtual desktop infrastructure. The minimum recommended configuration is:

- At least two 2GHz Intel Xeon X7550 CPUs per Oracle ZFS Storage Appliance head

- At least 512GB of DRAM (L1 cache) per head

- At least two 512GB SSDs for ReadZilla cache (L2 cache) per head
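The minimums listed above can be written down as data and compared against a planned controller configuration. This is a minimal sketch: the `HeadSpec` class, its field names, and the sample values are hypothetical and are not read from an appliance.

```python
from dataclasses import dataclass, fields
from typing import List


@dataclass
class HeadSpec:
    """Per-head resources; field names are illustrative only."""
    cpu_count: int
    cpu_ghz: float
    dram_gb: int
    read_cache_ssd_count: int
    read_cache_ssd_gb: int


# Minimum recommended configuration per head, taken from the list above.
MINIMUM = HeadSpec(cpu_count=2, cpu_ghz=2.0, dram_gb=512,
                   read_cache_ssd_count=2, read_cache_ssd_gb=512)


def shortfalls(head: HeadSpec, minimum: HeadSpec = MINIMUM) -> List[str]:
    """Return one message per resource that falls below the minimum."""
    problems = []
    for f in fields(minimum):
        have, need = getattr(head, f.name), getattr(minimum, f.name)
        if have < need:
            problems.append(f"{f.name}: have {have}, need at least {need}")
    return problems


if __name__ == "__main__":
    planned = HeadSpec(cpu_count=2, cpu_ghz=2.0, dram_gb=256,
                       read_cache_ssd_count=2, read_cache_ssd_gb=512)
    for line in shortfalls(planned):
        print(line)
```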

Network Settings

Do the following to ensure that the network configuration where the NFS and iSCSI traffic will run has been designed to achieve high availability with no single point of failure:

- Isolate the storage traffic from other networking traffic. You can configure this utilizing VLAN, network segmentation, or dedicated switches for NFS and iSCSI traffic only.

- On the Oracle ZFS Storage Appliance, configure at least two physical 10GbE (dual-port) NICs per head, bundled into a single channel using the IEEE 802.3ad Link Aggregation Control Protocol (LACP) with jumbo frames enabled (a maximum transmission unit, or MTU, of 9000 bytes). If you are working with a cluster configuration, configure at least two 10GbE (dual-port) NICs per head, and also use an IP network multipathing (IPMP) configuration in combination with LACP.

- With IPMP configuration you will achieve network high availability, and with link aggregation you will obtain better network performance. These two technologies complement each other and can be deployed together to provide benefits for network performance and availability for virtual desktop environments.

- To select the outbound port based on source and destination IP addresses, use LACP policy L3.

- For switch communication mode, use the LACP active mode, which will send and receive LACP messages to negotiate connections and monitor the link status.

- Use an LACP short timer interval between LACP messages, as seen in the configuration in Figure 4.

Note: Some network switch vendors do not support LACP. In this situation, set the LACP mode to "Off." Please refer to your switch vendor documentation for more information.

Figure 4. LACP, jumbo frame, and MTU configurations on Oracle ZFS Storage Appliance
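To illustrate what an L3 aggregation policy implies for load balancing, the sketch below picks an outbound port from the source and destination IP addresses. The XOR-and-modulo hash is an assumption made for illustration; the hash the appliance or a given switch actually uses is implementation-specific. The addresses and the `pick_port_l3` name are hypothetical.

```python
import ipaddress


def pick_port_l3(src_ip: str, dst_ip: str, port_count: int) -> int:
    """Choose an aggregation member port from the IP address pair.

    Illustrative hash only: XOR the two addresses as integers, then
    reduce modulo the number of ports in the aggregation.
    """
    if port_count < 1:
        raise ValueError("port_count must be at least 1")
    src = int(ipaddress.ip_address(src_ip))
    dst = int(ipaddress.ip_address(dst_ip))
    return (src ^ dst) % port_count


if __name__ == "__main__":
    storage = "192.168.10.50"  # hypothetical appliance data address
    for host in ("192.168.10.11", "192.168.10.12"):
        # Traffic from the appliance to each ESXi host
        print(host, "-> port", pick_port_l3(storage, host, 4))
```

Whatever the exact hash, a policy keyed on the IP pair sends all traffic between one source and one destination address over the same link, so throughput scales with the number of distinct address pairs rather than with a single flow.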

NFS, Projects, and Shares

When working with an Oracle ZFS Storage Appliance that has more than one disk shelf, try to split the workload across different disk pools and use the "no single point of failure" (NSPF) feature. This design will provide you with more storage resources as well as better I/O load balancing, performance, and throughput for your virtualized environment.

The following example uses only one disk shelf, a mirrored storage pool, one project and six different NFS shares. Table 4 lists the pool's projects and filesystem shares.

Table 4. Projects and Filesystem Shares Created for Performance Test

| Pool Name | Projects | Filesystems |
|---|---|---|
| Pool1 | winboot | /export/winboot |
| Pool1 | vswap | /export/vswap |
| Pool1 | ms-exchangedb | /export/ms-exchangedb |
| Pool1 | ms-log | /export/ms-log |
| Pool1 | linux-os | /export/linux-os |
| Pool1 | oltp-db | /export/oltp-db |

Figure 5 shows the share configuration and Figure 6 shows the filesystem and mountpoint configurations on the Oracle ZFS Storage Appliance browser user interface (BUI) for the performance tests. Details for the configuration choices follow.

Figure 5. Share configuration shown in Oracle ZFS Storage Appliance BUI

- Space Usage settings such as Quota and Reservation, as well as User or Group configuration details on the Oracle ZFS Storage Appliance side, are beyond the scope of this article. For more information on these settings, please refer to the Oracle ZFS Storage Appliance documentation (URLs are listed in the "See Also" section at the end of this article). However, as a best practice that includes security considerations, set up an NFS access control list (ACL) that permits the NFS shares to be mounted only by VMware ESXi 5.x hosts.

- Read-only: Leave unchecked.

- Update access time on read: Uncheck this option. This option is valid only for filesystems, and controls whether the access time for files is updated upon read. Under heavy loads consisting primarily of reads, and also over a large number of files, turning this option off may improve performance.

- Non-blocking mandatory locking: Do not select this option. This option is valid only for filesystems for which the primary protocol is SMB. SMB is not covered by this article.

- Data deduplication: Do not select this option.

- Data compression: Select the LZJB data compression algorithm. Before writing data to the storage pool, shares can optionally compress data using one of several compression algorithms.

Note: The LZJB algorithm is considered the fastest algorithm and it does not consume much CPU. The LZJB algorithm is recommended for virtualized environments.

- Checksum: Select the Fletcher4 (Standard) checksum algorithm. This feature controls the checksum algorithm used for data blocks and also allows the system to detect invalid data returned from devices. Working with the Fletcher4 algorithm, which is the default checksum algorithm, is sufficient for normal operations and can help avoid additional CPU load.

- Cache device usage: The "All data and metadata" option is recommended. With this option, all files, LUNs, and any metadata will be cached.

- Synchronous write bias: To provide fast response time, select the Latency option.

- Database record size: Configure this setting according to the following table:

Table 5. Database Record Size for Performance Test

| Pool Name | Projects | Filesystems | Database Record Size |
|---|---|---|---|
| Pool1 | vswap | /export/vswap | 64k |
| Pool1 | ms-exchangedb | /export/ms-exchangedb | 32k |
| Pool1 | ms-log | /export/ms-log | 128k |
| Pool1 | linux-os | /export/linux-os | 64k |
| Pool1 | oltp-db | /export/oltp-db | 8k |
| Pool1 | winboot | /export/winboot | 64k |

- Additional replication: To store a single copy of data blocks, select the Normal (Single Copy) option.

- Virus scan: Assuming that each virtual machine will have its own anti-virus software up and running, enabling virus scan here is not recommended. However, in a virtualized environment, this option can be enabled for an additional NFS share that hosts a Windows home directory or shared folder for all users.

Refer to the following document for more information if you want to enable this feature at the appliance level:

- Prevent destruction: By default this option is turned off. Enabling this option to prevent the NFS share from accidental destruction is recommended.

- Restrict ownership change: By default this option is turned on. Changing the ownership of virtual machine files is not recommended.

Figure 6. File systems and mountpoint configuration shown in Oracle ZFS Storage Appliance BUI
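The share settings recommended in this section, together with the record sizes in Table 5, can be collected into a small checklist and compared against what is actually configured. The sketch below is one way to do that; the dictionary keys are hypothetical labels chosen for this example (they are not appliance property names), and the `actual` values are made up.

```python
# Recommended values from this section; keys are illustrative labels only.
RECOMMENDED_SHARE_SETTINGS = {
    "read_only": False,                # Read-only: leave unchecked
    "update_access_time": False,       # Update access time on read: uncheck
    "nonblocking_mandatory_locking": False,
    "deduplication": False,
    "compression": "lzjb",
    "checksum": "fletcher4",
    "cache_device_usage": "all data and metadata",
    "synchronous_write_bias": "latency",
    "additional_replication": "normal (single copy)",
    "virus_scan": False,
    "prevent_destruction": True,
    "restrict_ownership_change": True,
}

# Database record size per filesystem, from Table 5.
RECORD_SIZE_BY_MOUNTPOINT = {
    "/export/vswap": "64k",
    "/export/ms-exchangedb": "32k",
    "/export/ms-log": "128k",
    "/export/linux-os": "64k",
    "/export/oltp-db": "8k",
    "/export/winboot": "64k",
}


def expected_settings(mountpoint: str) -> dict:
    """Recommended settings for one share, including its record size."""
    settings = dict(RECOMMENDED_SHARE_SETTINGS)
    settings["record_size"] = RECORD_SIZE_BY_MOUNTPOINT[mountpoint]
    return settings


def deviations(mountpoint: str, actual: dict) -> dict:
    """Map each differing setting to an (actual, recommended) pair."""
    expected = expected_settings(mountpoint)
    return {key: (actual.get(key), want)
            for key, want in expected.items()
            if actual.get(key) != want}


if __name__ == "__main__":
    actual = expected_settings("/export/oltp-db")
    actual.update(record_size="128k", compression="off")  # made-up misconfiguration
    for key, (have, want) in deviations("/export/oltp-db", actual).items():
        print(f"{key}: configured {have!r}, recommended {want!r}")
```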

Figure 7 shows the minimum recommended configuration of Oracle ZFS Storage Appliance for VMware vSphere 5.x working with NFS protocol. Note that "Oracle ZFS head" in the graphic refers to the Oracle ZFS Storage Appliance head.

Figure 7. Oracle ZFS Storage Appliance—minimum recommended configuration for VMware vSphere 5 working with NFS protocol

See Also

Refer to the following websites for further information on testing results for Oracle ZFS Storage Appliance:

- Oracle ZFS Storage Appliance website: http://www.oracle.com/storage/nas/

- Sun ZFS Storage Appliance Administration Guide: http://download.oracle.com/docs/cd/E22471_01/index.html

- Sun ZFS Storage 7000 Analytics Guide: http://docs.oracle.com/cd/E26765_01/pdf/E26398.pdf

- Sun ZFS Storage 7x20 Appliance Installation Guide: http://docs.oracle.com/cd/E26765_01/pdf/E26396.pdf

- Sun ZFS Storage 7x20 Appliance Customer Service Manual: http://docs.oracle.com/cd/E26765_01/pdf/E26399.pdf

- VMware website: http://www.vmware.com

- VMware vSphere 5.1 documentation: http://www.vmware.com/support/pubs/vsphere-esxi-vcenter-server-pubs.html

- VMware Knowledge Base: "Multipathing policies in ESX/ESXi 4.x and ESXi 5.x": http://kb.vmware.com/selfservice/microsites/search.do?language=en_US&cmd=displayKC&externalId=1011340

- VMware Knowledge Base: "Changing the queue depth for QLogic and Emulex HBAs": http://kb.vmware.com/selfservice/microsites/search.do?language=en_US&cmd=displayKC&externalId=1267

- Cisco: http://www.cisco.com

About the Author

Anderson Souza is a virtualization senior software engineer in Oracle's Application Integration Engineering group. He joined Oracle in 2012, bringing more than 14 years of technology industry, systems engineering, and virtualization expertise. Anderson has a Bachelor of Science in Computer Networking, a master's degree in Telecommunication Systems/Network Engineering, and also an MBA with a concentration in project management.