Technical Article

Getting Started with Touchscreen UIs in Java Platform, Micro Edition

by Vikram Goyal, Published August 2010

Learn about the methods in the Canvas class that work well with devices that have touch interfaces and view an application that exercises the basic touch interface methods in Java ME.

My head is inside a big box, and I feel claustrophobic. I am not sure how I am going to get out. Suddenly, I hear and feel some banging on the outside of the box that goes right through my head and I wonder if this will be the last time I use the diaper boxes to play make-believe with my 2-year-old daughter. Probably not. In a world where everything is going crazy at the same time, a bit of horseplay with kids can give us a real sense of priorities. It can also give you ideas for your work, which is why I am telling you this story.

You see, I thought for a bit about how to introduce this article on touchscreen UIs in Java Platform, Micro Edition (Java ME). Our senses are our most important tools, and my daughter is using all her senses to the maximum these days. After using her fingers to draw, mold, and paint, she moved on to touching my computer screen to move the letters she typed. She could not understand why the letters didn't move. So I took her to see an Apple iPad. The delight on her face was instantaneous when she realized she could use her fingers to move the images and type, and that she did not need any interface other than her hands. The idea of touch was enough to enable her to understand what she was doing. Now I have to figure out how to make her stop trying to do that at home on my computer screen (or buy her an iPad).

Since the launch of the evil "no Java Virtual Machine available" iPhone, almost every mobile manufacturer has released a mobile phone that has a touch interface. In this article, I will introduce you to the methods in the Canvas class that work well with devices that have touch interfaces. I will also create an application that will exercise the basic touch interface methods in Java ME and then build on the application to create an image scrolling application.

Touchscreens and Java ME

The idea of touchscreen interfaces is not new, of course. Back in 1999, I owned a Palm Pilot-type device that came with a stylus for drawing on the screen and making gestures. Touchscreens have come a long way since then, and Apple's iPhone, iPad, and iPod have brought about a revolution in screen manipulation using touch.

Java technology has, of course, provided the means to capture touch events from almost the beginning of Java ME (MIDP 1.0, when it was called J2ME) through the Canvas class.

The Canvas Class

The Canvas class is in the javax.microedition.lcdui package and provides low-level screen and graphic manipulation methods. The class itself is abstract, and subclasses must override the methods that they are interested in. The most important of these methods is the paint(Graphics g) method, which does the actual drawing of the graphics. For a link to a full tutorial on the Canvas class, see the See Also section.

Instead of the paint(Graphics g) method, in this article, we are interested in five other methods that help us to build touchscreen interfaces. These methods are hasPointerEvents(), hasPointerMotionEvents(), pointerDragged(int x, int y), pointerPressed(int x, int y), and pointerReleased(int x, int y). The initial idea of these methods was to deal with interfaces that were pointer enabled, that is, the user interacted with the interfaces using a pointing device (hence, the use of pointer), but these methods work just as well in nonpointing devices that are touch-enabled.

Of course, all of this depends on the device's implementation of the Canvas class. A touchscreen device from Motorola, for example, might not respond very well to a user's fingers as the primary pointing device, but a device from Nokia might. You have to double-check the device manufacturer's documentation to see what works best. Because the Canvas class provides only empty implementations of the pointerDragged(int x, int y), pointerPressed(int x, int y), and pointerReleased(int x, int y) methods, their behavior is left to your code. To make sure the implementation that you are working on supports touchscreen gestures, you can use the hasPointerEvents() and hasPointerMotionEvents() methods. The first of these methods returns true if the implementation supports the pointerPressed(int x, int y) and pointerReleased(int x, int y) methods, while the second method returns true if the pointerDragged(int x, int y) method is supported.
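The two capability checks can be folded into a single decision about which gestures an application should offer. The following is a minimal sketch in plain Java, so that it can be tried outside an emulator. PointerSupport, its constants, and classify are hypothetical names, not part of Java ME; in a MIDlet you would pass in the values returned by hasPointerEvents() and hasPointerMotionEvents() on your own Canvas subclass.

```java
// Hypothetical helper, not part of Java ME: maps the two capability flags
// reported by a Canvas to the level of touch interaction an app can offer.
final class PointerSupport {

    // Hypothetical constants for the three levels of support.
    static final int KEYS_ONLY = 0;     // no pointer events at all
    static final int TAP_ONLY = 1;      // press and release, but no drag
    static final int TAP_AND_DRAG = 2;  // press, release, and drag

    private PointerSupport() {
    }

    // hasPointerEvents: the value returned by Canvas.hasPointerEvents()
    // hasPointerMotionEvents: the value returned by Canvas.hasPointerMotionEvents()
    static int classify(boolean hasPointerEvents, boolean hasPointerMotionEvents) {
        if (!hasPointerEvents) {
            // Assumption: without press/release support, drag support is ignored.
            return KEYS_ONLY;
        }
        return hasPointerMotionEvents ? TAP_AND_DRAG : TAP_ONLY;
    }
}
```

An application that receives TAP_ONLY could, for example, fall back to switching images on a tap instead of relying on drag gestures.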

Getting Started with Pointer Events

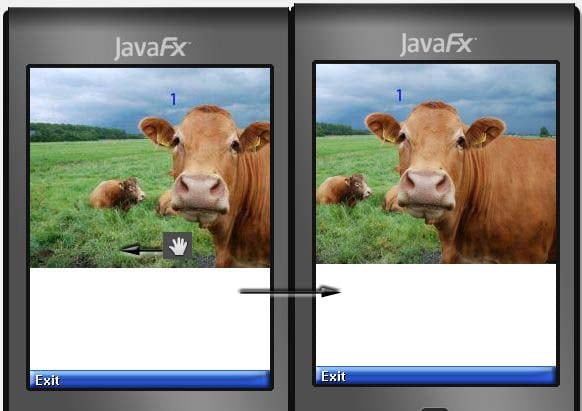

To get familiarized with these methods, let's create an echo MIDlet, which will tell us the location of pointer events when they happen. I am using Java ME SDK 3.0 and for the target phone, I have selected DefaultFxTouchPhone1. Figure 1 shows the MIDlet running in the emulator with the pointer pressed event captured, while Figure 2 captures the event when the pointer was released.

Figure 1: Capturing Pointer Events - Pointer Pressed

Figure 2: Capturing Pointer Events - Pointer Released

When you drag the pointer using your mouse, the status line shows the Dragged message with the corresponding location information. The source code for the relevant Canvas class is shown here:

import javax.microedition.lcdui.Canvas;
import javax.microedition.lcdui.Graphics;

class EchoCanvas extends Canvas {

    // location of the most recent pointer event
    int lastX = 0;
    int lastY = 0;

    // name of the most recent pointer event
    String action = "";

    protected void paint(Graphics g) {
        // clear the screen
        g.setGrayScale(255);
        g.fillRect(0, 0, getWidth(), getHeight());

        // draw a small circle centered on the last pointer location
        g.setStrokeStyle(Graphics.SOLID);
        g.setGrayScale(0);
        g.drawArc(lastX - 2, lastY - 2, 4, 4, 0, -360);

        // notify the location
        g.drawString(
            action + " At: (" + lastX + ", " + lastY + ")",
            0, getHeight() - 20, Graphics.TOP | Graphics.LEFT);
    }

    protected void pointerPressed(int x, int y) {
        setCoords(x, y, "Pressed");
    }

    protected void pointerReleased(int x, int y) {
        setCoords(x, y, "Released");
    }

    protected void pointerDragged(int x, int y) {
        setCoords(x, y, "Dragged");
    }

    // records the latest pointer event and calls repaint
    private void setCoords(int x, int y, String action) {
        this.lastX = x;
        this.lastY = y;
        this.action = action;
        repaint();
    }
}

Simple enough, isn't it? We use the pointer methods that the Canvas class provides for touch events to learn the coordinates of the last touch. In the case of pointerDragged(int x, int y), the coordinates are those of the most recent point in the drag. We use these coordinates to draw a small circle at that location and to update the status line at the bottom of the screen. The See Also section contains a link to the full MIDlet class.
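The bookkeeping in setCoords does not depend on any drawing code, so it can be checked outside an emulator. The following is a minimal sketch in plain Java; EchoState and its methods are hypothetical names that mirror the fields of EchoCanvas, not part of the original MIDlet.

```java
// Hypothetical plain-Java model of the state kept by EchoCanvas.
final class EchoState {

    private int lastX;
    private int lastY;
    private String action = "";

    // Same job as setCoords in EchoCanvas, minus the repaint() call.
    void record(int x, int y, String action) {
        this.lastX = x;
        this.lastY = y;
        this.action = action;
    }

    // Text shown in the status line at the bottom of the canvas.
    String statusLine() {
        return action + " At: (" + lastX + ", " + lastY + ")";
    }

    // drawArc takes the top-left corner of the circle's bounding box, so a
    // circle centered on the touch starts half a diameter to the left...
    int arcLeft(int diameter) {
        return lastX - diameter / 2;
    }

    // ...and half a diameter above the touch location.
    int arcTop(int diameter) {
        return lastY - diameter / 2;
    }
}
```

This is why the listing passes lastX - 2 and lastY - 2 to drawArc for a circle that is 4 pixels wide: the offsets center the circle on the pointer location.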

Using Pointer Events to Create a Touchscreen Image Browser

Using the pointer events within the Canvas class, it is easy to extend the concept to touchscreen devices, as you saw in the previous section. Now, it just depends on how you utilize this information to create more-advanced applications. In this section, we will do just that to create a touchscreen photo browser, similar to the one found on the iPad and iPhone.

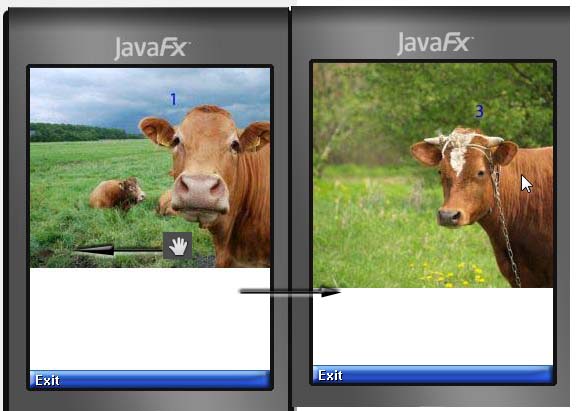

To start with, let's look at the finished screenshots to see what we are trying to achieve. Figure 3 shows how the MIDlet looks when it is loaded up and the effect of trying to move the loaded image slightly to the left.

Figure 3: Moving the Loaded Image Less than 10 Clicks Reveals the Rest of the Image

Since I have chosen images that are wider than my screen, the effect that I wanted to create was scrolling. To that end, if you drag the image no more than 10 clicks (that is, 10 pixels of pointer movement), the image will scroll to show the rest of the image. If you drag it more than 10 clicks, another image in the library of images will load up. This is shown in Figure 4. The effect here is to replicate flicking through a library of images one by one with our fingers.
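The 10-click rule can be expressed as a small pure function, which makes the boundary case easy to see. The following is a minimal sketch in plain Java; SwipeClassifier and its constants are hypothetical names, and the direction mapping mirrors the comparison used in the PhotoCanvas listing (a release to the right of the press advances to the next image).

```java
// Hypothetical helper, not part of the original MIDlet: decides what a
// horizontal gesture means, based on where it started and where it ended.
final class SwipeClassifier {

    // Movement of up to this many pixels only scrolls the current image.
    static final int THRESHOLD = 10;

    // Hypothetical result codes.
    static final int SCROLL = 0;
    static final int NEXT_IMAGE = 1;
    static final int PREVIOUS_IMAGE = 2;

    private SwipeClassifier() {
    }

    static int classify(int pressX, int releaseX) {
        int moved = releaseX - pressX;
        if (Math.abs(moved) <= THRESHOLD) {
            return SCROLL;
        }
        // Same convention as PhotoCanvas: pressX < releaseX advances.
        return moved > 0 ? NEXT_IMAGE : PREVIOUS_IMAGE;
    }
}
```

A movement of exactly 10 pixels still counts as a scroll; only the eleventh pixel tips the gesture into an image change.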

Figure 4: Moving the Image More than 10 Clicks Shows the Next Image in the Library

Knowing what our end result is makes it easier to figure out what we need to do to put it in action. The following listing shows the code for the actual Canvas class that does all the heavy lifting.

import java.io.IOException;

import javax.microedition.lcdui.Image;
import javax.microedition.lcdui.Canvas;
import javax.microedition.lcdui.Graphics;

public class PhotoCanvas extends Canvas {

    private boolean scroll = false;
    private int current_displayed_image = 0;

    // our library of images
    private Image[] images;

    private int pressX, releaseX, dragX = 0;

    public PhotoCanvas() {
        // load up the images
        loadImages();
    }

    protected void paint(Graphics g) {
        // clear the screen
        g.setGrayScale(255);
        g.fillRect(0, 0, getWidth(), getHeight());

        // are we scrolling?
        if (scroll) {
            g.drawImage(
                images[current_displayed_image],
                -dragX, 0,
                Graphics.LEFT | Graphics.TOP);
            scroll = false;
            return;
        }

        // we are moving images
        if (pressX < releaseX) { // move right
            current_displayed_image++;
            if (current_displayed_image == images.length) {
                current_displayed_image = 0;
            }
        }

        if (pressX > releaseX) { // move left
            current_displayed_image--;
            if (current_displayed_image < 0) {
                current_displayed_image = (images.length - 1);
            }
        }

        // draw the new moved image
        g.drawImage(
            images[current_displayed_image],
            0, 0,
            Graphics.LEFT | Graphics.TOP);
    }

    // store the location of the start of the touch
    protected void pointerPressed(int x, int y) {
        pressX = x;
    }

    // where did we release and have we moved far enough to justify new image?
    protected void pointerReleased(int x, int y) {
        if (scroll) {
            return;
        }
        releaseX = x;
        if (Math.abs(releaseX - pressX) > 10) {
            repaint();
        }
    }

    // did we drag the pointer just to scroll the image?
    protected void pointerDragged(int x, int y) {
        int deltaX = pressX - x;
        if (Math.abs(deltaX) <= 10) {
            scrollImage(deltaX);
        }
    }

    // handles scrolling
    private void scrollImage(int deltaX) {
        int imageWidth = images[current_displayed_image].getWidth();
        if (imageWidth > getWidth()) {
            dragX += deltaX;
            if (dragX < 0) {
                dragX = 0;
            } else if (dragX + getWidth() > imageWidth) {
                dragX = imageWidth - getWidth();
            }
        }
        scroll = true;
        repaint();
    }

    // loads up the images at start
    private void loadImages() {
        images = new Image[3];
        try {
            images[0] = Image.createImage("/cow1.jpg");
            images[1] = Image.createImage("/cow2.jpg");
            images[2] = Image.createImage("/cow3.jpg");
        } catch (IOException ex) {
            ex.printStackTrace();
        }
    }
}

The pointerPressed, pointerReleased, and pointerDragged methods collectively set the various parameters that paint() uses to decide whether to scroll the current image or switch to another one. In the pointerPressed method, we store the x-axis location where the user started the touch action. We then use the logic within the pointerReleased and pointerDragged methods to figure out how far the user's finger has moved along the image (deltaX). If the movement is over 10 clicks (pixels), we know we need to show a different image: the next or the previous one, depending on the direction. Otherwise, we are content with scrolling the image along the x axis. This movement can happen in both directions (negative or positive x). I have deliberately ignored the movement along the y axis to keep things simple.
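Two pieces of arithmetic in PhotoCanvas are easy to get wrong at the edges: keeping the scroll offset inside the image, and wrapping the image index around the ends of the library. The following is a minimal sketch of both in plain Java; BrowserMath, clampOffset, and wrapIndex are hypothetical names, and returning 0 for an image that already fits on the screen is a simplification of the listing, which leaves dragX untouched in that case.

```java
// Hypothetical helper, not part of the original MIDlet: the edge-case
// arithmetic from PhotoCanvas, isolated so it can be checked on its own.
final class BrowserMath {

    private BrowserMath() {
    }

    // Keeps the horizontal scroll offset between 0 and the widest offset
    // that still fills the screen with image pixels.
    static int clampOffset(int offset, int imageWidth, int screenWidth) {
        if (imageWidth <= screenWidth) {
            return 0; // simplification: nothing to scroll
        }
        if (offset < 0) {
            return 0;
        }
        if (offset + screenWidth > imageWidth) {
            return imageWidth - screenWidth;
        }
        return offset;
    }

    // Wraps an index that has stepped one place past either end of the
    // library, as paint() does in PhotoCanvas.
    static int wrapIndex(int index, int count) {
        if (index >= count) {
            return 0;
        }
        if (index < 0) {
            return count - 1;
        }
        return index;
    }
}
```

With a 300-pixel-wide image on a 240-pixel-wide screen, for example, the offset can never exceed 60, so the right edge of the image never pulls away from the right edge of the screen.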

Conclusion

This article introduced you to the touch interface in the Canvas class for capturing pointer movements in graphical user interfaces. Another class, CustomItem, provides methods to capture similar pointer movements in forms, where you can mix them with standard items. Unfortunately, neither one of them provides multi-touch events at the moment, and it is hoped that this will be addressed in the future.

Java ME has always had the ability, via the Canvas class, to cater to touch interfaces. It was the actual implementations of this class provided by various manufacturers that did not keep pace with this ability. Finally, we are seeing improvements in this field. I hope this article has encouraged you to develop your own touch interface-enabled MIDlets. Good luck!

About the Author

Vikram Goyal is the author of Pro Java ME MMAPI: Mobile Media API for Java Micro Edition, published by Apress. This book explains how to add multimedia capabilities to Java technology-enabled phones. Vikram is also the author of the Jakarta Commons Online Bookshelf, and he helps manage a free craft projects Web site.