How to Set Up a Load-Balanced Application Across Two Oracle Solaris Zones

Published June 2012

How to add high availability to your application by using Oracle Solaris Zones and the Integrated Load Balancer in Oracle Solaris 11.

This article describes how to combine the built-in Integrated Load Balancer (ILB) with Oracle Solaris Zones and the new network virtualization capabilities of Oracle Solaris 11 to set up a virtual server on a single system. This article starts with a brief overview of ILB and follows with an example of setting up a virtual Apache Tomcat server instance. You will need a basic knowledge of Oracle Solaris Zones and networking administration.

Overview of the Integrated Load Balancer

ILB provides Layer 3 and Layer 4 load-balancing capabilities—that is, it operates at the network (IP) and transport (TCP/UDP) layers—for the Oracle Solaris operating system installed on SPARC-based and x86-based systems. ILB intercepts incoming requests from clients, decides which back-end server should handle the requests based on load-balancing rules, and then forwards the requests to the selected server. ILB performs optional health checks and provides the data for the load-balancing algorithms to verify whether the selected server can handle the incoming requests.
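To make the selection step concrete, here is a minimal sketch of round-robin selection written as a shell function. It is only an illustration of the idea, not ILB's implementation (ILB makes this decision in its kernel module), and the function name `pick_backend` is hypothetical.

```shell
#!/bin/sh
# Conceptual sketch only: choose a back end for the Nth request, round-robin.
# This is NOT how ILB is implemented; it only illustrates the idea.

# pick_backend REQUEST_NUMBER SERVER...
pick_backend() {
    n=$1
    shift
    # At least one server is required.
    [ $# -gt 0 ] || return 1
    # Drop (n mod server-count) servers so the chosen one becomes $1.
    shift $(( $n % $# ))
    printf '%s\n' "$1"
}

for req in 0 1 2 3; do
    printf 'request %s -> %s\n' "$req" \
        "$(pick_backend "$req" 192.168.1.50:8080 192.168.1.60:8080)"
done
```

Successive requests alternate between the two addresses. A real load balancer would also skip servers that its health checks have marked as dead.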

Key Features

- Supports the stateless Direct Server Return (DSR) and Network Address Translation (NAT) modes of operation for both IPv4 and IPv6.

- Enables ILB administration through a command-line interface (CLI).

- Provides server monitoring capabilities through health checks.

Note: One reason for using the full-NAT mode is that no special routing needs to be set up outside of the ILB box. For example, if department A administers the ILB box but a different department owns the back-end servers and the network, department A can use full-NAT mode to do the load balancing. All requests first go to the ILB box; ILB then changes the destination IP addresses of the requests to the IP addresses owned by the back-end servers, replaces the source IP addresses of the requests with an address owned by ILB, and forwards the requests to the back-end servers. The back-end servers treat the requests as if they were sent from an internal system, so they need neither special setup nor special routing.

Major Components

- ilbadm CLI: You can use this interface to configure load-balancing rules, perform optional health checks, and view statistics.

- libilb configuration library: ilbadm and other third-party applications can use the functionality implemented in libilb for ILB administration.

- ilbd daemon: This daemon performs the following tasks:

  - Manages persistent configuration

  - Provides serial access to the ILB kernel module by processing the configuration information and sending it to the ILB kernel module for execution

  - Performs health checks and provides the results to the ILB kernel module so that the load distribution is properly adjusted

Further information about ILB can be found in the Oracle Solaris Administration: IP Services guide.

Virtual Apache Tomcat Server Example

This example uses the half-NAT mode: as traffic reaches ILB, ILB rewrites the destination IP address to that of the selected real server and preserves the source IP address. Because the back-end servers see the real client address, this mode is usually preferred for server logging (see "ILB Operation Modes" in the Oracle Solaris Administration: IP Services guide for more information).
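The difference between half-NAT and full-NAT can be sketched by printing the addresses a back-end server would see in each mode. This is a conceptual illustration only. The function name `rewrite`, the client address 10.0.2.99, and the use of 192.168.1.21 as the ILB-owned source address are assumptions made for the example.

```shell
#!/bin/sh
# Conceptual sketch: the source and destination IP addresses that a
# back-end server sees after the load balancer has rewritten a request.
# Not ILB code; all names and the client address are hypothetical.

# rewrite MODE CLIENT_IP BACKEND_IP [ILB_SOURCE_IP]
rewrite() {
    mode=$1 client=$2 backend=$3 proxy=${4:-}
    case $mode in
        # Half-NAT: only the destination changes; the client address survives.
        half-nat) printf 'src=%s dst=%s\n' "$client" "$backend" ;;
        # Full-NAT: the source is also replaced with an ILB-owned address.
        full-nat) printf 'src=%s dst=%s\n' "$proxy" "$backend" ;;
        *) printf 'unknown mode: %s\n' "$mode" >&2; return 1 ;;
    esac
}

# A request from client 10.0.2.99 to the VIP, delivered to 192.168.1.50:
rewrite half-nat 10.0.2.99 192.168.1.50
rewrite full-nat 10.0.2.99 192.168.1.50 192.168.1.21
```

In the half-NAT line the source is still the client, which is why the Tomcat access logs remain useful in this example.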

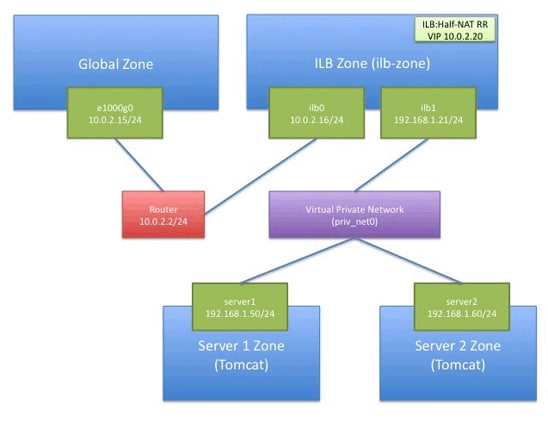

This example will load balance incoming traffic to the virtual server (IP address 10.0.2.20) across two zones, each running the Apache Tomcat server. The load balancer itself will be configured as a multihomed zone (ilb-zone), as shown in Figure 1. One interface of ilb-zone (10.0.2.16/24) is connected to the outside network. The other interface (192.168.1.21/24) is connected to the virtual network hosted in the same system. In ILB, the address 10.0.2.20 is referred to as the virtual IP address (VIP) of a load balancing rule.

Figure 1. Diagram of the Virtual Apache Tomcat Server Example

Step 1: Create the Virtual Network Interfaces (VNICs)

The first step is to create a series of virtual network interfaces (VNICs) and a virtual switch (etherstub) for the different zones:

root@solaris:~# dladm create-vnic -l e1000g0 ilb0

root@solaris:~# dladm create-etherstub priv_net0

root@solaris:~# dladm create-vnic -l priv_net0 ilb1

root@solaris:~# dladm create-vnic -l priv_net0 server1

root@solaris:~# dladm create-vnic -l priv_net0 server2

The etherstub priv_net0 acts as the virtual network inside the system connecting the ILB zone and the two zones running the Apache Tomcat servers. This means that the Apache Tomcat servers can be accessed only via the ilb-zone.
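If you expect to add more server zones later, the same topology can be generated rather than typed. The following sketch only prints the dladm commands so they can be reviewed first. The function name `plan_vnics` is hypothetical, and nothing is executed on the system.

```shell
#!/bin/sh
# Print (do not run) the dladm commands for one external VNIC plus an
# etherstub carrying any number of private VNICs.

# plan_vnics PHYS_LINK EXTERNAL_VNIC ETHERSTUB PRIVATE_VNIC...
plan_vnics() {
    phys=$1 ext=$2 stub=$3
    shift 3
    printf 'dladm create-vnic -l %s %s\n' "$phys" "$ext"
    printf 'dladm create-etherstub %s\n' "$stub"
    # Every remaining argument becomes a VNIC on the etherstub.
    for vnic in "$@"; do
        printf 'dladm create-vnic -l %s %s\n' "$stub" "$vnic"
    done
}

# Reproduces the five commands used in this step.
plan_vnics e1000g0 ilb0 priv_net0 ilb1 server1 server2
```

Once the printed commands look right, they could be piped to `sh` in the global zone.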

Step 2: Create the Zones

If you don't already have a file system for your zones, run the following command:

root@solaris:~# zfs create -o mountpoint=/zones rpool/zones

Then create the ILB zone, as shown in Listing 1:

root@solaris:~# zonecfg -z ilb-zone

ilb-zone: No such zone configured

Use 'create' to begin configuring a new zone.

zonecfg:ilb-zone> create

zonecfg:ilb-zone> set zonepath=/zones/ilb-zone

zonecfg:ilb-zone> add net

zonecfg:ilb-zone:net> set physical=ilb0

zonecfg:ilb-zone:net> end

zonecfg:ilb-zone> add net

zonecfg:ilb-zone:net> set physical=ilb1

zonecfg:ilb-zone:net> end

zonecfg:ilb-zone> verify

zonecfg:ilb-zone> exit

Listing 1. Creating the ILB Zone

Create the server zones, server1-zone and server2-zone, as shown in Listing 2:

root@solaris:~# zonecfg -z server1-zone

server1-zone: No such zone configured

Use 'create' to begin configuring a new zone.

zonecfg:server1-zone> create

zonecfg:server1-zone> set zonepath=/zones/server1-zone

zonecfg:server1-zone> add net

zonecfg:server1-zone:net> set physical=server1

zonecfg:server1-zone:net> end

zonecfg:server1-zone> verify

zonecfg:server1-zone> exit

root@solaris:~# zonecfg -z server2-zone

server2-zone: No such zone configured

Use 'create' to begin configuring a new zone.

zonecfg:server2-zone> create

zonecfg:server2-zone> set zonepath=/zones/server2-zone

zonecfg:server2-zone> add net

zonecfg:server2-zone:net> set physical=server2

zonecfg:server2-zone:net> end

zonecfg:server2-zone> verify

zonecfg:server2-zone> exit

Listing 2. Creating the Server Zones
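The interactive sessions in Listings 1 and 2 differ only in the zone name and the VNICs, so the subcommands can also be generated and passed to zonecfg as a command file with its -f option. The sketch below prints such a file. The function name `zone_cmds` and the /tmp path in the usage note are hypothetical, and the sketch assumes that the subcommands shown in the listings behave the same way when read from a file.

```shell
#!/bin/sh
# Print a zonecfg command file for a zone that lives under /zones and
# uses the given pre-created VNICs. Nothing is applied to the system.

# zone_cmds ZONE_NAME VNIC...
zone_cmds() {
    zone=$1
    shift
    printf 'create\n'
    printf 'set zonepath=/zones/%s\n' "$zone"
    # One net resource per VNIC, mirroring the interactive session.
    for vnic in "$@"; do
        printf 'add net\nset physical=%s\nend\n' "$vnic"
    done
    printf 'verify\ncommit\n'
}

# The multihomed ILB zone from Listing 1:
zone_cmds ilb-zone ilb0 ilb1
```

To apply the result, save the output (for example, `zone_cmds server1-zone server1 > /tmp/server1-zone.cfg`) and run `zonecfg -z server1-zone -f /tmp/server1-zone.cfg` in the global zone.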

Step 3: Install the ILB Zone (ilb-zone)

Now that we have configured the zones, we need to install them. We will use the default zone system configuration, which will interactively ask us a number of questions during first boot. Once we have done one zone installation (in this case, the ilb-zone), we can quickly clone the zone in a few seconds.

root@solaris:~# zoneadm -z ilb-zone install

A ZFS file system has been created for this zone.

Progress being logged to /var/log/zones/zoneadm.20111107T101755Z.ilb-zone.install

Image: Preparing at /zones/ilb-zone/root.

Install Log: /system/volatile/install.9968/install_log

AI Manifest: /tmp/manifest.xml.QBaGZe

SC Profile: /usr/share/auto_install/sc_profiles/enable_sci.xml

Zonename: ilb-zone

Installation: Starting ...

Creating IPS image

Installing packages from:

solaris

origin: http://pkg.oracle.com/solaris/release

...

...

Done: Installation completed in 605.138 seconds.

Next Steps: Boot the zone, then log into the zone console (zlogin -C) to

complete the configuration process

Log saved in non-global zone as /zones/ilb-zone/root/var/log/zones/zoneadm.20111107T101755Z.ilb-zone.install

Listing 3. Installing the Zones

Let's now boot up ilb-zone and check the status of the zones we've created:

root@solaris:~# zoneadm -z ilb-zone boot

root@solaris:~# zoneadm list -cv

ID NAME STATUS PATH BRAND IP

0 global running / solaris shared

1 ilb-zone running /zones/ilb-zone solaris excl

- server1-zone configured /zones/server1-zone solaris excl

- server2-zone configured /zones/server2-zone solaris excl

root@solaris:~# zlogin -C ilb-zone

[Connected to zone 'ilb-zone' console]

Once we log in to the zone, we are immediately prompted by the system configuration tool. This interactive tool walks through various system configuration tasks. In this example, we use the following configuration for the ilb-zone zone:

- The host name is ilb-zone.

- The network interface ilb0 has an IP address of 10.0.2.16/24 with a default route of 10.0.2.2.

- The network interface ilb1 has an IP address of 192.168.1.21/24.

- The name service is configured to use DNS with the same name servers configured in the global zone.

The system configuration tool supports providing configuration for only one network interface; in this case, we will configure ilb0. After the initial configuration has been applied, we have to log in to the zone to provide the additional configuration for ilb1, as follows:

root@ilb-zone:~# ipadm create-ip ilb1

root@ilb-zone:~# ipadm create-addr -T static -a local=192.168.1.21/24 ilb1/v4

Step 4: Install the First Server Zone (server1-zone)

We will create the first server zone, server1-zone, as a clone of the ilb-zone. After we clone the zone, we will configure server1-zone and create server2-zone as a clone of server1-zone. We will first shut down ilb-zone and clone it. Then we will log in to the newly cloned server1-zone to finish the final configuration for that zone:

root@solaris:~# zoneadm -z ilb-zone shutdown

root@solaris:~# zoneadm -z server1-zone clone ilb-zone

A ZFS file system has been created for this zone.

Progress is being logged to /var/log/zones/zoneadm.20120428T004843Z.server1-zone

Log saved in non-global zone as /zones/server1-zone/var/log/zones/zoneadm.20120428T004843Z.server1-zone

root@solaris:~# zoneadm -z server1-zone boot

root@solaris:~# zlogin -C server1-zone

[Connected to zone 'server1-zone' console]

As we experienced previously, the system configuration tool is launched, and we can do the final configuration for server1-zone:

- The host name is server1-zone.

- The network interface server1 has an IP address of 192.168.1.50/24 with a default route of 192.168.1.21.

- The name service is set to none.

ILB acts as the forwarding agent for traffic between the outside network (via ilb0) and the private network (via ilb1), so IP forwarding must be enabled in ilb-zone. This example uses IPv4 addresses, so only IPv4 forwarding needs to be enabled, as shown in Listing 4. Run the commands in the ilb-zone non-global zone (you will need to boot the zone and log in):

root@ilb-zone:~# routeadm -u -e ipv4-forwarding

root@ilb-zone:~# routeadm

Configuration Current Current

Option Configuration System State

---------------------------------------------------------

IPv4 routing disabled disabled

IPv6 routing disabled disabled

IPv4 forwarding enabled enabled

IPv6 forwarding disabled disabled

Routing services "route:default ripng:default"

Routing daemons:

STATE FMRI

disabled svc:/network/routing/legacy-routing:ipv4

disabled svc:/network/routing/legacy-routing:ipv6

disabled svc:/network/routing/ripng:default

online svc:/network/routing/ndp:default

disabled svc:/network/routing/route:default

disabled svc:/network/routing/rdisc:default

Listing 4. Enabling IPv4 Forwarding
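When this setup is scripted, the forwarding state can be checked by reading the table that routeadm prints. The sketch below is one way to do that. It assumes the column layout shown in Listing 4 (option name, current configuration, current system state), and the function name `forwarding_state` is hypothetical.

```shell
#!/bin/sh
# Extract the current system state of IPv4 forwarding from routeadm's
# table output, read on standard input. Assumes the layout of Listing 4.
forwarding_state() {
    # Fields: "IPv4" "forwarding" <configured state> <system state>
    awk '$1 == "IPv4" && $2 == "forwarding" { print $4 }'
}

# Demonstration with lines copied from Listing 4:
forwarding_state <<'EOF'
IPv4 routing disabled disabled
IPv6 routing disabled disabled
IPv4 forwarding enabled enabled
IPv6 forwarding disabled disabled
EOF
```

In ilb-zone the function would be used as `routeadm | forwarding_state`, and a script could stop early if the result is not `enabled`.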

Step 5: Check Internet Access from the ILB Zone

Test whether you can ping the outside world from within the ILB zone, ilb-zone:

root@ilb-zone:~# ping www.oracle.com

www.oracle.com is alive

Step 6: Install Tomcat into the First Server Zone (server1-zone)

Apache Tomcat is the service we will load balance, so we need to install it into server1-zone using the package manager, pkg, as shown in Listing 5. We do not need network connectivity in server1-zone because the package manager proxies all connections through the global zone. If the software has been installed into other zones, it will be cached locally; otherwise, the software is downloaded through the global zone from configured package repositories on the network.

root@server1-zone:~# pkg install runtime/java tomcat tomcat-examples

Packages to install: 17

Create boot environment: No

Create backup boot environment: No

Services to change: 5

DOWNLOAD PKGS FILES XFER (MB)

Completed 17/17 3381/3381 56.3/56.3

PHASE ACTIONS

Install Phase 4269/4269

PHASE ITEMS

Package State Update Phase 17/17

Image State Update Phase 2/2

Loading smf(5) service descriptions: 1/1

Listing 5. Installing the Apache Tomcat Service

Once Apache Tomcat has been successfully installed, we need to enable the tomcat6 instance of the svc:/network/http SMF service as follows:

root@server1-zone:~# svcadm enable http:tomcat6

As a summary of our status, let's list the zones from the global zone:

root@solaris:~# zoneadm list -cv

ID NAME STATUS PATH BRAND IP

0 global running / solaris shared

1 ilb-zone running /zones/ilb-zone solaris excl

2 server1-zone running /zones/server1-zone solaris excl

- server2-zone configured /zones/server2-zone solaris excl

Step 7: Configure Routing to the First Server Zone (server1-zone) for Testing

From the global zone, we need to be able to reach server1-zone to verify that it is running properly, so we will add a new route. We use the route -p command to make a routing change that is persistent across network restarts, as follows:

root@solaris:~# route -p add 192.168.1.0 10.0.2.16

add net 192.168.1.0: gateway 10.0.2.16

add persistent net 192.168.1.0: gateway 10.0.2.16

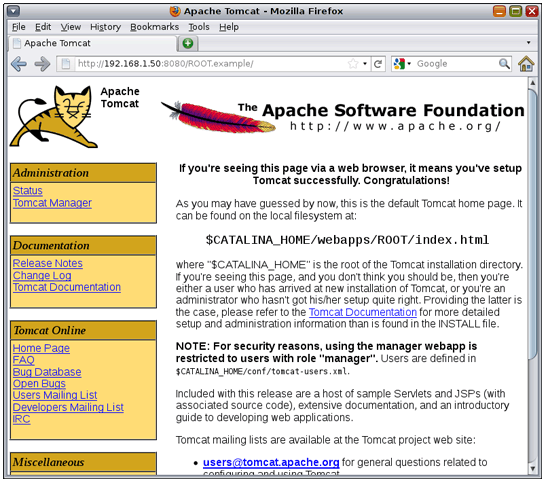

With this change, you should now be able to reach the Apache Tomcat service running in server1-zone from the global zone via ilb-zone, as shown in Figure 2.

Figure 2. Apache Tomcat Welcome Screen

Step 8: Cloning the Apache Tomcat Instance

Now that we have a successful instance of the Apache Tomcat server running in server1-zone, we can quickly create another instance in server2-zone. First, we will shut down server1-zone so we can clone it, and then we will boot both zones and log in to the new server2-zone, as shown in Listing 6:

root@solaris:~# zoneadm -z server1-zone shutdown

root@solaris:~# zoneadm -z server2-zone clone server1-zone

root@solaris:~# zoneadm -z server1-zone boot

root@solaris:~# zoneadm -z server2-zone boot

root@solaris:~# zoneadm list -cv

ID NAME STATUS PATH BRAND IP

0 global running / solaris shared

1 ilb-zone running /zones/ilb-zone solaris excl

2 server1-zone running /zones/server1-zone solaris excl

3 server2-zone running /zones/server2-zone solaris excl

root@solaris:~# zlogin -C server2-zone

[Connected to zone 'server2-zone' console]

Listing 6. Creating Another Apache Tomcat Instance

Once again, we use the system configuration tool to finish the configuration of server2-zone with the following:

- The host name is server2-zone.

- The network interface server2 has an IP address of 192.168.1.60/24 with a default route of 192.168.1.21 (interface ilb1 in ilb-zone).

- The name service is set to none.

Within minutes, we have a new zone and Apache Tomcat is already up and running (confirmed using svcs from server2-zone):

root@server2-zone:~# svcs http:tomcat6

STATE STIME FMRI

online 9:05:44 svc:/network/http:tomcat6

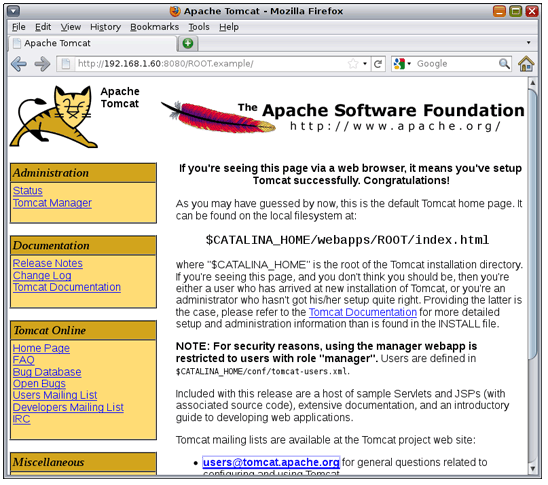

The Apache Tomcat instance can also be reached from the global zone, as shown in Figure 3.

Figure 3. Reaching Apache Tomcat from the Global Zone

It's easy to see how Oracle Solaris 11 can be used to efficiently deploy application instances in an instant, which is ideal for cloud-type environments.

Step 9: Configure Load Balancing for the Apache Tomcat Servers

Now that we have two instances of Apache Tomcat running in their own zones, let's set up load balancing to scale traffic between them. In the ILB zone, ilb-zone, we start by installing the ILB package using the package manager (pkg), as shown in Listing 7.

root@ilb-zone:~# pkg install ilb

Packages to install: 1

Create boot environment: No

Create backup boot environment: No

Services to change: 1

DOWNLOAD PKGS FILES XFER (MB)

Completed 1/1 23/23 0.2/0.2

PHASE ACTIONS

Install Phase 55/55

PHASE ITEMS

Package State Update Phase 1/1

Image State Update Phase 2/2

Load smf(5) service descriptions: 1/1

Listing 7. Installing the ILB Package

Let's now enable the ILB service and define a server group, tomcatgroup, for our two Apache Tomcat instances in server1-zone and server2-zone.

root@ilb-zone:~# svcadm enable ilb

root@ilb-zone:~# ilbadm create-servergroup -s servers=192.168.1.50:8080,192.168.1.60:8080 tomcatgroup

root@ilb-zone:~# ilbadm show-servergroup

SGNAME SERVERID MINPORT MAXPORT IP_ADDRESS

tomcatgroup _tomcatgroup.0 8080 8080 192.168.1.50

tomcatgroup _tomcatgroup.1 8080 8080 192.168.1.60

The next step is to define the load-balancing rule, which can often be the most complicated part of the process. To start with, let's define a simple rule called tomcatrule_rr. The rule is enabled and persistent, matches incoming packets for the virtual destination IP address and port 10.0.2.20:80, uses the "round robin" load-balancing algorithm and the HALF-NAT topology, and sends traffic to the tomcatgroup server group.

root@ilb-zone:~# ilbadm create-rule -e -p -i vip=10.0.2.20,port=80 -m lbalg=rr,type=HALF-NAT,pmask=32 -o servergroup=tomcatgroup tomcatrule_rr

root@ilb-zone:~# ilbadm show-rule

RULENAME STATUS LBALG TYPE PROTOCOL VIP PORT

tomcatrule_rr E roundrobin HALF-NAT TCP 10.0.2.20 80
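Because the create-rule command line is long and easy to mistype, it can help to assemble it from named pieces. The following sketch prints the command instead of running it. The function name `build_rule` is hypothetical. The options it emits are exactly the ones used in this article, with the optional last argument being the name of a health check, which is covered in Step 11.

```shell
#!/bin/sh
# Assemble (but do not run) an ilbadm create-rule command line using the
# same options as this article: enabled (-e), persistent (-p), pmask=32.

# build_rule NAME VIP PORT ALGORITHM TYPE SERVERGROUP [HEALTHCHECK]
build_rule() {
    name=$1 vip=$2 port=$3 alg=$4 type=$5 group=$6 hc=${7:-}
    cmd="ilbadm create-rule -e -p -i vip=$vip,port=$port"
    cmd="$cmd -m lbalg=$alg,type=$type,pmask=32"
    # Attach a health check only when one was named.
    if [ -n "$hc" ]; then
        cmd="$cmd -h hc-name=$hc"
    fi
    printf '%s -o servergroup=%s %s\n' "$cmd" "$group" "$name"
}

build_rule tomcatrule_rr 10.0.2.20 80 rr HALF-NAT tomcatgroup
```

The printed line matches the command entered above and can be compared against it before being run in ilb-zone.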

We can show more details about the new rule that we created using the -f option, as shown in Listing 8.

root@ilb-zone:~# ilbadm show-rule -f

RULENAME: tomcatrule_rr

STATUS: E

PORT: 80

PROTOCOL: tcp

LBALG: roundrobin

TYPE: HALF-NAT

PROXY-SRC: --

PMASK: /32

HC-NAME: --

HC-PORT: --

CONN-DRAIN: 0

NAT-TIMEOUT: 120

PERSIST-TIMEOUT: 0

SERVERGROUP: tomcatgroup

VIP: 10.0.2.20

SERVERS: _tomcatgroup.0,_tomcatgroup.1

Listing 8. Details of the New Rule

Finally, we need to tell the outside world that packets destined for our VIP address, 10.0.2.20, should be sent to our network interface, ilb0. First, we must find the MAC address of ilb0 from the ilb-zone, as follows:

root@ilb-zone:~# dladm show-vnic ilb0

LINK OVER SPEED MACADDRESS MACADDRTYPE VID

ilb0 ? 1000 2:8:20:4e:fa:fd random 0

Once we have the MAC address, in this case, 2:8:20:4e:fa:fd, we must apply a published permanent internet-to-MAC address translation using arp.

root@ilb-zone:~# arp -s 10.0.2.20 2:8:20:4e:fa:fd pub permanent

Step 10: Verify the Load Balancing

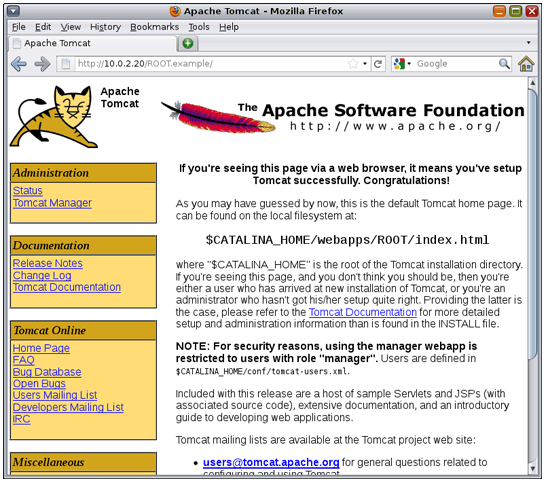

We can check to see whether our two Apache Tomcat instances have been load balanced by pointing our browser to virtual IP address 10.0.2.20 to see whether we get the Apache Tomcat welcome page, as shown in Figure 4.

Figure 4. Apache Tomcat Welcome Page

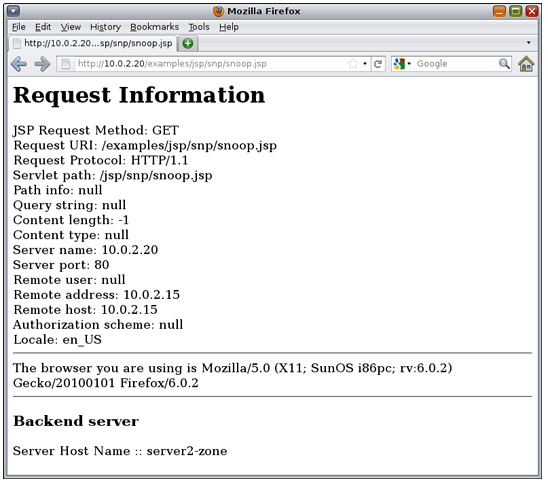

Very cool! But how do we know what instance it was served from? We can easily check this by making a quick change to one of the example scripts in the Apache Tomcat document root, /var/tomcat6/webapps/examples/jsp/snp/snoop.jsp, on both server1-zone and server2-zone. We'll add the following lines, which will print the server host name of the Apache Tomcat instance the request was served by:

<h3>Backend server</h3>

<%@ page import="java.net.InetAddress" %>

<%

InetAddress ina = InetAddress.getLocalHost();

out.println("Server Host Name :: "+ina.getHostName());%>

Once we make the changes, we can bring up the script on our virtual IP address, http://10.0.2.20/examples/jsp/snp/snoop.jsp, to see the result, as shown in Figure 5.

Figure 5. Checking the Server Host Name
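Reloading the page by hand shows one response at a time. To see how requests are spread over several attempts, the responses can be tallied from the command line. The sketch below counts the "Server Host Name ::" lines printed by the modified snoop.jsp. The function name `tally_backends` is hypothetical, and the demonstration input is made up rather than captured from a real run.

```shell
#!/bin/sh
# Count how many responses came from each back end. Reads page bodies on
# standard input and looks for the line printed by the modified
# snoop.jsp: "Server Host Name :: <hostname>".
tally_backends() {
    sed -n 's/.*Server Host Name :: \([^ <]*\).*/\1/p' |
        sort | uniq -c |
        awk '{ print $2, $1 }'   # normalize to "hostname count"
}

# Demonstration with made-up input instead of live requests:
printf '%s\n' \
    'Server Host Name :: server1-zone' \
    'Server Host Name :: server2-zone' \
    'Server Host Name :: server1-zone' | tally_backends
```

Against the live setup, the input could come from a loop such as `i=0; while [ $i -lt 10 ]; do curl -s http://10.0.2.20/examples/jsp/snp/snoop.jsp; i=$((i+1)); done | tally_backends`. Because the rule was created as persistent (-p with pmask=32), repeated requests from a single client may all be served by the same back end.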

Step 11: Perform Health Checks

The simple ILB rule did not include any health checks on the server, so it is of limited use since we do not want the load balancer to feed requests to a dead server. Numerous health checking options are available: ping probes, TCP probes, UDP probes, and user-defined scripts. Since we are concerned about the health of our Apache Tomcat instances, let's write a simple health checking script, as shown in Listing 9:

root@ilb-zone:~# mkdir /opt/ilb

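root@ilb-zone:~# cat > /opt/ilb/hc-tomcat << 'EOF'
#!/usr/bin/bash
# $2 is the IP address of the back-end server being checked.
result=$(curl -s "http://$2:8080")
# Healthy if the response is non-empty and starts with "<meta".
if [ "$result" != "" ] && [ "${result:0:5}" = "<meta" ]; then
    echo 0
else
    echo -1
fi
EOF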

root@ilb-zone:~# chmod +x /opt/ilb/hc-tomcat

Listing 9. Health Checking Script

This script requests the Tomcat home page from the server with curl and checks whether the response begins with a <meta tag. It prints 0 on success and -1 on failure. Also notice in Listing 9 that there is a script variable $2. ILB provides a number of variables that can be used in scripts:

- $1: VIP address (literal IPv4 or IPv6 address)

- $2: Server IP address (literal IPv4 or IPv6 address)

- $3: Protocol (either UDP or TCP)

- $4: The load-balance mode (DSR, NAT, HALF-NAT)

- $5: Numeric port

- $6: Maximum time in seconds the script should wait before returning failure
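The script in Listing 9 hard-codes port 8080 and uses only $2. As a sketch of how the other variables might be used, the helper below builds the probe URL from the server address ($2) and port ($5) and wraps IPv6 literals in brackets. The function name `hc_url` is hypothetical, and the port value that ILB actually passes in $5 for a given rule is an assumption here, so test it before relying on it.

```shell
#!/bin/sh
# Build the URL to probe from the positional arguments that ILB passes
# to a health check script.

# hc_url VIP SERVER_IP PROTOCOL MODE PORT TIMEOUT
hc_url() {
    server=$2 port=$5
    case $server in
        # An IPv6 literal contains colons and needs brackets in a URL.
        *:*) printf 'http://[%s]:%s/\n' "$server" "$port" ;;
        *)   printf 'http://%s:%s/\n' "$server" "$port" ;;
    esac
}

hc_url 10.0.2.20 192.168.1.50 TCP HALF-NAT 8080 2
```

Inside a health check script, the helper could be combined with the timeout in $6, for example `result=$(curl -s -m "$6" "$(hc_url "$@")")`, so that a hung server does not stall the probe.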

We can quickly double-check that the script works by running it from ilb-zone against server1-zone (192.168.1.50) and confirming that it returns 0 for success, as follows:

root@ilb-zone:~# /opt/ilb/hc-tomcat 10.0.2.20 192.168.1.50

0

Once our script is running successfully, we can then associate the script with a health check rule using ilbadm, as follows:

root@ilb-zone:~# ilbadm create-healthcheck -h hc-test=/opt/ilb/hc-tomcat,hc-timeout=2,hc-count=1,hc-interval=10 hc-tomcat

root@ilb-zone:~# ilbadm show-healthcheck

HCNAME TIMEOUT COUNT INTERVAL DEF_PING TEST

hc-tomcat 2 1 10 Y /opt/ilb/hc-tomcat

In this case, our script will run once every 10 seconds, waiting a maximum of 2 seconds for a response. Once we have created this health check, we need to modify our existing ILB rule. ILB doesn't currently support modifying existing rules, so we will quickly delete the existing rule and create the new rule, as shown in Listing 10:

root@ilb-zone:~# ilbadm delete-rule tomcatrule_rr

root@ilb-zone:~# ilbadm create-rule -e -p -i vip=10.0.2.20,port=80 -m lbalg=rr,type=HALF-NAT,pmask=32 -h hc-name=hc-tomcat -o servergroup=tomcatgroup tomcatrule_rr

root@ilb-zone:~# ilbadm show-rule -f

RULENAME: tomcatrule_rr

STATUS: E

PORT: 80

PROTOCOL: tcp

LBALG: roundrobin

TYPE: HALF-NAT

PROXY-SRC: --

PMASK: /32

HC-NAME: hc-tomcat

HC-PORT: ANY

CONN-DRAIN: 0

NAT-TIMEOUT: 120

PERSIST-TIMEOUT: 0

SERVERGROUP: tomcatgroup

VIP: 10.0.2.20

SERVERS: _tomcatgroup.0,_tomcatgroup.1

Listing 10. Creating a New ILB Rule

Once the new rule has been created, the health check goes into immediate effect. We can quickly get a status of the health checking by using ilbadm show-hc-result, as follows:

root@ilb-zone:~# ilbadm show-hc-result

RULENAME HCNAME SERVERID STATUS FAIL LAST NEXT RTT

tomcatrule_rr hc-tomcat _tomcatgroup.0 alive 0 11:01:18 11:01:23 739

tomcatrule_rr hc-tomcat _tomcatgroup.1 alive 0 11:01:20 11:01:34 1111

As we can see above, everything is working well and both servers are responding. But what happens if one of the servers goes down? Let's quickly disable the Apache Tomcat instance in server1-zone and see what happens.

root@server1-zone:~# svcadm disable http:tomcat6

Let's check the status again from the ilb-zone.

root@ilb-zone:~# ilbadm show-hc-result

RULENAME HCNAME SERVERID STATUS FAIL LAST NEXT RTT

tomcatrule_rr hc-tomcat _tomcatgroup.0 dead 63 11:22:43 11:22:50 941

tomcatrule_rr hc-tomcat _tomcatgroup.1 alive 0 11:22:30 11:22:45 952

All requests are directed to the Apache Tomcat instance on server2-zone.
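For unattended monitoring, the same table can be filtered so that only failed servers are reported. The sketch below assumes the column order shown above (the fourth column is STATUS and the third is SERVERID), and the function name `dead_servers` is hypothetical.

```shell
#!/bin/sh
# List the server IDs that a health check currently reports as dead.
# Reads the output of "ilbadm show-hc-result" on standard input.
dead_servers() {
    # Skip the header line, then print SERVERID where STATUS is "dead".
    awk 'NR > 1 && $4 == "dead" { print $3 }'
}

# Demonstration with the output shown above:
dead_servers <<'EOF'
RULENAME HCNAME SERVERID STATUS FAIL LAST NEXT RTT
tomcatrule_rr hc-tomcat _tomcatgroup.0 dead 63 11:22:43 11:22:50 941
tomcatrule_rr hc-tomcat _tomcatgroup.1 alive 0 11:22:30 11:22:45 952
EOF
```

In ilb-zone this would be run as `ilbadm show-hc-result | dead_servers`. Empty output means every server passed its most recent check.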

Summary

Integrated Load Balancer (ILB), a built-in feature of Oracle Solaris 11, provides Layer 3 and Layer 4 load-balancing capabilities. ILB can be a powerful way of adding resilience to your applications because it intercepts incoming requests from clients, decides which back-end server should handle the requests based on a set of load-balancing rules, and forwards the requests to the selected server.

See Also

See the Integrated Load Balancer product documentation.

Here are additional Oracle Solaris 11 resources:

- Download Oracle Solaris 11

- Access Oracle Solaris 11 product documentation

- Access all Oracle Solaris 11 how-to articles

- Learn more with Oracle Solaris 11 training and support

- See the official Oracle Solaris blog

- Check out The Observatory blog for Oracle Solaris tips and tricks

- Follow Oracle Solaris on Facebook and Twitter

Revision 1.0, 06/04/2012