Oracle AI Database Private Agent Factory

Build and deploy no-code AI agents in minutes. Use a visual interface to orchestrate data-centric workflows on-prem or in the cloud without ever sharing your data with third parties or model providers.

Private Agent Factory Quick Start

Install Private Agent Factory on OCI IaaS or on-prem to get started today

Build and deploy enterprise-ready AI agents and workflow automations

Knowledge-based AI Agents

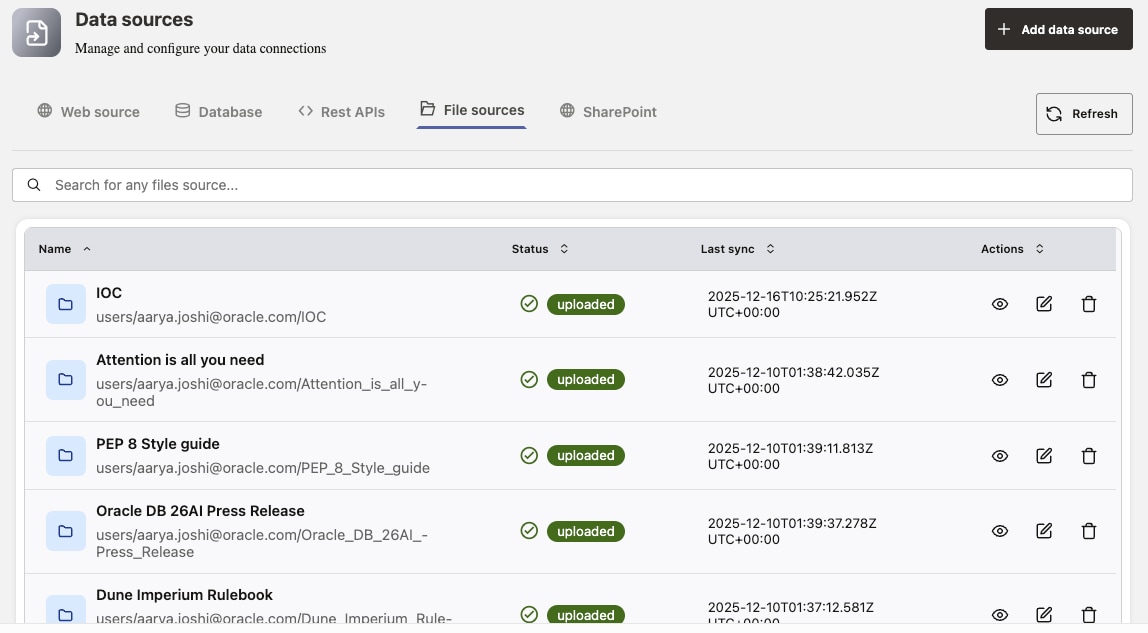

Augment AI Vector Search and LLM capabilities with enterprise data, enabling context-rich responses by retrieving relevant information from internal knowledge bases and documents, as well as external sources like SharePoint, websites, file systems, and Google Drive.

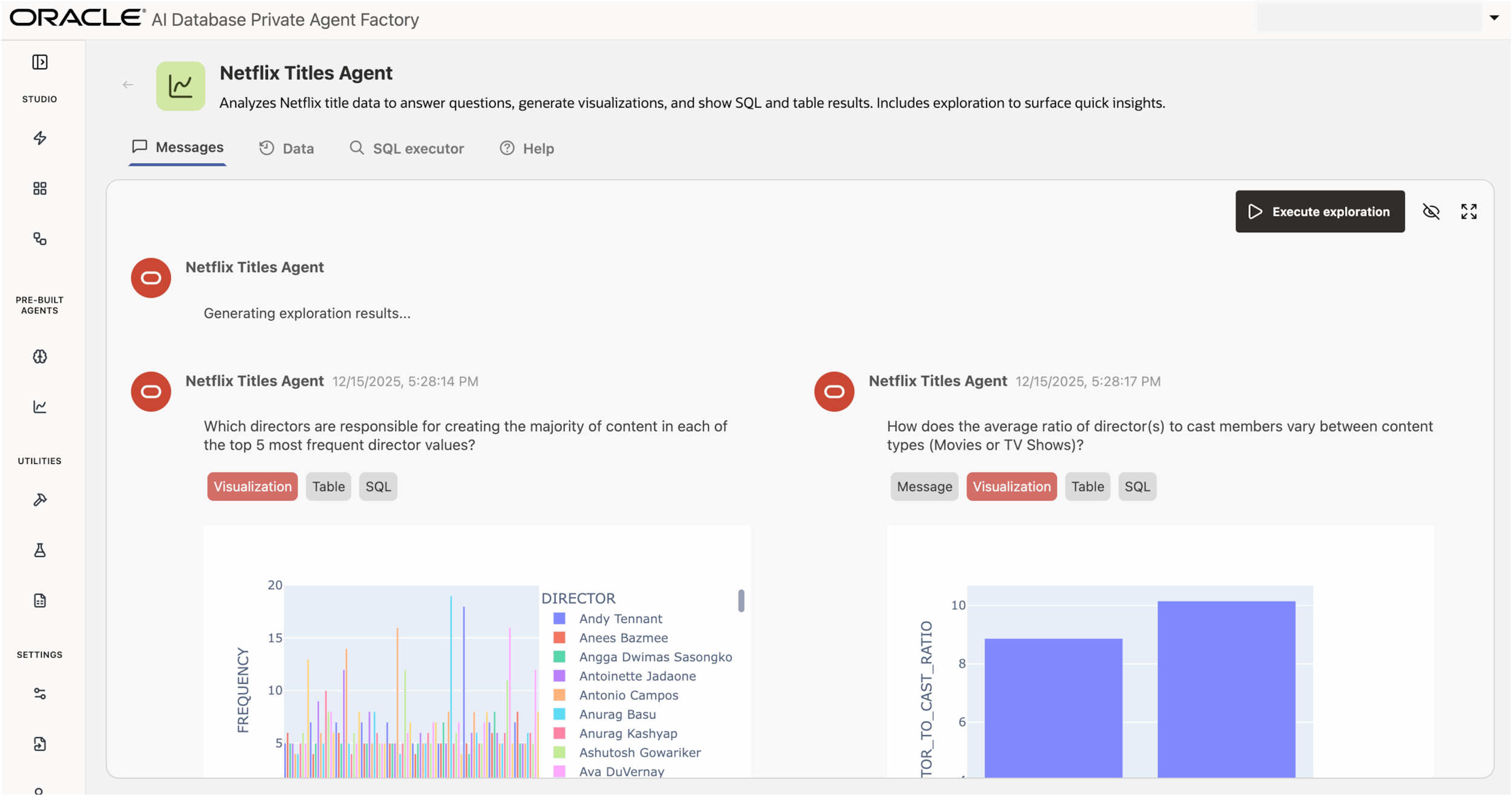

Data Analysis Agent

Analyze schemas, generate questions and explanations, and auto‑build visualizations on Oracle AI Database 19c+ while the LLM reasons over metadata.

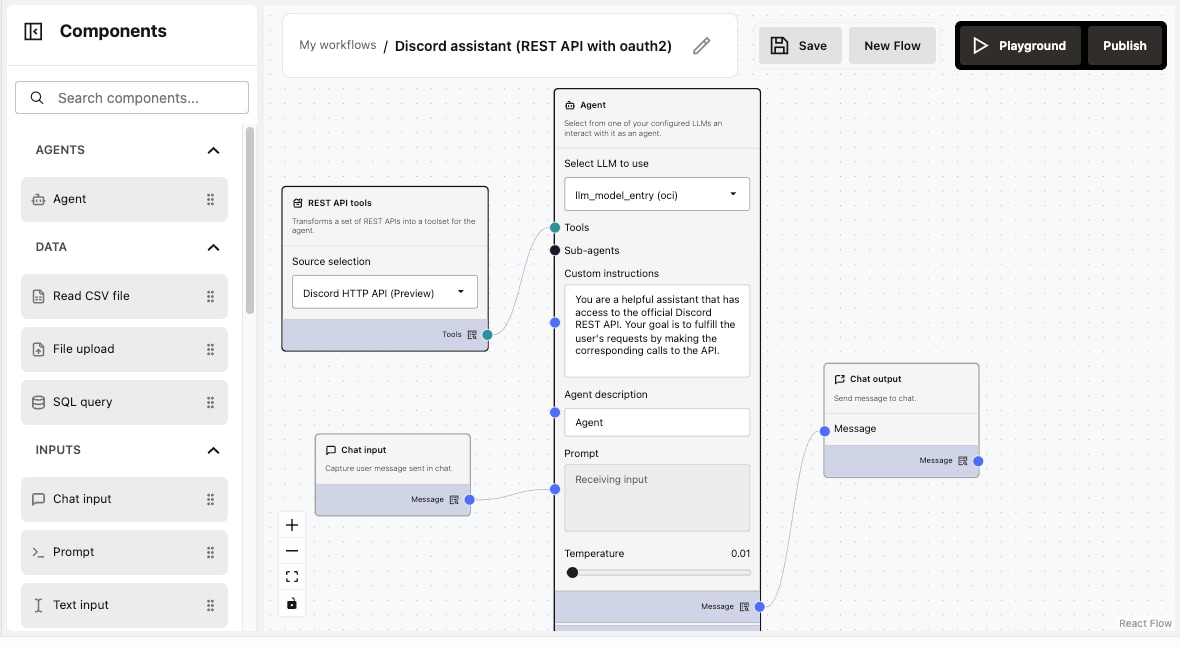

No‑code Agent Builder

Build AI agents from scratch without coding using drag‑and‑drop nodes, templates, live testing, and built‑in guardrails.

AI workflow automation

Orchestrate LLMs, SQL, vector search, and OpenAPI/MCP tools to automate end‑to‑end tasks, then expose agents via AgentURL and SDKs for easy app integration.

Security and governance

Protect access with SSO and role management, enforce prompt guardrails, and enable grounded, source‑linked responses with built‑in evaluation before production.

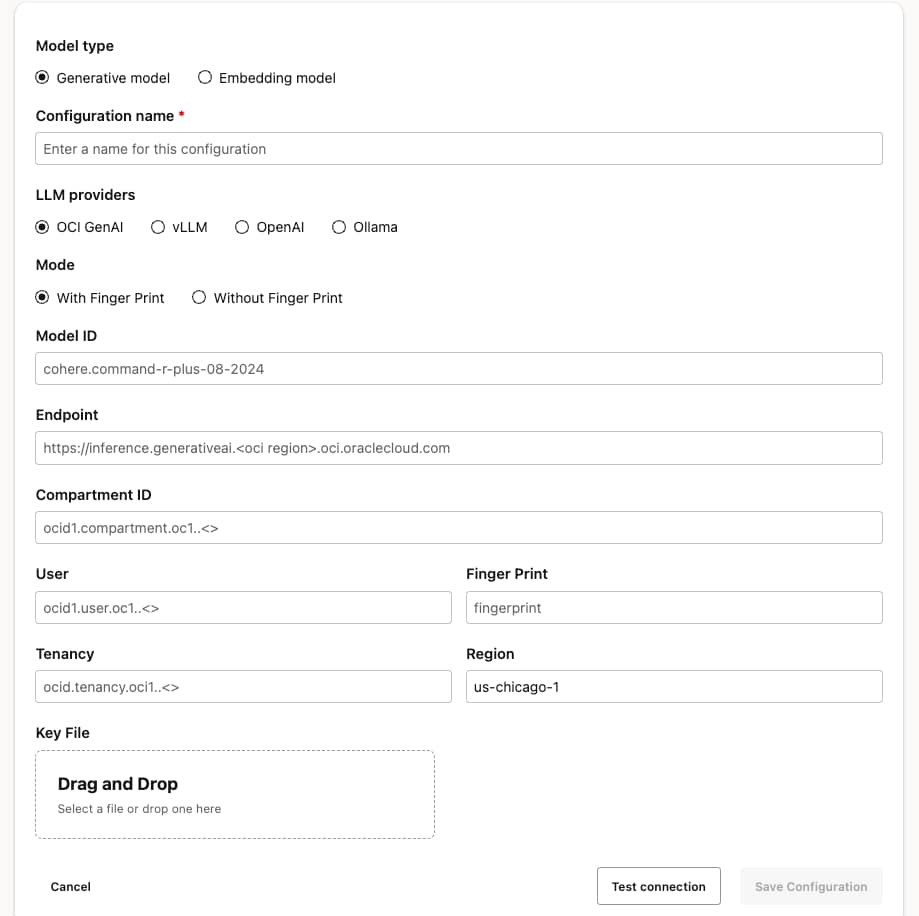

Models and interoperability

Choose your model (OCI GenAI, Llama, OpenAI, Cohere, Grok, Ollama, vLLM) and import/export with Agent Spec to reuse LangGraph, AutoGen, or CrewAI work.

In the high-stakes world of enterprise procurement, contract renewals are often a significant bottleneck. Manually reviewing outdated agreements, cross-referencing shifting market prices, and formatting data for Oracle E-Business Suite (EBS) interface tables can be a tedious, error-prone process.

With Private Agent Factory, you can build an agent that automates that flow end to end: it uses existing purchase agreement documents as the source for line items, pulls the latest unit prices from the EBS database via an MCP server, applies approved template language, and generates a ready-to-use CSV file for EBS.
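As a rough illustration of the final step in that flow, the sketch below merges extracted line items with refreshed unit prices and writes CSV text. It is a minimal sketch, not the product's implementation: the function name `build_renewal_csv`, the dictionary keys, and the column names are all hypothetical and do not correspond to real EBS interface table columns.

```python
import csv
import io
from decimal import Decimal


def build_renewal_csv(line_items, latest_prices):
    """Merge agreement line items with refreshed unit prices into CSV text.

    line_items: list of dicts extracted from the old agreement (hypothetical shape).
    latest_prices: dict mapping item number to the latest unit price string.
    """
    buffer = io.StringIO()
    writer = csv.writer(buffer, lineterminator="\n")
    # Hypothetical column names, chosen only for illustration.
    writer.writerow(["ITEM_NUMBER", "DESCRIPTION", "QUANTITY", "UNIT_PRICE", "LINE_AMOUNT"])
    for item in line_items:
        # Fall back to the price in the old agreement when no fresh price exists.
        price = latest_prices.get(item["item_number"], item["unit_price"])
        # Decimal avoids binary floating-point rounding in monetary amounts.
        amount = Decimal(price) * item["quantity"]
        writer.writerow(
            [item["item_number"], item["description"], item["quantity"], price, amount]
        )
    return buffer.getvalue()


if __name__ == "__main__":
    items = [
        {"item_number": "A-100", "description": "Widget", "quantity": 10, "unit_price": "12.00"},
        {"item_number": "B-200", "description": "Gasket", "quantity": 4, "unit_price": "2.50"},
    ]
    print(build_renewal_csv(items, {"A-100": "12.50"}))
```

In an actual agent, the line items would come from the document extraction step and the prices from the MCP server; here both are plain Python values so the merge logic can be read in isolation.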

Every Oracle database generates Automatic Workload Repository (AWR) reports — often 100+ pages of detailed performance diagnostics.

Traditionally, a DBA reviews these reports manually, and only a subset is properly analyzed. As a result, insights may arrive after performance degradation has already occurred, if at all. Now an AI agent can produce this analysis.

This is true operational leverage; however, it also increases the attack surface. Without enterprise tooling, a DBA might upload the report to a third-party AI tool. Even if a report is pasted just once, that information could be leaked.

Generating Cloud test cases and fully executable test scripts on the fly from diverse inputs, such as development handoff to QA (DHQA) documents, reported bugs, internal knowledge bases, and ongoing user prompts, is a hard problem that agents can help solve. In enterprise environments, scripts need to run with user-provided inputs, execute pre-checks, validate critical operations, and capture outputs into structured logs. Each request spans multiple cloud services, demands asynchronous tool execution, and must preserve enterprise compliance.
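The shape of such a script (inputs, pre-checks, a validated operation, and a structured log) might look like the sketch below. This is an assumption-laden illustration rather than anything the product generates: `run_test_case` and the log field names are hypothetical.

```python
import json
from datetime import datetime, timezone


def run_test_case(name, inputs, pre_checks, operation, validate):
    """Run one test case and return a structured, JSON-serializable log."""
    log = {
        "test": name,
        "inputs": inputs,
        "started_at": datetime.now(timezone.utc).isoformat(),
        "steps": [],
    }
    # Pre-checks run first; the first failure skips the operation entirely.
    for check in pre_checks:
        ok = bool(check(inputs))
        log["steps"].append({"step": f"pre-check:{check.__name__}", "passed": ok})
        if not ok:
            log["status"] = "skipped"
            return log
    try:
        output = operation(inputs)
        passed = bool(validate(output))
        log["steps"].append({"step": "operation", "passed": passed, "output": output})
        log["status"] = "passed" if passed else "failed"
    except Exception as exc:  # capture the failure in the log instead of crashing
        log["steps"].append({"step": "operation", "passed": False, "error": repr(exc)})
        log["status"] = "error"
    return log


if __name__ == "__main__":
    def has_region(inputs):
        return "region" in inputs

    result = run_test_case(
        "create-bucket",
        {"region": "us-east", "bucket": "demo"},
        [has_region],
        lambda i: {"created": i["bucket"]},
        lambda out: out.get("created") == "demo",
    )
    print(json.dumps(result, indent=2))
```

Returning the log as plain data keeps the runner independent of any particular logging backend; the caller decides whether to print it, store it, or hand it back to an agent.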

Features of Agent Factory

Knowledge Based AI Agents

Knowledge Agents deliver “RAG‑in‑a‑box” by retrieving trusted content from SharePoint, websites, file systems, and OCI Object Storage using Oracle AI Vector Search, so every answer is grounded and traceable.
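To make the retrieve-then-ground idea concrete, here is a minimal in-memory sketch. It does not use Oracle AI Vector Search or any product API; the embeddings are tiny hand-written vectors, and `retrieve` and `build_grounded_prompt` are hypothetical helper names. It only shows how ranking by similarity and carrying the source along with each chunk makes an answer traceable.

```python
import math


def cosine_similarity(a, b):
    """Cosine similarity of two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def retrieve(query_vector, chunks, top_k=2):
    """Return the top_k chunks most similar to the query vector."""
    ranked = sorted(
        chunks,
        key=lambda c: cosine_similarity(query_vector, c["embedding"]),
        reverse=True,
    )
    return ranked[:top_k]


def build_grounded_prompt(question, hits):
    """Number each retrieved chunk and keep its source so answers can cite it."""
    context = "\n".join(
        f"[{i}] {h['text']} (source: {h['source']})"
        for i, h in enumerate(hits, start=1)
    )
    return (
        "Answer using only the numbered context and cite sources.\n"
        f"{context}\nQuestion: {question}"
    )


if __name__ == "__main__":
    chunks = [
        {"text": "Refunds take 5 days.", "source": "policy.pdf", "embedding": [1.0, 0.0]},
        {"text": "Office opens at 9.", "source": "hours.html", "embedding": [0.0, 1.0]},
        {"text": "Refunds need a receipt.", "source": "faq.docx", "embedding": [0.9, 0.1]},
    ]
    hits = retrieve([1.0, 0.0], chunks)
    print(build_grounded_prompt("How long do refunds take?", hits))
```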

Data Analysis Agent

This schema‑aware assistant for Oracle AI Database 19c+ generates relevant questions, explanations, and automatic visualizations while keeping raw data private from the LLM.

Agent Builder

The no‑code canvas lets teams create agents and workflow automations with LLM/agent nodes, SQL, file and CSV inputs, prompt and I/O nodes, MCP Server, and OpenAPI tool creation, plus Prompt Lab and Open Agent Spec import/export.

Data and Tools

Connect Oracle AI Database, SharePoint, websites, file systems, and OCI Object Storage; upload OpenAPI 2.0/3.0 JSON or integrate MCP servers to auto‑create tools and orchestrate SQL and REST in one flow.
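One way to picture tool auto-creation from an OpenAPI document is to walk its `paths` object and emit one tool description per operation. The sketch below does that for an already-parsed spec; the function name `tools_from_openapi` and the output fields are hypothetical and are not the product's tool format.

```python
# HTTP method keys that may appear under a path item in OpenAPI 2.0/3.0.
HTTP_METHODS = {"get", "put", "post", "delete", "patch", "head", "options", "trace"}


def tools_from_openapi(spec):
    """Turn each operation in a parsed OpenAPI dict into a simple tool description."""
    tools = []
    for path, path_item in spec.get("paths", {}).items():
        for method, operation in path_item.items():
            if method not in HTTP_METHODS:
                continue  # skip non-operation keys such as "parameters" or "summary"
            tools.append(
                {
                    # operationId is optional in OpenAPI, so fall back to method + path.
                    "name": operation.get("operationId", f"{method}_{path}"),
                    "method": method.upper(),
                    "path": path,
                    "description": operation.get("summary", ""),
                    # $ref parameters have no inline name and are skipped in this sketch.
                    "parameters": [
                        p["name"] for p in operation.get("parameters", []) if "name" in p
                    ],
                }
            )
    return tools


if __name__ == "__main__":
    spec = {
        "openapi": "3.0.0",
        "paths": {
            "/orders/{id}": {
                "parameters": [{"name": "id", "in": "path"}],
                "get": {
                    "operationId": "getOrder",
                    "summary": "Fetch one order",
                    "parameters": [{"name": "id", "in": "path"}],
                },
                "delete": {"summary": "Cancel an order"},
            }
        },
    }
    for tool in tools_from_openapi(spec):
        print(tool)
```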

Prompt Lab

Prompt Lab enables rapid prompt experimentation across models, side‑by‑side comparison, and prompt libraries, integrated with Agent Builder and governed by guardrails and roles. This helps accelerate quality, standardize best practices, and de‑risk deployments.

Models

Select the best model per task or cost profile (OCI Generative AI, Meta Llama, Cohere Command, OpenAI gpt‑4o family, Grok, Ollama, or vLLM), and compare outputs in Prompt Lab.

Security and Governance

Enterprise SSO and roles, prompt guardrails, grounded responses with source links, and embedded evaluation (LLM‑as‑a‑judge plus human review) help teams optimize quality before and after launch.

Embedded evaluation provides metrics and diagnostics to tune prompts, models, and workflows before production, improving reliability at scale.
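As a hedged illustration of how LLM-as-a-judge scores and human review could be combined into simple metrics, consider the sketch below. The record shape, the thresholds, and the function `summarize_evaluation` are assumptions made for this example and do not describe the product's evaluation output.

```python
from statistics import mean


def summarize_evaluation(records, pass_threshold=0.7, disagreement_gap=0.3):
    """Summarize judge scores and flag answers where judge and human disagree.

    Each record is a dict with "id", "judge_score" (0..1), and an optional
    "human_score" (0..1, or None when no human has reviewed the answer).
    """
    judge_scores = [r["judge_score"] for r in records]
    disagreements = [
        r["id"]
        for r in records
        if r.get("human_score") is not None
        and abs(r["judge_score"] - r["human_score"]) > disagreement_gap
    ]
    return {
        "mean_judge_score": round(mean(judge_scores), 3),
        "pass_rate": sum(s >= pass_threshold for s in judge_scores) / len(judge_scores),
        # Large gaps suggest the judge prompt or the rubric needs tuning.
        "judge_human_disagreements": disagreements,
    }


if __name__ == "__main__":
    records = [
        {"id": "q1", "judge_score": 0.9, "human_score": 0.8},
        {"id": "q2", "judge_score": 0.8, "human_score": 0.2},
        {"id": "q3", "judge_score": 0.4, "human_score": None},
    ]
    print(summarize_evaluation(records))
```

Flagging disagreements rather than averaging the two scores keeps the human reviewer as the tiebreaker, which is one reasonable way to use human review alongside an automated judge.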

Why choose Oracle AI Database Agent Factory

Support mission-critical enterprise environments with security, governance, and trust requirements. Agent Factory installs on-premises and in air-gapped environments, as well as on cloud IaaS in your own tenancy. Run models locally to keep data private within the enterprise.

Simple

A no‑code platform to build custom AI agents fast, with prebuilt Knowledge Agents and a Data Analysis Agent, templates, and live testing that reduce time to value.

Flexible

Add OpenAPI and MCP tools; choose models including OCI GenAI, Llama, OpenAI, Cohere, Grok, Ollama, and vLLM; import/export via Agent Spec; serve multiple types of AI agents across teams.

Powerful

Leverage the full power of Oracle AI Database and bring AI to your data with AI Vector Search and built-in RAG pipelines, rich analytic SQL, Documents, Spatial, Graph, and more. This works with Oracle Database 19c data, and you can also access data in repositories such as SharePoint and Google Drive.

Multilevel security and governance

Enterprise SSO and roles, prompt guardrails, grounded and traceable responses, and embedded evaluation (LLM‑as‑a‑judge plus human review) to help optimize before production.

Performance at scale

In‑database vector search, optimized retrieval, and Oracle AI Database integration deliver low‑latency, resilient, scalable agent experiences. Agent Factory deploys containers with scalable runtimes managed by Kubernetes.

Privacy

Install on-premises, in air-gapped environments, or on IaaS in your own tenancy, and run models locally, so your data is never shared with third parties or model providers.