Vector Embedding Service

The Private AI Services Container Vector Embedding Service generates vector embeddings.

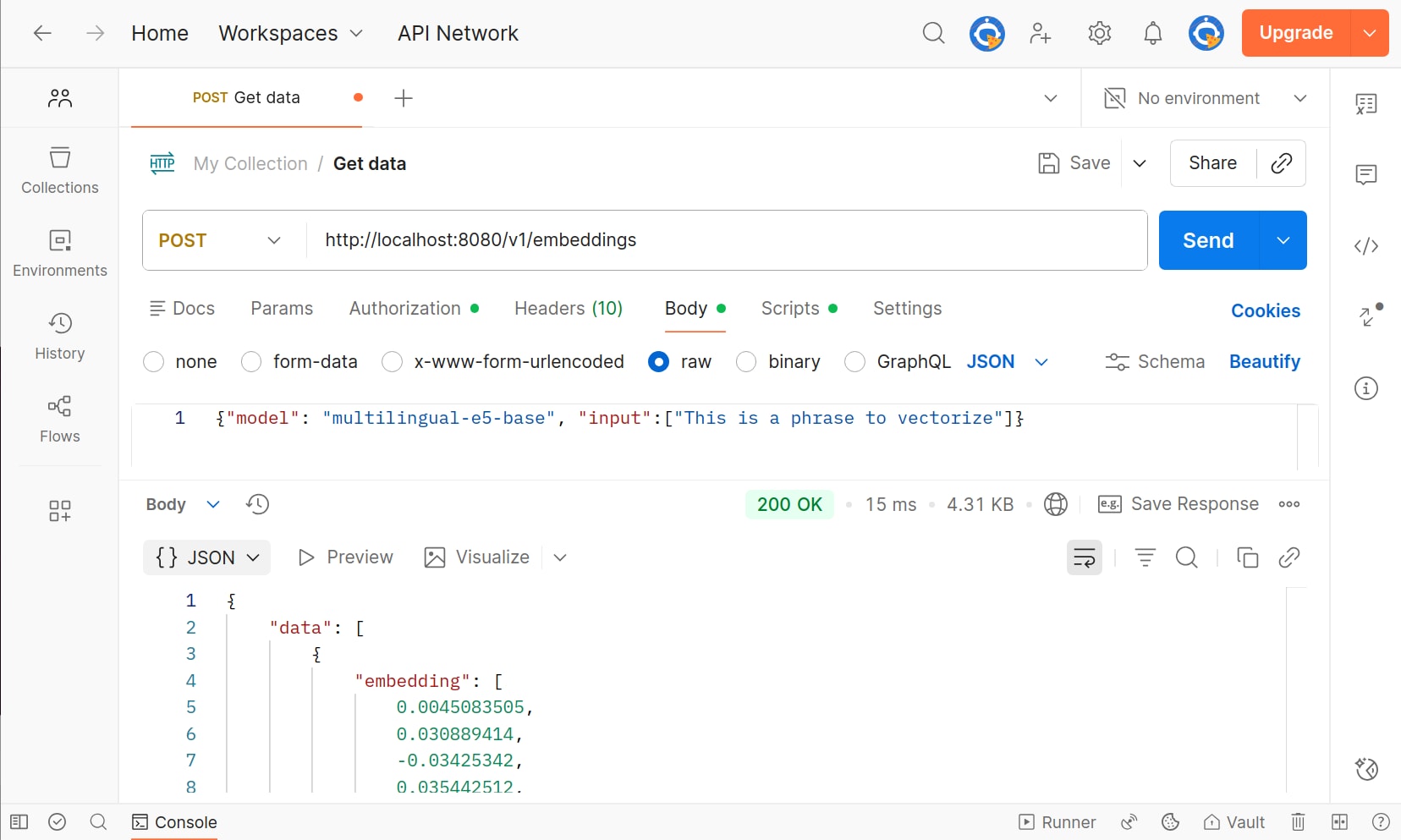

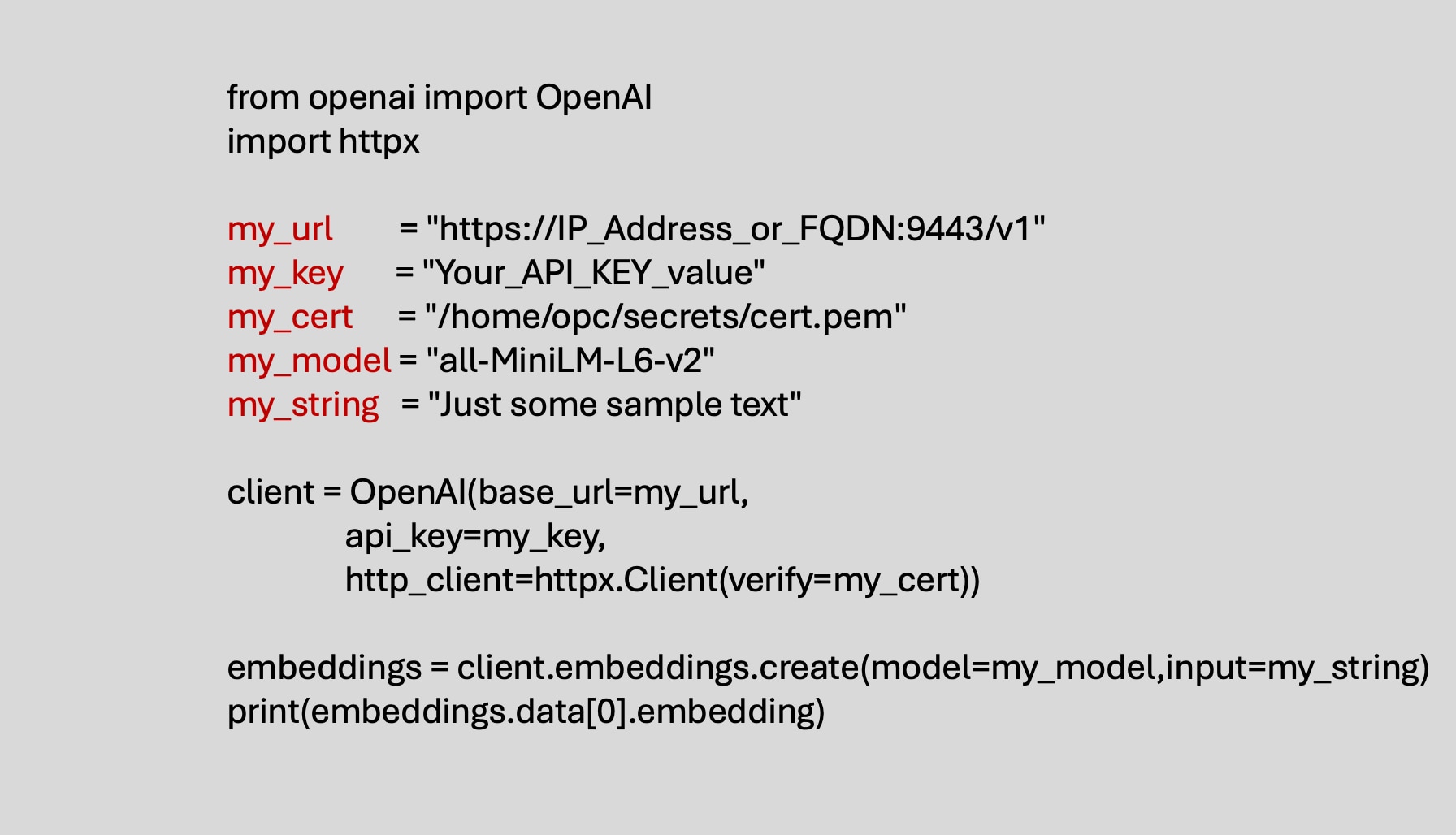

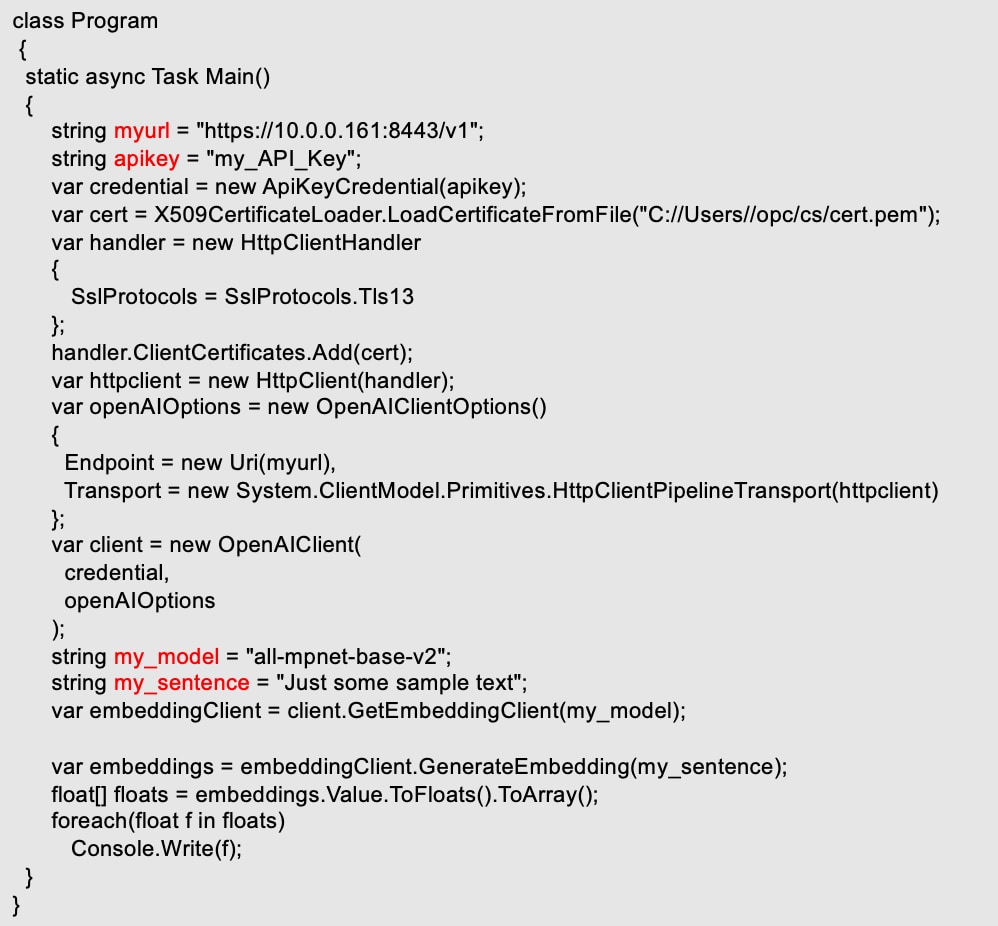

The container is a REST server that implements the OpenAI API protocol and creates vectors through the /v1/embeddings endpoint. The endpoint URL, API key and model name determine the type of vectors that are created.
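Because the container speaks the OpenAI protocol, an embedding request is a JSON POST carrying the model name and the input text. The Python sketch below only builds such a request with the standard library and does not send it. The base URL, port, API key and model name are hypothetical placeholders, and the `Authorization: Bearer` header is the OpenAI convention, assumed here to apply to the container as well.

```python
import json
import urllib.request

# Hypothetical placeholders: host/port, key and model name are NOT documented defaults.
BASE_URL = "http://localhost:8080"
API_KEY = "my-api-key"
MODEL = "my-embedding-model"


def build_embedding_request(base_url, api_key, model, texts):
    """Build (but do not send) an OpenAI-style POST to the /v1/embeddings endpoint."""
    body = json.dumps({"model": model, "input": texts}).encode("utf-8")
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/v1/embeddings",
        data=body,
        method="POST",
        headers={
            "Content-Type": "application/json",
            # Assumption: the API key is passed as an OpenAI-style bearer token.
            "Authorization": f"Bearer {api_key}",
        },
    )


req = build_embedding_request(BASE_URL, API_KEY, MODEL, ["What is a vector?"])
print(req.get_method(), req.full_url)

# To call a running container, send the request and decode the JSON reply:
# with urllib.request.urlopen(req) as resp:
#     payload = json.load(resp)
```

Swapping the base URL, key and model name is all it takes to point the same request at a different OpenAI-compatible endpoint, which is why those three values decide which vectors come back.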

REST clients include Oracle AI Database 26ai, OpenAI API clients, Postman and curl.

Vector Embedding Models

What is a Vector

A vector (or vector embedding) is an array of numbers. The numbers are usually floating point (float32 or float64), but they could also be integer (INT8) or binary. The numbers represent the meaning (or semantics) of the unstructured data, whether that data is text, images, audio, video or DNA. The distance between two vectors measures their relatedness. Small distances suggest high relatedness and large distances suggest low relatedness.
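To make "distance measures relatedness" concrete, the Python sketch below computes cosine distance, one common choice of metric, between toy 3-dimensional vectors. The vectors and their labels are made up for illustration; real embeddings have hundreds or thousands of dimensions and come from a model.

```python
import math


def cosine_distance(a, b):
    """Return 1 - cosine similarity: 0 means same direction, larger means less related."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same number of dimensions")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("cosine distance is undefined for a zero vector")
    return 1.0 - dot / (norm_a * norm_b)


# Hypothetical toy embeddings.
cat = [0.9, 0.1, 0.0]
kitten = [0.8, 0.2, 0.1]
invoice = [0.0, 0.1, 0.9]

print(cosine_distance(cat, kitten))   # small distance: related
print(cosine_distance(cat, invoice))  # large distance: unrelated
```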

What is a Vector Embedding Model

Vector embedding models are AI models that convert unstructured data into a vector. They are pre-trained neural networks, and most are some form of transformer. Sentence Transformers and Vision Transformers are examples.
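A transformer produces one output vector per token, so a text model still has to reduce those to a single vector. Many Sentence Transformer models do this by mean pooling, often followed by L2 normalization. The Python sketch below shows only that final pooling step; the token vectors are made-up stand-ins for real model output, and the pooling strategy a particular model uses (mean, CLS token or something else) depends on that model.

```python
import math


def mean_pool(token_vectors):
    """Average per-token vectors into one fixed-length vector."""
    dims = len(token_vectors[0])
    count = len(token_vectors)
    return [sum(tok[i] for tok in token_vectors) / count for i in range(dims)]


def l2_normalize(vec):
    """Scale a vector to unit length, so dot product equals cosine similarity."""
    norm = math.sqrt(sum(x * x for x in vec))
    return [x / norm for x in vec]


# Hypothetical transformer output: one 4-dimensional vector per token of "red apple".
token_outputs = [
    [1.0, 0.0, 2.0, 0.0],  # "red"
    [3.0, 0.0, 2.0, 0.0],  # "apple"
]

sentence_vector = l2_normalize(mean_pool(token_outputs))
print(sentence_vector)
```

Whatever the input length, the result has the model's fixed dimension count, which is what lets every text be compared with every other.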

Models that ship with the Private AI Services Container

| Model Name | Description | Must build Pipeline ONNX Model |
|---|---|---|

Other models that you can use

Model Name

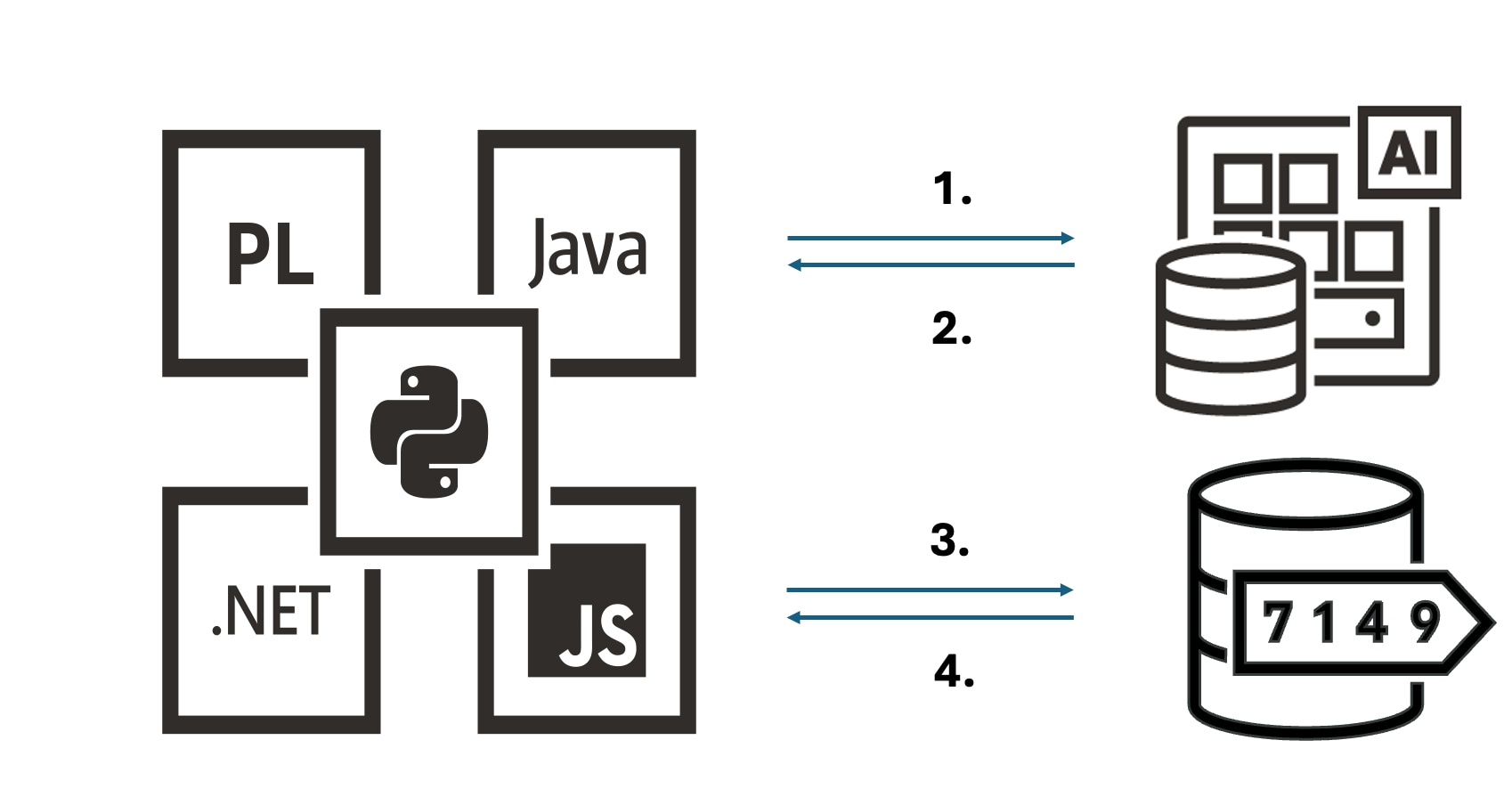

Clients using vector embedding models in the container

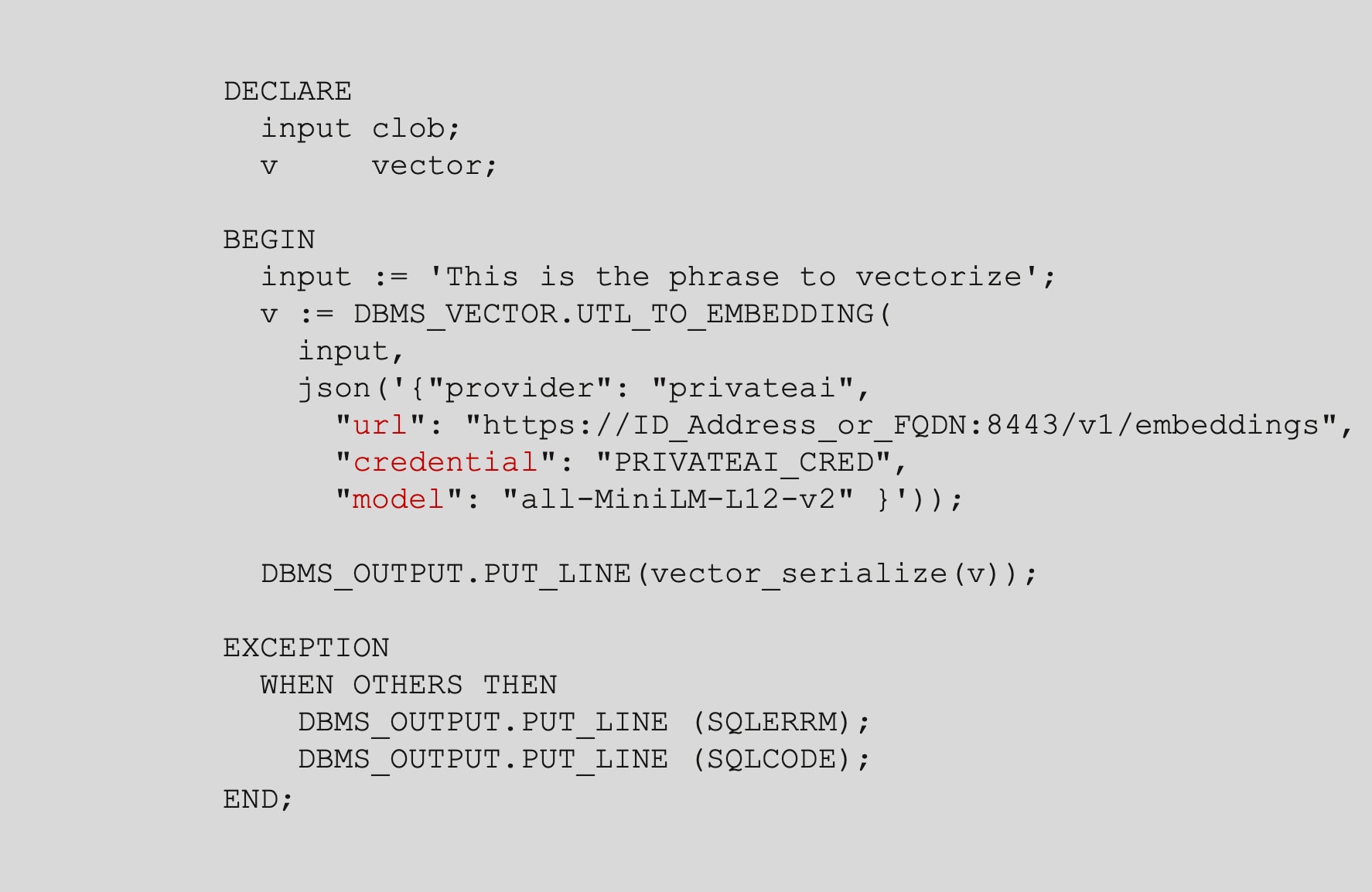

PL/SQL REST call

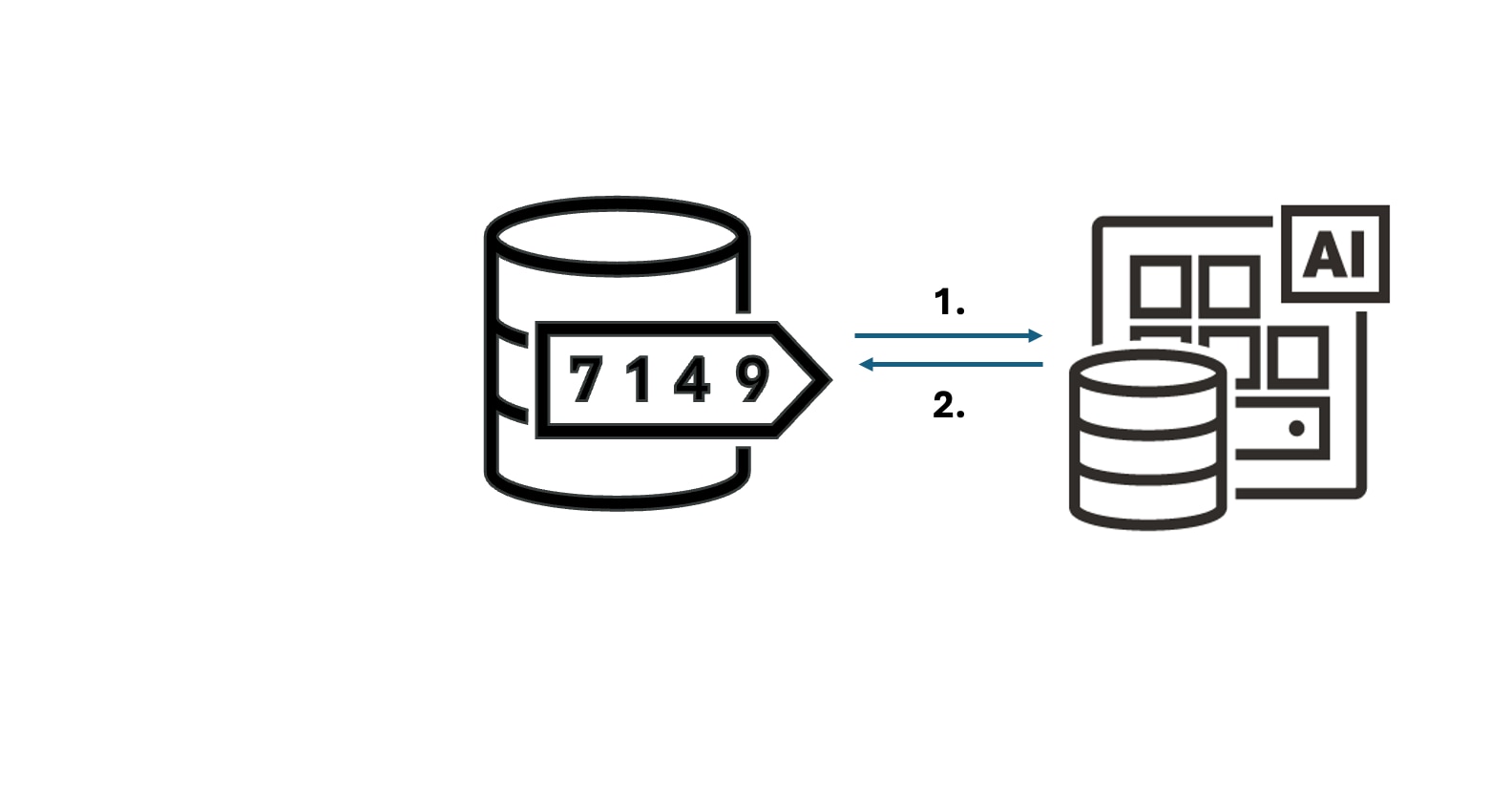

The PL/SQL function UTL_TO_EMBEDDING in the DBMS_VECTOR package can make a REST call to an endpoint such as openai.com, cohere.com, OCI GenAI Services, or the Private AI Services Container.

The URL, credential (API key) and model determine which vector embedding model is used to create the vectors.

Under the covers, UTL_TO_EMBEDDING sends an HTTPS POST request to the /v1/embeddings endpoint.
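Assuming the endpoint replies in the standard OpenAI embeddings format, each vector arrives under `data[i].embedding`, with `index` recording which input it belongs to. The Python sketch below unpacks such a response. It is not how DBMS_VECTOR is implemented; it only illustrates the response shape any client of the endpoint has to handle. The sample body, its numbers and the model name are hypothetical.

```python
import json

# Hypothetical response body in the OpenAI embeddings format (values made up,
# and far shorter than a real embedding).
SAMPLE_RESPONSE = """
{
  "object": "list",
  "data": [
    {"object": "embedding", "index": 0, "embedding": [0.12, -0.48, 0.33]}
  ],
  "model": "my-embedding-model",
  "usage": {"prompt_tokens": 4, "total_tokens": 4}
}
"""


def extract_embeddings(body):
    """Return the vectors from an OpenAI-style /v1/embeddings response, in input order."""
    payload = json.loads(body)
    # Sort by "index" so vectors line up with the inputs that were sent.
    items = sorted(payload["data"], key=lambda item: item["index"])
    return [item["embedding"] for item in items]


vectors = extract_embeddings(SAMPLE_RESPONSE)
print(len(vectors), len(vectors[0]))
```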

Use Cases

ONNX in the Database

Vector embeddings are created inside Oracle AI Database 26ai.

The SQL function vector_embedding is used to create the vectors.

The Private AI Services Container is not needed in this scenario.

CPU utilization in Oracle AI Database 26ai increases because the database itself must create both the data vectors and the query vectors.