Oracle Private AI Services Container Use Cases

The Oracle Private AI Services Container is a robust, secure AI infrastructure that helps regulated organizations, and those with stringent security requirements, address common compliance and auditability challenges of AI model deployments. These organizations can run private instances of AI models and avoid sharing data with third-party AI providers. The solution also mitigates performance bottlenecks: customers can securely offload compute-intensive AI tasks, such as vector embedding generation, from the database to the container while keeping all data within their environment. The container can be deployed within the customer’s tenancy in the public cloud, on private clouds, or on-premises, including in air-gapped environments.

Deployment Use Cases

Database Offload

Similarity search and RAG (Retrieval Augmented Generation) require vectors to work:

- Data vectors need to be created for all of your unstructured data of interest

- Query vectors need to be created for every similarity search query

Creating a vector is a CPU-intensive operation because it requires large matrix multiplications. The time taken to create a vector can vary from a few milliseconds to seconds depending on the size of the vector.

Oracle AI Database 26ai can create vectors in the database using its CPU cores. If too many database connections create vectors at the same time, the database will become CPU bound and performance will suffer.

The Private AI Services Container can create vectors using multi-threading on multi-core CPUs (and on multiple containers). If data and query vectors can be created on the Private AI Services Container, the Oracle AI Database server can free up CPU cycles for database operations.

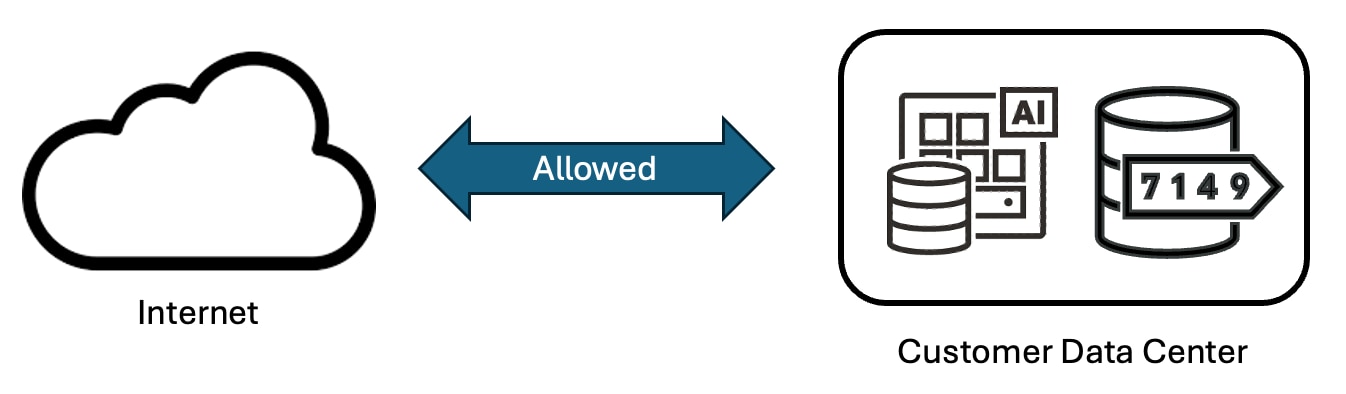

On-Premises Deployment

Many customers require their data and applications to reside in their data centers. It is usually possible for applications within the data center to make network requests to the internet.

Oracle Private AI Services Container can run in a customer's data center. Network requests to download additional embedding models or software such as the container runtime are allowed.

Once the container runtime (Podman, Kubernetes, or OpenShift) and any additional embedding models are installed, the Private AI Services Container no longer needs access to the internet.

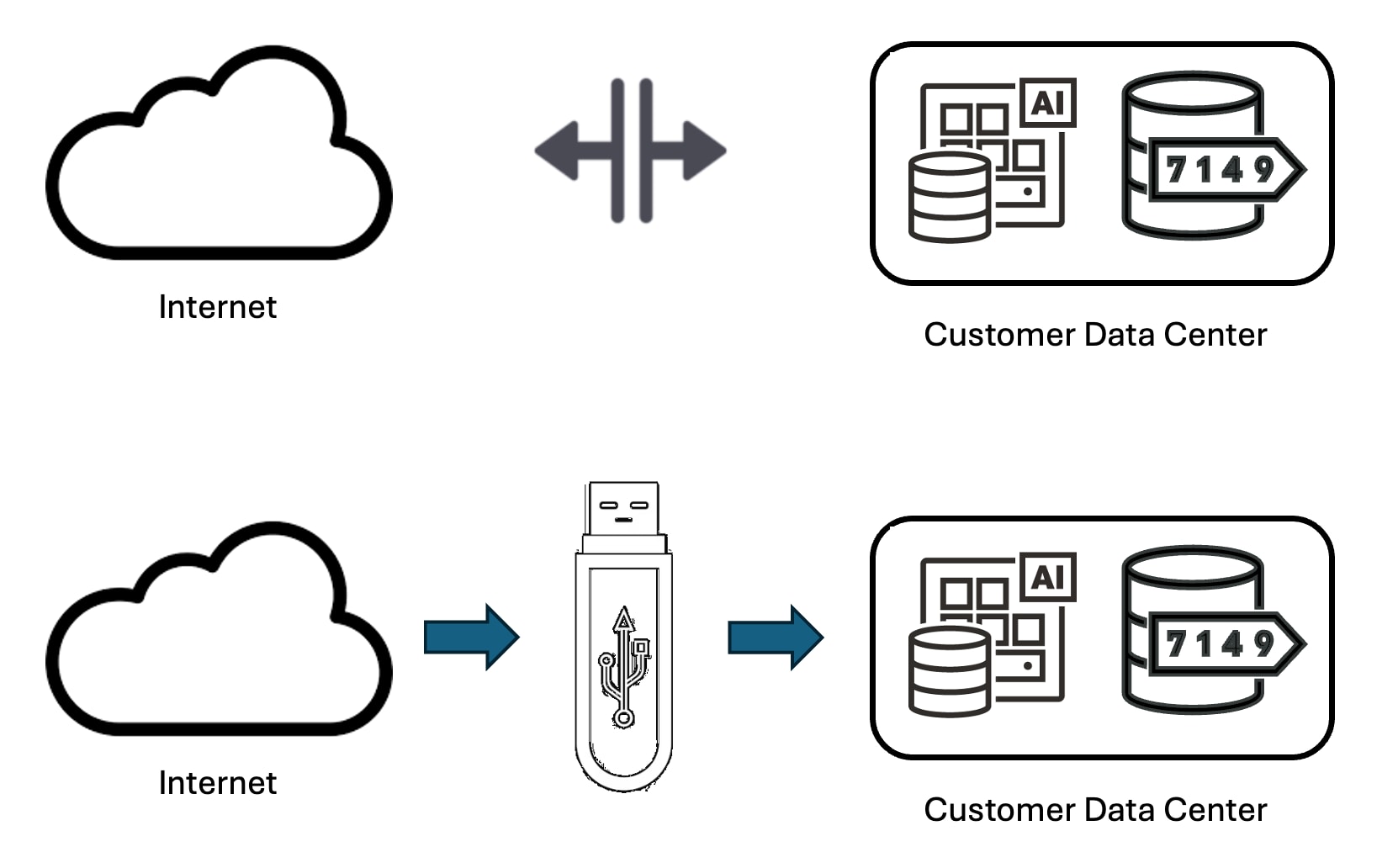

Air Gapped

In an air-gapped environment, there is no network connectivity to the internet. This network isolation enables a greater level of security, but it makes it harder to install software within the air-gapped system.

Adding new software to an air-gapped system usually means that you need to use some form of removable media (like a USB drive) to physically copy the files into the air-gapped system. To run Oracle Private AI Services Container in an air-gapped system, you need to copy in the files for:

- The Private AI Services Container (as a TAR file)

- The container runtime (Podman or Kubernetes)

- Any vector embedding models needed beyond the six models that ship with the container

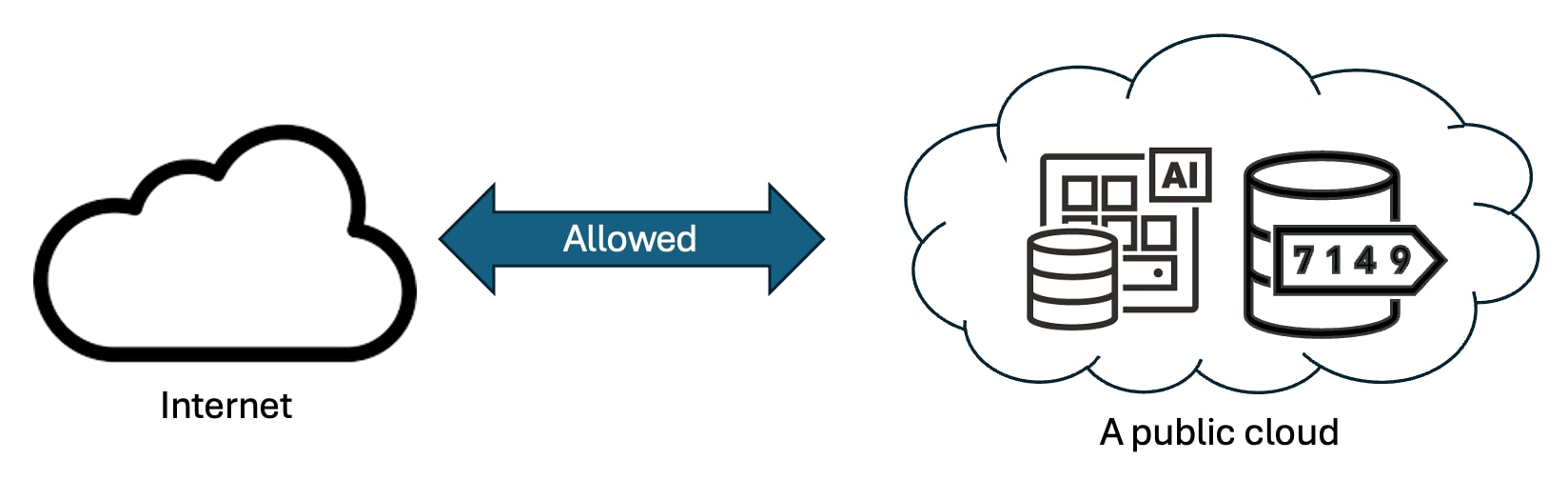

Public Cloud

The Private AI Services Container is an x86_64 Linux container.

It can run on:

- Oracle Cloud Infrastructure

- Amazon Web Services

- Microsoft Azure

- Google Cloud

However, most customers who are focused on their AI data security want to keep their data within their own environment and hence prefer to deploy within their own data centers or air-gapped systems.

Whether you run the Oracle Private AI Services Container on premises, in an air-gapped environment, or on a public cloud is the customer's choice.

PL/SQL Application

Oracle AI Database 26ai can make REST calls to the Private AI Services Container via its provider endpoint to create vectors.

The DBMS_VECTOR and DBMS_VECTOR_CHAIN PL/SQL packages enable HTTP and HTTPS REST calls to the Private AI Services Container via the UTL_TO_EMBEDDING and UTL_TO_EMBEDDINGS functions.

These REST calls to the container enable CPU offload from the database server.

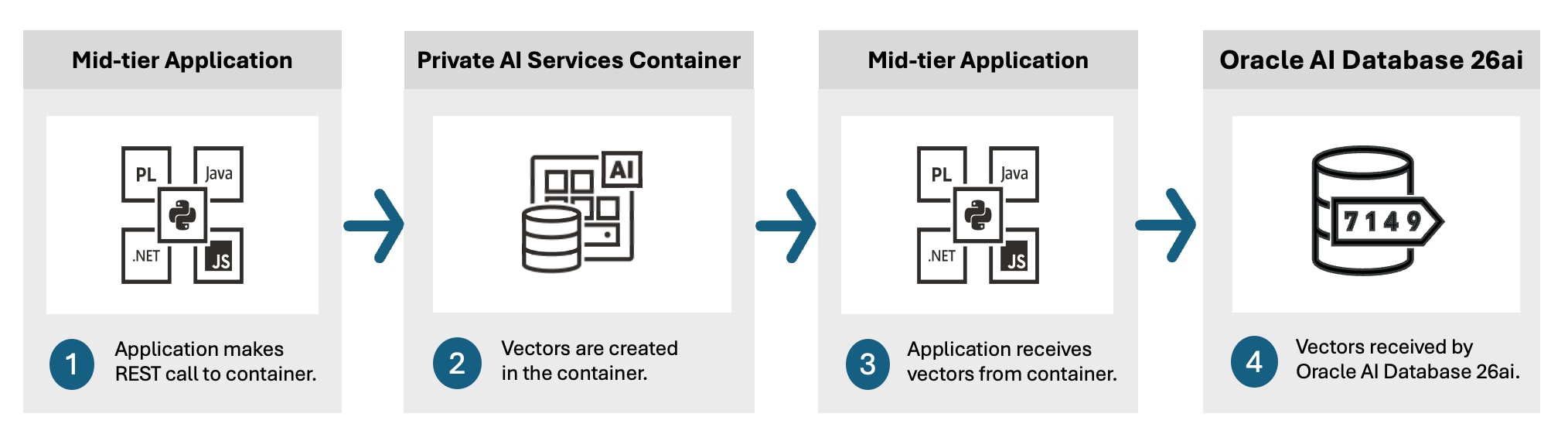

Mid-tier Application

An application can make REST calls to the Private AI Services Container to create vectors. The application continues to use the Oracle Client library to make SQL calls to Oracle AI Database 26ai over SQL*Net.

Using REST calls from the application to the container means that the Oracle AI Database server does not need to consume CPU cycles to create vectors.

The application can make REST calls via the OpenAI SDK. OpenAI SDK libraries are available for these languages:

- Python

- JavaScript

- Java

- .NET

- Go
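As an illustration of what such a REST call might look like on the wire, here is a minimal Python sketch that uses only the standard library instead of the SDK, so the request shape is visible. It assumes the container accepts the OpenAI embeddings request format at a `/v1/embeddings` path; the base URL, port, and model name below are hypothetical placeholders, not values taken from the product documentation.

```python
import json
import urllib.request

# Hypothetical values: replace with your container's address and a model it serves.
BASE_URL = "http://localhost:8080/v1"
MODEL = "example-embedding-model"


def build_embedding_request(texts, base_url=BASE_URL, model=MODEL):
    """Build (but do not send) an OpenAI-style POST request for embeddings."""
    payload = {"model": model, "input": list(texts)}
    return urllib.request.Request(
        url=f"{base_url}/embeddings",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def parse_embedding_response(body):
    """Extract the vectors from an OpenAI-style response, ordered by input index."""
    items = sorted(json.loads(body)["data"], key=lambda item: item["index"])
    return [item["embedding"] for item in items]


def embed(texts):
    """Send the request and return one vector per input text."""
    request = build_embedding_request(texts)
    with urllib.request.urlopen(request, timeout=30) as response:
        return parse_embedding_response(response.read())
```

Calling `embed(["a house with a garden"])` would return a list containing one vector, which the application can then bind into its SQL statement. Sending several texts in one request reduces the number of round trips to the container.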

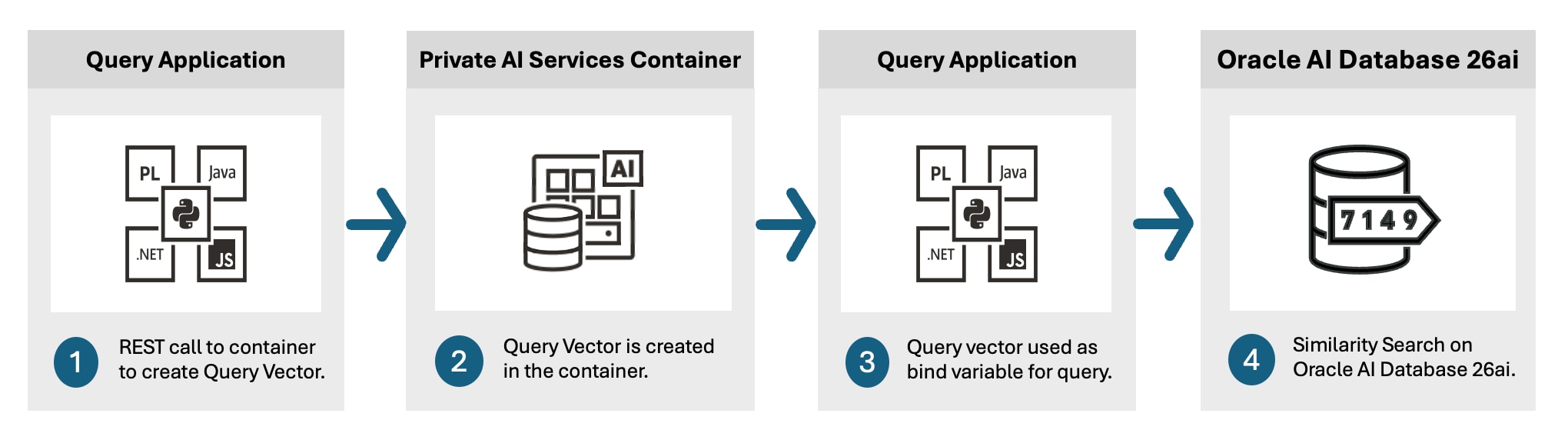

Create Query Vectors

Every time a similarity search using SQL is executed, a query vector needs to be created. This query vector is used as a bind variable for the vector_distance SQL function. For example:

SELECT * FROM houses_for_sale ORDER BY vector_distance(:query_vector, house_data_vectors) FETCH FIRST 10 ROWS ONLY;

The query vector is created from the relevant unstructured data (text, image, audio, video, or DNA).

If 100 database connections run similarity searches at the same time, then 100 query vectors need to be created at the same time. If the query vectors are created on the Oracle AI Database server, then the database server can become CPU bound when the number of concurrent queries exceeds the number of CPU cores.

If the query vector is created on the Private AI Services Container, it is the container's CPU cores that are consumed rather than the Oracle AI Database server's CPU cores.

Many containers can be used to create different query vectors in parallel.
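To make the role of the query vector concrete, the following Python sketch mimics what the similarity search query above does conceptually: compute a distance between the query vector and each data vector, order by that distance, and keep the closest rows. It assumes cosine distance as the metric, and the tiny two-dimensional vectors are invented for illustration. The database uses its own implementation and may use vector indexes rather than a full scan.

```python
import math


def cosine_distance(a, b):
    """Return 1 - cosine similarity; 0.0 means the vectors point the same way."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return 1.0 - dot / (norm_a * norm_b)


def top_k(query_vector, rows, k=10):
    """rows is a list of (row_id, data_vector); return the k closest rows."""
    return sorted(rows, key=lambda row: cosine_distance(query_vector, row[1]))[:k]


# Invented sample data standing in for a table with a VECTOR column.
houses = [("h1", [1.0, 0.0]), ("h2", [0.0, 1.0]), ("h3", [0.9, 0.1])]
query_vector = [1.0, 0.0]  # would normally come from the embedding model
nearest = [row_id for row_id, _ in top_k(query_vector, houses, k=2)]
print(nearest)
```

The distance computation itself is cheap compared with producing `query_vector` from raw text or images, which is the step that benefits from being offloaded to the container.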

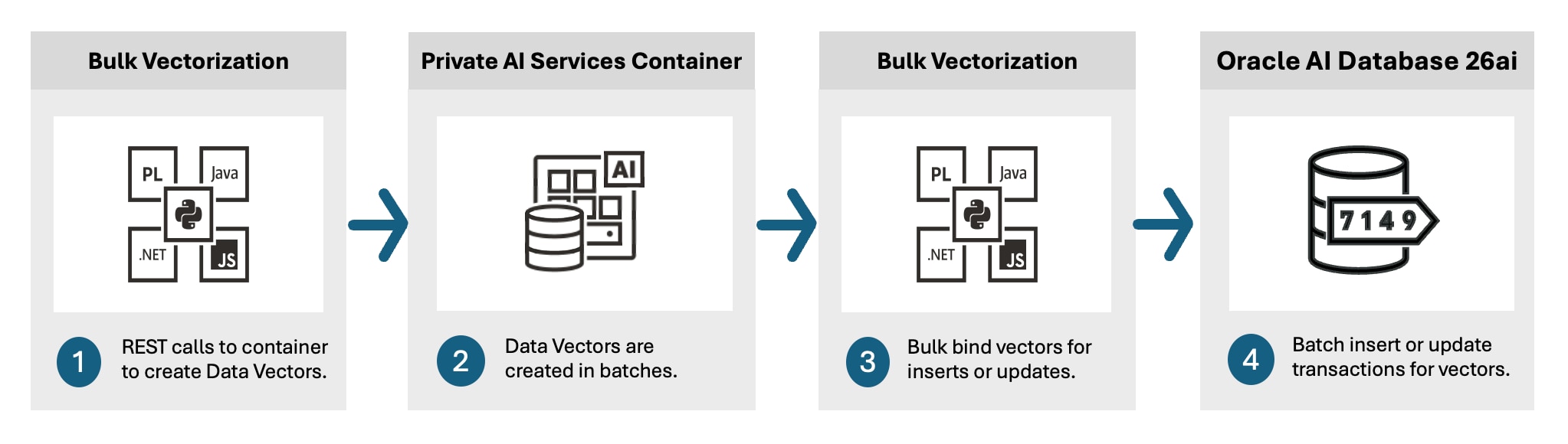

Create Data Vectors

To do a similarity search, you need a query vector, and a set of data vectors. The data vectors are for the unstructured data that you want to search. The data vectors should be created ahead of time rather than at query time.

You need to create a data vector for every row of unstructured data that you want to search and store each data vector in a VECTOR column. This could be for millions or even billions of rows of unstructured data.

The time taken to create the set of data vectors grows with the number of rows and the size of the vectors. Creating a data vector is dominated by CPU utilization due to the matrix multiplication operations.

If you can offload the creation of the data vectors to the Private AI Services Container, then the containers become CPU bound rather than the Oracle AI Database server. To maximize throughput, many different containers can be used concurrently to create data vectors for different subsets of the data.
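One way to spread data vector creation across several containers is to split the rows into batches and send the batches to the containers in round-robin order. The Python sketch below shows that pattern under stated assumptions: the container URLs are hypothetical, and `fake_embed` is a stand-in for the real REST call so the example runs without a network.

```python
from concurrent.futures import ThreadPoolExecutor

# Hypothetical container endpoints.
CONTAINER_URLS = ["http://ai-1:8080", "http://ai-2:8080", "http://ai-3:8080"]


def split_into_batches(rows, batch_size):
    """Cut the list of rows into consecutive batches of at most batch_size."""
    return [rows[i:i + batch_size] for i in range(0, len(rows), batch_size)]


def fake_embed(container_url, texts):
    """Stand-in for a REST call to one container; returns a 1-dimensional vector per text."""
    return [[float(len(text))] for text in texts]


def embed_all(rows, embed_fn=fake_embed, urls=CONTAINER_URLS, batch_size=2):
    """Embed every row, assigning batch i to container i mod N."""
    batches = split_into_batches(rows, batch_size)
    targets = [urls[i % len(urls)] for i in range(len(batches))]
    # At most len(urls) requests are in flight at any time.
    with ThreadPoolExecutor(max_workers=len(urls)) as pool:
        # map() yields results in the same order as the input batches.
        results = list(pool.map(embed_fn, targets, batches))
    return [vector for batch in results for vector in batch]
```

Threads are sufficient on the client side because the client mostly waits on network responses while the CPU-heavy work runs on the containers. Because the results come back in input order, each vector can be matched to its source row before it is written to the database.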

You still need to make SQL*Net calls to insert or update the data vectors in the Oracle AI Database.