MySQL HeatWave GenAI

Oracle MySQL HeatWave GenAI provides integrated, automated, and secure generative AI with in-database large language models (LLMs); an automated, in-database vector store; scale-out vector processing; and the ability to have contextual conversations in natural language—letting you take advantage of generative AI without AI expertise, data movement, or additional cost. MySQL HeatWave GenAI is available in Oracle Cloud Infrastructure (OCI), Amazon Web Services (AWS), and Microsoft Azure.

MySQL Global Forum: Celebrating 30 Years of MySQL

Watch the recorded webcast at your convenience. Hear about MySQL best practices from community experts and learn about new enhancements for developer productivity, cloud services, GenAI, and more.

Rich insights without complex data movement

NTT Solmare improved marketing campaigns and uncovered new revenue opportunities.

Free MySQL HeatWave workshop

Request a free expert-led workshop to evaluate MySQL HeatWave or get started with it.

Fireside chat with SmarterD

Learn how SmarterD fast-tracked its roadmap by 12 months and went from development to production in only one month using Oracle MySQL HeatWave GenAI.

Accelerate Your GenAI/ML Journey with MySQL HeatWave

Explore real-world use cases of generative AI and machine learning with MySQL HeatWave.

Why use MySQL HeatWave GenAI?

Quickly use generative AI anywhere

Use in-database LLMs across clouds and regions to help retrieve data and generate or summarize content—without the hassle of external LLM selection and integration.

Easily get more accurate and relevant answers

Let LLMs search your proprietary documents to help get more accurate and contextually relevant answers—without AI expertise or moving data to a separate vector database. MySQL HeatWave GenAI automates embedding generation.

Get faster results at lower cost

For similarity search, MySQL HeatWave GenAI is less expensive and is 15X faster than Databricks, 18X faster than Google BigQuery, and 30X faster than Snowflake.

Converse in natural language

Get rapid insights from your documents via natural language conversations. The MySQL HeatWave Chat interface preserves context to help enable human-like conversations with follow-up questions.

Key features of MySQL HeatWave GenAI

In-database LLMs

Use the built-in LLMs in all Oracle Cloud Infrastructure (OCI) regions, in OCI Dedicated Region, and across clouds, and get consistent results with predictable performance across deployments. Help reduce infrastructure costs by eliminating the need to provision GPUs.

Integrated with other generative AI services

Access pretrained foundational models from Cohere and Meta via the OCI Generative AI service when using MySQL HeatWave GenAI on OCI and via Amazon Bedrock when using MySQL HeatWave GenAI on AWS.

MySQL HeatWave Chat

Have contextual conversations in natural language informed by your unstructured data in MySQL HeatWave Vector Store. Use the integrated Lakehouse Navigator to help guide LLMs to search through specific documents, helping you reduce costs while getting more accurate results faster.

In-database vector store

MySQL HeatWave Vector Store houses your proprietary documents in various formats, acting as the knowledge base for retrieval-augmented generation (RAG) to help you get more accurate and contextually relevant answers—without moving data to a separate vector database.

Automated generation of embeddings

Leverage the automated pipeline to help discover proprietary documents and ingest them into MySQL HeatWave Vector Store, making it easier for developers and analysts without AI expertise to use the vector store.

Scale-out vector processing

Vector processing is parallelized across up to 512 MySQL HeatWave cluster nodes and executed at memory bandwidth, helping to deliver fast results with a reduced likelihood of accuracy loss.
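The general scatter-gather idea behind scale-out vector search can be sketched in a few lines of Python. This is a generic illustration under stated assumptions, not MySQL HeatWave's implementation: the partitions stand in for hypothetical cluster nodes, each of which scores only its own vectors by cosine similarity and returns its local top k, and a coordinator merges those lists. Because the global top k is always contained in the union of the local top-k lists, splitting an exact search this way does not by itself change its answer.

```python
import heapq
import math
from concurrent.futures import ThreadPoolExecutor


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def local_top_k(partition, query, k):
    """Runs on one hypothetical node: score only this node's vectors, keep the k best."""
    scored = ((cosine(vec, query), doc_id) for doc_id, vec in partition)
    return heapq.nlargest(k, scored)


def scale_out_search(partitions, query, k):
    """Scatter the query to every partition, then gather and merge the local results."""
    with ThreadPoolExecutor(max_workers=len(partitions)) as pool:
        local_results = list(pool.map(lambda p: local_top_k(p, query, k), partitions))
    # The global top k is a subset of the union of the local top-k lists.
    merged = heapq.nlargest(k, (hit for hits in local_results for hit in hits))
    return [(doc_id, round(score, 3)) for score, doc_id in merged]


if __name__ == "__main__":
    # Two hypothetical "nodes", each holding (document id, embedding) pairs.
    partitions = [
        [("doc-a", [1.0, 0.0]), ("doc-b", [0.0, 1.0])],
        [("doc-c", [0.9, 0.1]), ("doc-d", [-1.0, 0.0])],
    ]
    print(scale_out_search(partitions, [1.0, 0.0], k=2))
    # [('doc-a', 1.0), ('doc-c', 0.994)]
```

Python threads are used here only to show the structure; CPython's global interpreter lock means they will not speed up this pure-Python scoring, whereas a real system would run each partition on a separate node or core.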

Read the MySQL HeatWave GenAI technical brief.

Customer perspectives on MySQL HeatWave GenAI

“MySQL HeatWave GenAI makes it extremely simple to take advantage of generative AI. The support for in-database LLMs and in-database vector creation leads to significant reduction in application complexity, predictable inference latency, and, most of all, no additional cost to us to use the LLMs or create the embeddings. This is truly the democratization of generative AI, and we believe it will result in building richer applications with MySQL HeatWave GenAI and significant gains in productivity for our customers.”

—Vijay Sundhar, CEO, SmarterD

“We heavily use the in-database MySQL HeatWave AutoML for making various recommendations to our customers. MySQL HeatWave’s support for in-database LLMs and in-database vector store is differentiated and the ability to integrate generative AI with AutoML provides further differentiation for MySQL HeatWave in the industry, enabling us to offer new kinds of capabilities to our customers. The synergy with AutoML also improves the performance and quality of the LLM results.”

—Safarath Shafi, CEO, EatEasy

“MySQL HeatWave in-database LLMs, in-database vector store, scale-out in-memory vector processing, and MySQL HeatWave Chat are very differentiated capabilities from Oracle that democratize generative AI and make it very simple, secure, and inexpensive to use. Using MySQL HeatWave and AutoML for our enterprise needs has already transformed our business in several ways, and the introduction of this innovation from Oracle will likely spur growth of a new class of applications where customers are looking for ways to leverage generative AI on their enterprise content.”

—Eric Aguilar, Founder, Aiwifi

Who benefits from MySQL HeatWave GenAI?

Developers can deliver apps with built-in AI

Built-in LLMs and MySQL HeatWave Chat help you deliver apps that are preconfigured for contextual conversations in natural language, with no need for external LLMs or GPUs.

Analysts can rapidly get new insights

MySQL HeatWave GenAI can help you easily converse with your data, perform similarity searches across documents, and retrieve information from your proprietary data.

IT can help accelerate AI innovation

Empower developers and business teams with integrated capabilities and automation to take advantage of generative AI. Easily enable natural language conversations and RAG.

Use cases for MySQL HeatWave GenAI

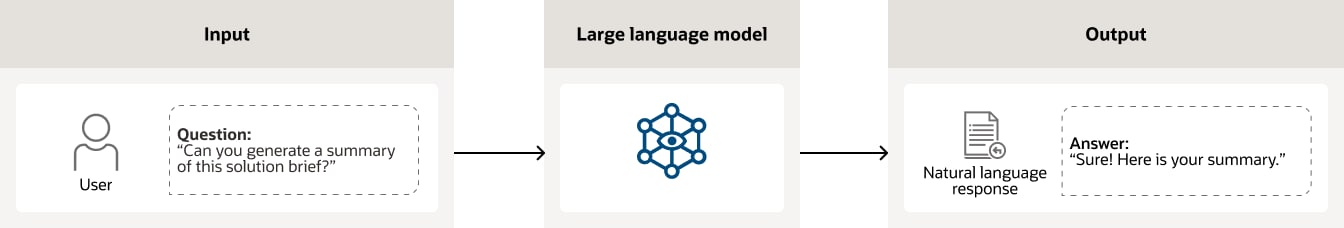

You can use the in-database LLMs to help generate or summarize content based on your unstructured documents. Users can ask questions in natural language via applications, and the LLM will process the request and deliver the content.

A user asks a question in natural language: “Can you generate a summary of this solution brief?” The large language model (LLM) processes this input and generates the summary as output.

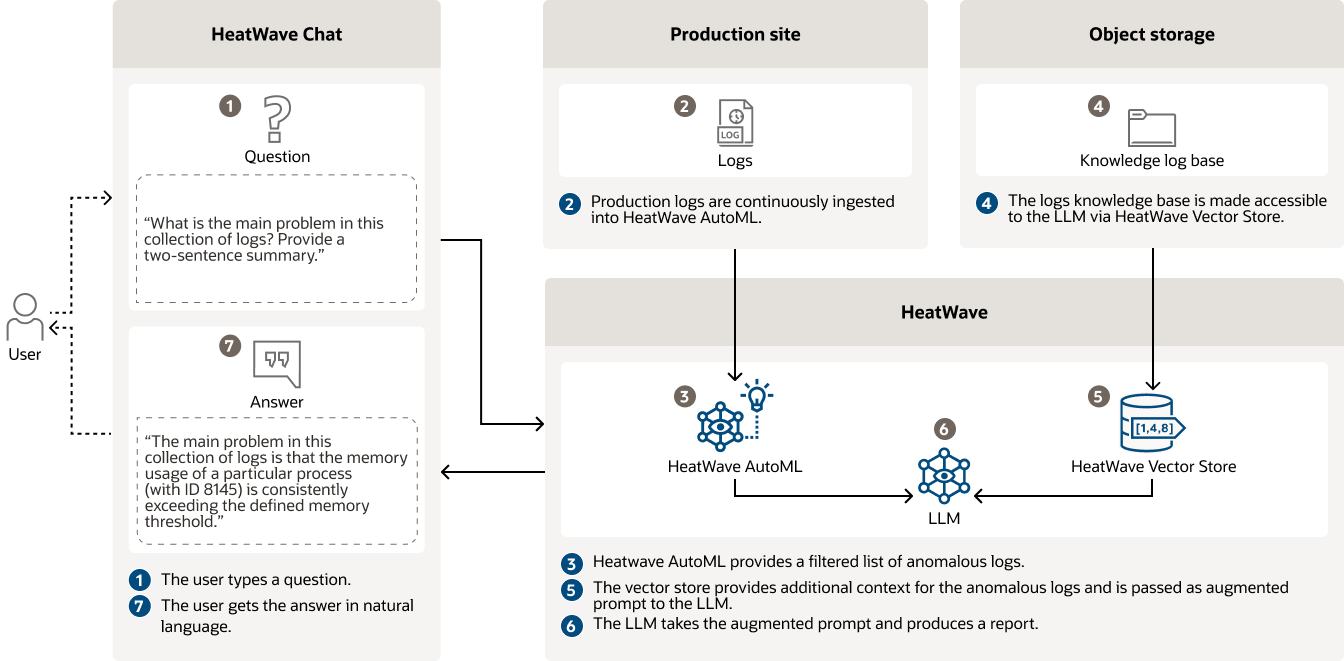

You can combine generative AI with other built-in MySQL HeatWave capabilities, such as machine learning, to help reduce costs and obtain more accurate results faster. In this example, a manufacturing company does so for predictive maintenance. Engineers can use Oracle MySQL HeatWave AutoML to help produce a report of anomalous production logs automatically, and MySQL HeatWave GenAI helps them rapidly determine the root cause of the issue by simply asking a question in natural language instead of manually analyzing the logs.

A user asks via MySQL HeatWave Chat: “What is the main problem in this collection of logs? Provide a two-sentence summary.” First, MySQL HeatWave AutoML produces a filtered list of anomalous logs from all the production logs it continuously ingests. Then MySQL HeatWave Vector Store provides additional context to the LLM based on the logs knowledge base. The LLM takes that augmented prompt, produces a report, and gives the user a detailed answer explaining the issue in natural language.

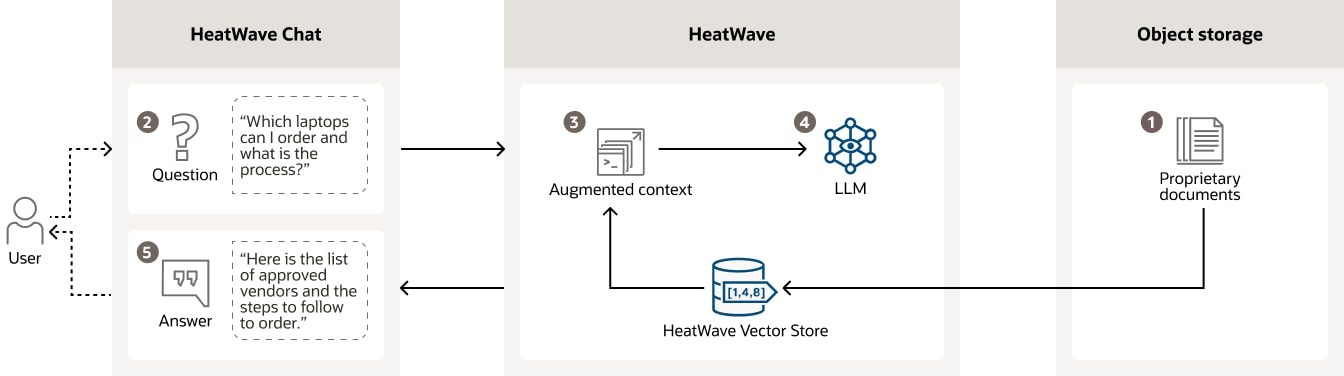

Chatbots can use RAG to, for example, help answer employees’ questions about internal company policies. Internal documents detailing policies are stored as embeddings in MySQL HeatWave Vector Store. For a given user query, the vector store helps to identify the most similar documents by performing a similarity search against the stored embeddings. These documents are used to augment the prompt given to the LLM so that it provides an accurate answer.
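The retrieve-then-augment flow described above can be sketched in plain Python. This is a hypothetical illustration, not MySQL HeatWave's API: the policy texts, their three-dimensional "embeddings", the question's embedding, and the prompt template are all made up, a real vector store would hold model-generated embeddings with many more dimensions, and the final LLM call is omitted.

```python
import math

# Hypothetical policy documents with hand-written 3-dimensional "embeddings".
POLICY_DOCS = {
    "laptop-policy": (
        "Employees may order approved laptops from listed vendors via the IT portal.",
        [0.9, 0.1, 0.0],
    ),
    "travel-policy": (
        "Business travel must be booked through the corporate travel desk.",
        [0.1, 0.9, 0.1],
    ),
    "expense-policy": (
        "Expense reports must be submitted within 30 days of purchase.",
        [0.0, 0.3, 0.9],
    ),
}


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def retrieve(query_vec, docs, k):
    """Similarity search: return the texts of the k documents closest to the query."""
    ranked = sorted(docs.values(), key=lambda doc: cosine(query_vec, doc[1]), reverse=True)
    return [text for text, _ in ranked[:k]]


def build_augmented_prompt(question, passages):
    """Prepend the retrieved passages to the question so the LLM can ground its answer."""
    context = "\n".join(f"- {p}" for p in passages)
    return (
        "Answer the question using only the context below.\n"
        f"Context:\n{context}\n"
        f"Question: {question}"
    )


if __name__ == "__main__":
    question = "Which laptops can I order and what is the process?"
    query_vec = [0.8, 0.2, 0.1]  # hypothetical embedding of the question
    prompt = build_augmented_prompt(question, retrieve(query_vec, POLICY_DOCS, k=1))
    print(prompt)  # this augmented prompt is what would be sent to the LLM
```

Restricting the LLM to the retrieved context is what lets it answer from internal policies it was never trained on.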

A user asks via MySQL HeatWave Chat: “Which laptops can I order and what is the process?” MySQL HeatWave processes the question by accessing internal policy documents housed in MySQL HeatWave Vector Store. It then passes an augmented prompt to the LLM, which can generate the response “Here is the list of approved vendors and the steps to follow to order.”

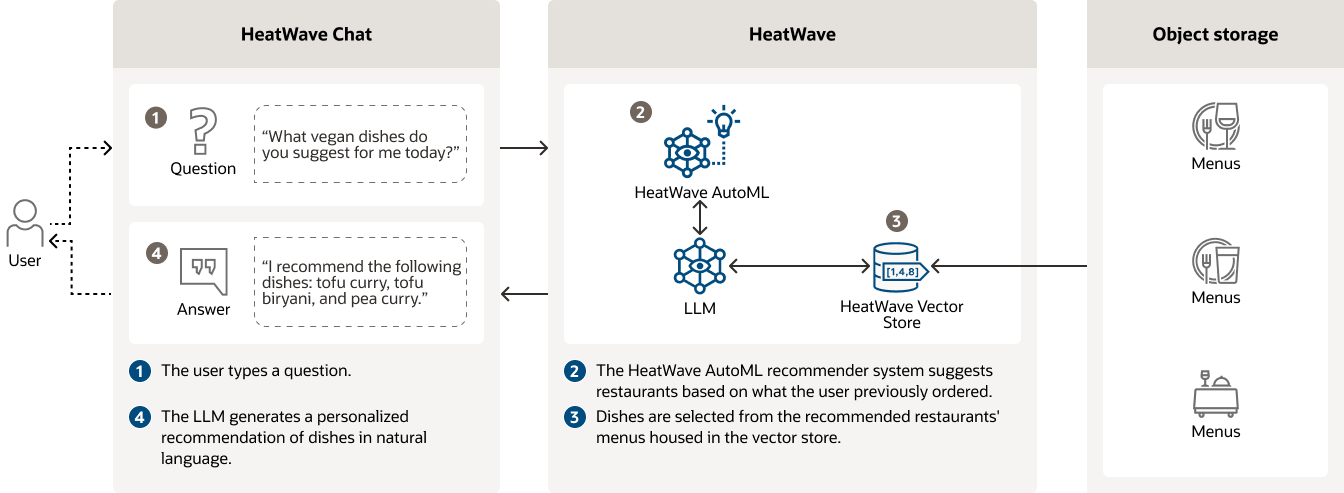

Developers can build applications that combine the built-in ML and generative AI in MySQL HeatWave to deliver personalized recommendations. In this example, the application uses the MySQL HeatWave AutoML recommender system to help suggest restaurants based on the user’s preferences or previous orders. With MySQL HeatWave Vector Store, the application can also search restaurants’ menus in PDF format to suggest specific dishes, providing greater value to customers.

A user asks via MySQL HeatWave Chat: “What vegan dishes do you suggest for me today?” First, the MySQL HeatWave AutoML recommender system suggests a list of restaurants based on what the user previously ordered. Then MySQL HeatWave Vector Store provides an augmented prompt to the LLM based on the restaurants’ menus it houses. The LLM then generates a personalized recommendation of dishes in natural language.

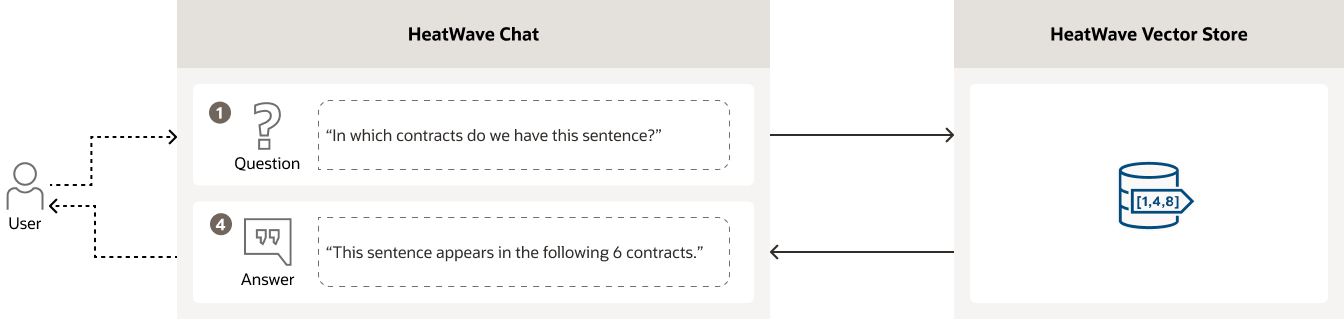

Similarity search finds related content based on semantics. It goes beyond simple keyword search by considering the underlying meaning of the content instead of only matching keywords or applied tags. In this example, a lawyer wants to quickly identify a potentially problematic clause in contracts.

A lawyer asks via MySQL HeatWave Chat: “In which contracts do we have this sentence?” MySQL HeatWave Vector Store performs a similarity search and provides the answer “This sentence appears in the following 6 contracts.”

See what top industry analysts say about MySQL HeatWave GenAI

“With in-database LLMs that are ready to go and a fully automated vector store that’s ready for vector processing on day one, MySQL HeatWave GenAI takes AI simplicity—and price performance—to a level that its competitors such as Snowflake, Google BigQuery and Databricks can’t remotely begin to approach.”

Steve McDowell

Principal Analyst and Founding Partner, NAND Research

“MySQL HeatWave’s engineering innovation continues to deliver on the vision of a universal cloud database. The latest is generative AI done ‘MySQL HeatWave style’—which includes the integration of an automated, in-database vector store and in-database LLMs directly into the MySQL HeatWave core. This enables developers to create new classes of applications as they combine MySQL HeatWave elements.”

Holger Mueller

Vice President and Principal Analyst, Constellation Research

“MySQL HeatWave GenAI has delivered vector processing performance that is 30X faster than Snowflake, 18X faster than Google BigQuery and 15X faster than Databricks—at up to 6X lower cost. For any organization serious about high performance generative AI workloads, spending company resources on any of these three or other vector database offerings is the equivalent of burning money and trying to justify it as a good idea.”

Ron Westfall

Senior Analyst and Research Director, The Futurum Group

“MySQL HeatWave is taking a big step in making generative AI and Retrieval-Augmented Generation (RAG) more accessible by pushing all the complexity of creating vector embeddings under the hood. Developers simply point to the source files sitting in cloud object storage, and MySQL HeatWave then handles the heavy lift.”

Tony Baer

Founder and CEO, dbInsight

Learn more about other MySQL HeatWave solutions for your different workloads

Get started with MySQL HeatWave GenAI

Access the documentation

Easily build GenAI applications

Follow step-by-step instructions and use the code we provide to quickly and easily build applications powered by MySQL HeatWave GenAI.

Sign up for the service

Sign up for a free trial of MySQL HeatWave GenAI. You’ll get US$300 in cloud credit to try its capabilities for 30 days, plus free access to numerous MySQL HeatWave capabilities for an unlimited time.

Contact sales

Interested in learning more about MySQL HeatWave GenAI? Let one of our experts help.