How to Get Started Creating Oracle Solaris Kernel Zones in Oracle Solaris 11

by Duncan Hardie

This article demonstrates three methods for creating, configuring, and managing Oracle Solaris Kernel Zones, a feature introduced in Oracle Solaris 11.2 that provides all the flexibility, scalability, and efficiency of Oracle Solaris Zones while adding the ability to have zones that have independent kernels.

Published July 2014 (Updated July 2018)


Introduction

About Oracle Solaris Zones and Kernel Zones

Benefits of Each Installation Method

Prerequisites

Creating Your First Kernel Zone Using the Direct Installation Method

Updating a Kernel Zone to a Later Oracle Solaris Release

Installing a Kernel Zone from an ISO Image

Converting a Native Zone to a Kernel Zone

Conclusion

See Also

About the Author

Introduction

Oracle Solaris 11 is a complete, integrated, and open platform engineered for large-scale enterprise environments. Its built-in Oracle Solaris Native Zones technology provides application virtualization through isolated, encapsulated, and highly secure environments that run on top of a single, common kernel. As a result, native zones provide a highly efficient, scalable, zero-overhead virtualization solution that sits at the core of the platform.

With the inclusion of the Kernel Zones feature, Oracle Solaris 11.2 provides a flexible, cost-efficient, cloud-ready solution that is perfect for the data center.

This article describes how to create kernel zones in Oracle Solaris 11, as well as how to configure the kernel zone to your requirements, install it, and boot it.

You will learn about the two main methods of installing a kernel zone: direct installation and installation via an ISO image. In addition, you will learn about a third installation method that enables you to convert a native zone to a kernel zone. You will learn how to update a kernel zone so that it uses a different Oracle Solaris release than the release that is running in the host machine's kernel.

The examples in this article will leave you familiar with the basic procedures for installing, configuring, and managing kernel zones in Oracle Solaris 11.3 and 11.4.

Note: This article demonstrates how to update a kernel zone from Oracle Solaris 11.3 to Oracle Solaris 11.4. This shows the power of kernel zones and how you can use them to move between different patch-levels and updates of Oracle Solaris within the kernel zone with no restriction on the version of Oracle Solaris in the Global Zone.

About Oracle Solaris Zones and Kernel Zones

Oracle Solaris Zones let you isolate one application from others on the same operating system (OS), allowing you to create a user-, security-, and resource-controlled environment suitable to that particular application. Each Oracle Solaris Zone can contain a complete environment and also allows you to control different resources such as CPU, memory, networking, and storage.

The system administrator who owns the host system can choose to closely manage all the Oracle Solaris Zones on the system. Alternatively, the system administrator can assign rights to other system administrators for specific Oracle Solaris Zones. This flexibility lets you tailor an entire computing environment to the needs of a particular application.

Kernel zones, the newest type of Oracle Solaris Zones, provide all the flexibility, scalability, and efficiency of Oracle Solaris Zones while adding the capability to have zones with independent kernels. This capability is highly useful when you are trying to coordinate the updating of multiple zone environments belonging to different owners.

With kernel zones, the updates can be done at the level of an individual kernel zone at a time that is convenient for each owner. In addition, applications that have specific version requirements can run side by side on the same system and benefit from the high consolidation ratios that Oracle Solaris Zones provide.

Another advantage of using kernel zones is that you can use them to live migrate the zones to another system without the need to stop the zone and the applications running in it. This aspect of kernel zones will not be covered in this article. For more information on Live Migration please refer to the Oracle product documentation on Migrating an Oracle Solaris Kernel Zone.

Benefits of Each Installation Method

In this article, we will create three kernel zones using different methods:

- The first method will show how quickly and easily you can create a new kernel zone using a direct installation—that is, an installation based on the OS running on the host system. This is an extremely useful method for getting additional kernel zone environments up and running quickly in response to a new application or user demands.

- Using the second method, you will learn how to create a kernel zone from an ISO image. This is useful when it is desirable to deploy a specific kernel version to support an application or environment.

- Using the final method, you will learn how to convert a native zone to a kernel zone. This is useful when you want to update an application or service to run on a later kernel version without affecting the other services running on the system.

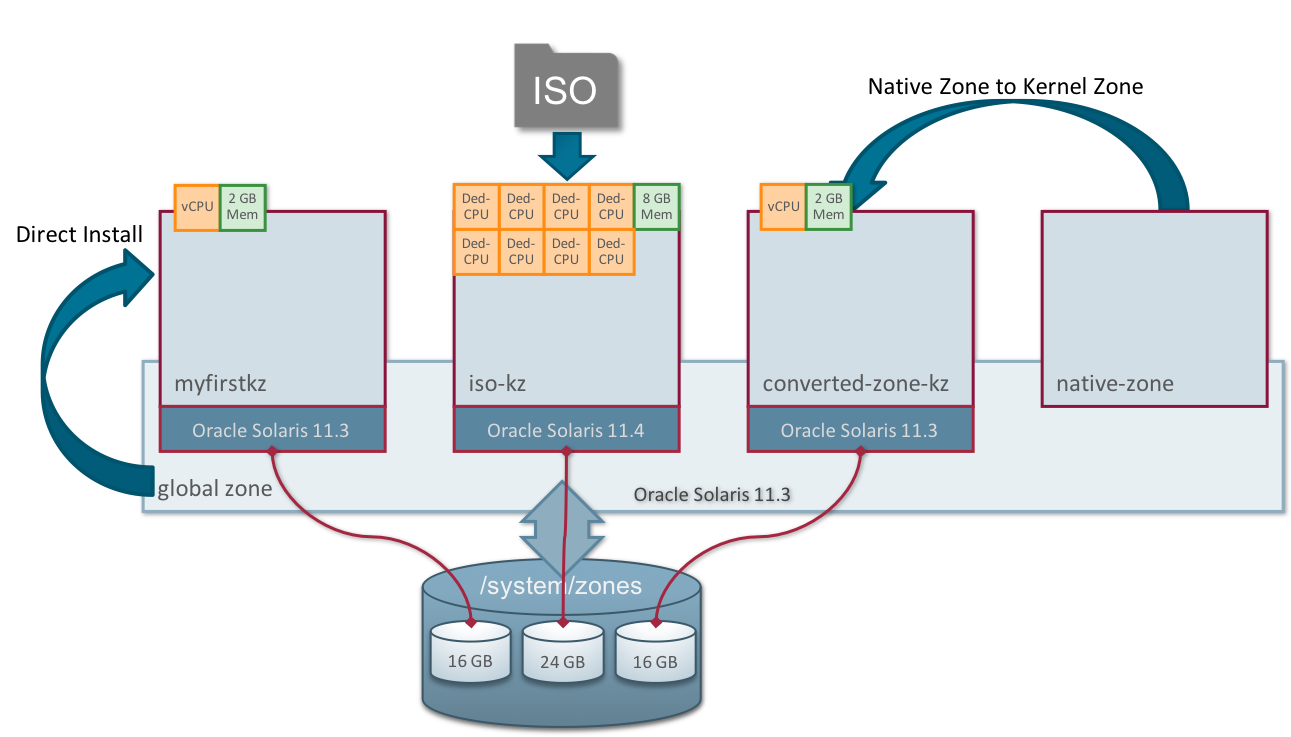

Figure 1 summarizes what we will do:

Figure 1. Illustration of the three methods for creating kernel zones

Prerequisites

There are a couple of tasks that need to be completed before we create our first kernel zone. We need to check that the hardware is capable of running kernel zones, and we also need to provide a hint to the system about application memory usage.

Checking the Hardware Capabilities

Kernel zones will run only on certain types of hardware, as follows:

- Intel CPUs with CPU virtualization (VT-x) enabled in BIOS and with support for Extended Page Tables (EPT), such as Nehalem or newer CPUs

- AMD CPUs with CPU virtualization (AMD-v) enabled in BIOS and with support for Nested Page Tables (NPT), such as Barcelona or newer CPUs

- sun4v CPUs with a "wide" partition register, for example, Oracle's SPARC T4 or SPARC T5 processors running a supported firmware version and Oracle's SPARC M5, SPARC M6, or newer processors

- sun4v CPUs on Fujitsu M10/SPARC M10 systems running at least XCP Firmware 2230 and Solaris 11.2 SRU4 or later

You can easily check that the system is capable of running kernel zones by using the virtinfo command, as shown in Listing 1:

root@global:~# virtinfo
NAME            CLASS
logical-domain  current
non-global-zone supported
kernel-zone     supported
logical-domain  supported

Listing 1

You can see from the output in Listing 1 that kernel zones are supported.
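If you need to run this check across many hosts, the same test can be scripted. The following is a minimal Python sketch, not part of the original procedure, that reads virtinfo output as text and reports whether kernel-zone appears with class supported. The sample text is copied from Listing 1, and the function names are hypothetical.

```python
# Sample text copied from Listing 1. On a real host this text would come
# from running the virtinfo command.
SAMPLE_VIRTINFO = """\
NAME            CLASS
logical-domain  current
non-global-zone supported
kernel-zone     supported
logical-domain  supported
"""


def supported_virtualization(virtinfo_output):
    """Return the set of names whose CLASS column is 'supported'."""
    supported = set()
    for line in virtinfo_output.splitlines()[1:]:  # skip the header row
        fields = line.split()
        if len(fields) == 2 and fields[1] == "supported":
            supported.add(fields[0])
    return supported


def kernel_zones_supported(virtinfo_output):
    """True when 'kernel-zone' is listed as supported."""
    return "kernel-zone" in supported_virtualization(virtinfo_output)


print(kernel_zones_supported(SAMPLE_VIRTINFO))
```

The sketch assumes the two-column layout shown in Listing 1; other virtinfo options print different columns and would need a different parser.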

There are some other hardware prerequisites, and for Oracle Solaris 11.4 certain older systems have been retired from the list of supported systems. For a full list of requirements for kernel zones, see the Oracle Solaris Kernel Zones documentation.

Providing Information About Application Memory Usage

When using kernel zones, it is necessary to provide a hint to the system about application memory usage. This information is used to limit the growth of the ZFS Adaptive Replacement Cache (ARC) so that more memory stays available for applications and, in this case, for the kernel zones themselves.

Providing this hint is achieved by setting the user_reserve_hint_pct parameter. A script is provided for doing this, and the current recommendation is to set the value to 80.

root@global:~# ./set_user_reserve.sh -f 80
Adjusting user_reserve_hint_pct from 0 to 80
Adjustment of user_reserve_hint_pct to 80 successful.

You can find this script and more information by visiting the My Oracle Support website and then accessing Doc ID 1663862.1. Note that to make this setting persistent across reboots, you also need to edit /etc/system.

Creating Your First Kernel Zone Using the Direct Installation Method

For a full discussion on all the steps involved in creating a kernel zone and configuring all its attributes, please see Creating and Using Oracle Solaris Kernel Zones. This article will concentrate on a subset of the steps to demonstrate how to quickly get a kernel zone instance up and running.

Prerequisites

First, check the status of the ZFS file system and the network, as shown in Listing 2:

root@global:~# zfs list | grep zones
rpool/VARSHARE/zones   16.5G   348G   32K  /system/zones
root@global:~# dladm show-link
LINK    CLASS  MTU   STATE    OVER
net5    phys   1500  unknown  --
net3    phys   1500  unknown  --
net6    phys   1500  unknown  --
net7    phys   1500  unknown  --
net8    phys   1500  unknown  --
net0    phys   1500  unknown  --
net1    phys   1500  up       --
net9    phys   1500  unknown  --
net10   phys   1500  up       --
net4    phys   1500  unknown  --
net2    phys   1500  unknown  --

Listing 2

Note: In Listing 2, there are no ZFS datasets associated with any specific zones. We will see later how these are created for you as you install zones. Also note that there are no virtual network interface card (VNIC) devices.

Let's also check the Oracle Solaris version of the global zone, as shown in Listing 3, because we will use this information later:

root@global:~# uname -a
SunOS dcsw-t52-1 5.11 11.3 sun4v sparc sun4v

Listing 3

In Listing 3, we can see the version is Oracle Solaris 11.3.

Note: In this article, we will use uname as a quick way of showing the kernel version of the system. However, that is not the recommended way to check the system version. The recommended way is to query the entire package, as shown in Listing 4, which also indicates that the version is Oracle Solaris 11.3. (See "Understanding Oracle Solaris 11 Package Versioning" for an explanation about how to decipher the output when you query the entire package.)

root@global:~# pkg list entire
NAME (PUBLISHER)   VERSION                   IFO
entire             0.5.11-0.175.3.33.0.5.0   i--

Listing 4

We can also see that the system has a publisher set up:

root@global:~# pkg publisher
PUBLISHER   TYPE     STATUS   P   LOCATION
solaris     origin   online   F   http://pkg.oracle.com/solaris11/support/

Step 1: Create the Kernel Zone

Let's start by creating our first kernel zone using the command line, as shown in Listing 5:

root@global:~# zonecfg -z myfirstkz create -t SYSsolaris-kz

Listing 5

In Listing 5, note that all we need to supply is the zone name (myfirstkz) and the kernel zone template (SYSsolaris-kz), which sets the zone's brand to solaris-kz.

By default, all Oracle Solaris Zones are configured to have an automatic VNIC called anet, which gives us a network device automatically. We cannot see this network device yet, because it is created automatically when the zone boots (and destroyed automatically on shutdown). We can check this by using the dladm command:

root@global:~# dladm show-link
LINK    CLASS  MTU   STATE    OVER
net5    phys   1500  unknown  --
net3    phys   1500  unknown  --
net6    phys   1500  unknown  --
net7    phys   1500  unknown  --
net8    phys   1500  unknown  --
net0    phys   1500  unknown  --
net1    phys   1500  up       --
net9    phys   1500  unknown  --
net10   phys   1500  up       --
net4    phys   1500  unknown  --
net2    phys   1500  unknown  --

We can also see that, as of yet, no storage has been created for our kernel zone:

root@global:~# zfs list | grep zones
rpool/VARSHARE/zones   31K   411G   31K  /system/zones

We can verify that the kernel zone is now in the configured state by using the zoneadm command:

root@global:~# zoneadm list -cv
  ID NAME       STATUS      PATH  BRAND       IP
   0 global     running     /     solaris     shared
   - myfirstkz  configured  -     solaris-kz  excl

Let's take a look at the default settings for the kernel zone that we have created. We can do this by passing the info option to the zonecfg command, as shown in Listing 6:

root@global:~# zonecfg -z myfirstkz info
zonename: myfirstkz
brand: solaris-kz
autoboot: false
autoshutdown: shutdown
bootargs:
pool:
scheduling-class:
hostid: 0x2065233a
tenant:
anet:
        lower-link: auto
        allowed-address not specified
        configure-allowed-address: true
        defrouter not specified
        allowed-dhcp-cids not specified
        link-protection: mac-nospoof
        mac-address: auto
        mac-prefix not specified
        mac-slot not specified
        vlan-id not specified
        priority not specified
        rxrings not specified
        txrings not specified
        mtu not specified
        maxbw not specified
        bwshare not specified
        rxfanout not specified
        vsi-typeid not specified
        vsi-vers not specified
        vsi-mgrid not specified
        etsbw-lcl not specified
        cos not specified
        pkey not specified
        linkmode not specified
        evs not specified
        vport not specified
        iov: off
        lro: auto
        id: 0
device:
        match not specified
        storage: dev:/dev/zvol/dsk/rpool/VARSHARE/zones/myfirstkz/disk0
        id: 0
        bootpri: 0
virtual-cpu:
        ncpus: 4
capped-memory:
        physical: 4G
        pagesize-policy: largest-available

Listing 6

From the output in Listing 6, we can see that the zone is called myfirstkz, that it is a kernel zone (brand: solaris-kz), that we have a boot disk (and its location is dev:/dev/zvol/dsk/rpool/VARSHARE/zones/myfirstkz/disk0) and, finally, that we have 4 GB of physical memory assigned to this kernel zone.

We also see the amount of CPU resources we have for this kernel zone. When nothing is specified, the default is to have 4 virtual CPUs assigned. We'll see how to verify this later when we boot the kernel zone.
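The defaults discussed above can also be pulled out of the zonecfg info output programmatically. The following Python sketch is illustrative only: it assumes that the properties of a resource such as virtual-cpu or capped-memory are indented under the resource name, which is how zonecfg normally prints them, and it uses a shortened sample rather than the full output of Listing 6. The function name is hypothetical.

```python
# Shortened sample in the layout zonecfg info normally uses; the indentation
# is an assumption, because the listing in the article is flattened.
SAMPLE_ZONECFG = """\
zonename: myfirstkz
brand: solaris-kz
device:
        match not specified
        storage: dev:/dev/zvol/dsk/rpool/VARSHARE/zones/myfirstkz/disk0
virtual-cpu:
        ncpus: 4
capped-memory:
        physical: 4G
        pagesize-policy: largest-available
"""


def parse_zonecfg_info(text):
    """Return (top_level_properties, resources) from zonecfg info text."""
    top, resources = {}, {}
    current = None
    for line in text.splitlines():
        if not line.strip():
            continue
        # Split at the first colon only, so values such as dev:/dev/... survive.
        key, sep, value = line.strip().partition(":")
        if line[0].isspace():
            # Indented line: a property of the current resource.
            # Lines such as "match not specified" have no colon and are skipped.
            if sep and current is not None:
                resources.setdefault(current, {})[key] = value.strip()
        else:
            current = key
            top[key] = value.strip()
    return top, resources


top, resources = parse_zonecfg_info(SAMPLE_ZONECFG)
print(top["brand"], resources["virtual-cpu"]["ncpus"], resources["capped-memory"]["physical"])
```

A zone with several anet or device resources would need a list per resource name instead of a single dictionary; the sketch keeps one entry per name for brevity.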

Step 2: Install the Kernel Zone

Now that the kernel zone has been created, we need to install it.

For this first installation, we are going to use what is called a direct installation. With a direct installation, the installer runs on the host. It creates and formats the kernel zone's boot disk and installs Oracle Solaris packages on that disk, using the host's package publishers. Because the installer runs on the host, it can install only the exact version of Oracle Solaris that is actively running on the host.

This installation method makes use of the Oracle Solaris 11 Image Packaging System. You will need to make sure you have access to your Image Packaging System repository; in this case, we have network access to our repository. For more details on the Image Packaging System, see "Introducing the Basics of Image Packaging System (IPS) on Oracle Solaris 11."

Run the following command to install the myfirstkz kernel zone:

root@global:~# zoneadm -z myfirstkz install

Progress being logged to /var/log/zones/zoneadm.20180703T020944Z.myfirstkz.install

pkg cache: Using /var/pkg/publisher.

Install Log: /system/volatile/install.4364/install_log

AI Manifest: /tmp/zoneadm3908.35FyId/devel-ai-manifest.xml

SC Profile: /usr/share/auto_install/sc_profiles/enable_sci.xml

Installation: Starting ...

Creating IPS image

Installing packages from:

solaris

origin: http://pkg.oracle.com/solaris11/support/

The following licenses have been accepted and not displayed.

Please review the licenses for the following packages post-install:

consolidation/osnet/osnet-incorporation

Package licenses may be viewed using the command:

pkg info --license

DOWNLOAD                                PKGS         FILES    XFER (MB)   SPEED
Completed                            445/445   67036/67036  715.9/715.9    0B/s

PHASE                                          ITEMS
Installing new actions                   90660/90660
Updating package state database                 Done
Updating package cache                           0/0
Updating image state                            Done
Creating fast lookup database                   Done
Installation: Succeeded
Done: Installation completed in 284.090 seconds.

We can check on the status of the myfirstkz kernel zone using the zoneadm command:

root@global:~# zoneadm list -cv
  ID NAME       STATUS     PATH  BRAND       IP
   0 global     running    /     solaris     shared
   - myfirstkz  installed  -     solaris-kz  excl
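When you script an installation, it helps to wait for the zone to reach the expected state before moving on. As a minimal sketch, the following Python function turns zoneadm list -cv output into a mapping from zone name to state. The sample text mirrors the output shown above, and the function name is hypothetical.

```python
# Sample text mirroring the zoneadm list -cv output shown above.
SAMPLE_ZONEADM = """\
  ID NAME       STATUS     PATH  BRAND       IP
   0 global     running    /     solaris     shared
   - myfirstkz  installed  -     solaris-kz  excl
"""


def zone_states(zoneadm_output):
    """Map each zone name to its STATUS column."""
    states = {}
    for line in zoneadm_output.splitlines()[1:]:  # skip the header row
        fields = line.split()
        if len(fields) >= 3:
            # Columns: ID, NAME, STATUS, PATH, BRAND, IP
            states[fields[1]] = fields[2]
    return states


print(zone_states(SAMPLE_ZONEADM))
```

The sketch splits on whitespace, so it assumes that zone names and paths contain no spaces, which is true for the examples in this article.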

Note: A kernel zone needs a boot disk on which it is installed; by using the command shown in Listing 7, we can see that this boot disk has been created for us:

root@global:~# zfs list | grep zones
rpool/VARSHARE/zones                   16.5G   393G    32K  /system/zones
rpool/VARSHARE/zones/myfirstkz         16.5G   393G    31K  /system/zones/myfirstkz
rpool/VARSHARE/zones/myfirstkz/disk0   16.5G   407G  2.84G  -

Listing 7

You can see in Listing 7 that the /myfirstkz/disk0 dataset has been created automatically for you.

Step 3: Boot the Kernel Zone and Complete the System Configuration

The final step in getting myfirstkz up and running is to boot it and set up the system configuration. We will boot the zone and then access its console using one command at the command line, as shown in Listing 8, so the majority of the console output can be seen:

root@global:~# zoneadm -z myfirstkz boot; zlogin -C myfirstkz
[Connected to zone 'myfirstkz' console]
NOTICE: Entering OpenBoot.
NOTICE: Fetching Guest MD from HV.
NOTICE: Starting additional cpus.
NOTICE: Initializing LDC services.
NOTICE: Probing PCI devices.
NOTICE: Finished PCI probing.
SPARC T5-2, No Keyboard
Copyright (c) 1998, 2015, Oracle and/or its affiliates. All rights reserved.
OpenBoot 4.38.1, 4.0000 GB memory available, Serial #543499066.
Ethernet address 0:0:0:0:0:0, Host ID: 2065233a.
Boot device: disk0 File and args:
SunOS Release 5.11 Version 11.3 64-bit
Copyright (c) 1983, 2018, Oracle and/or its affiliates. All rights reserved.
Loading smf(5) service descriptions: 180/180
Configuring devices.

Listing 8

Note: The -C option to zlogin shown in Listing 8 lets us access the zone console; the command will bring us into the zone and let us work within the zone.

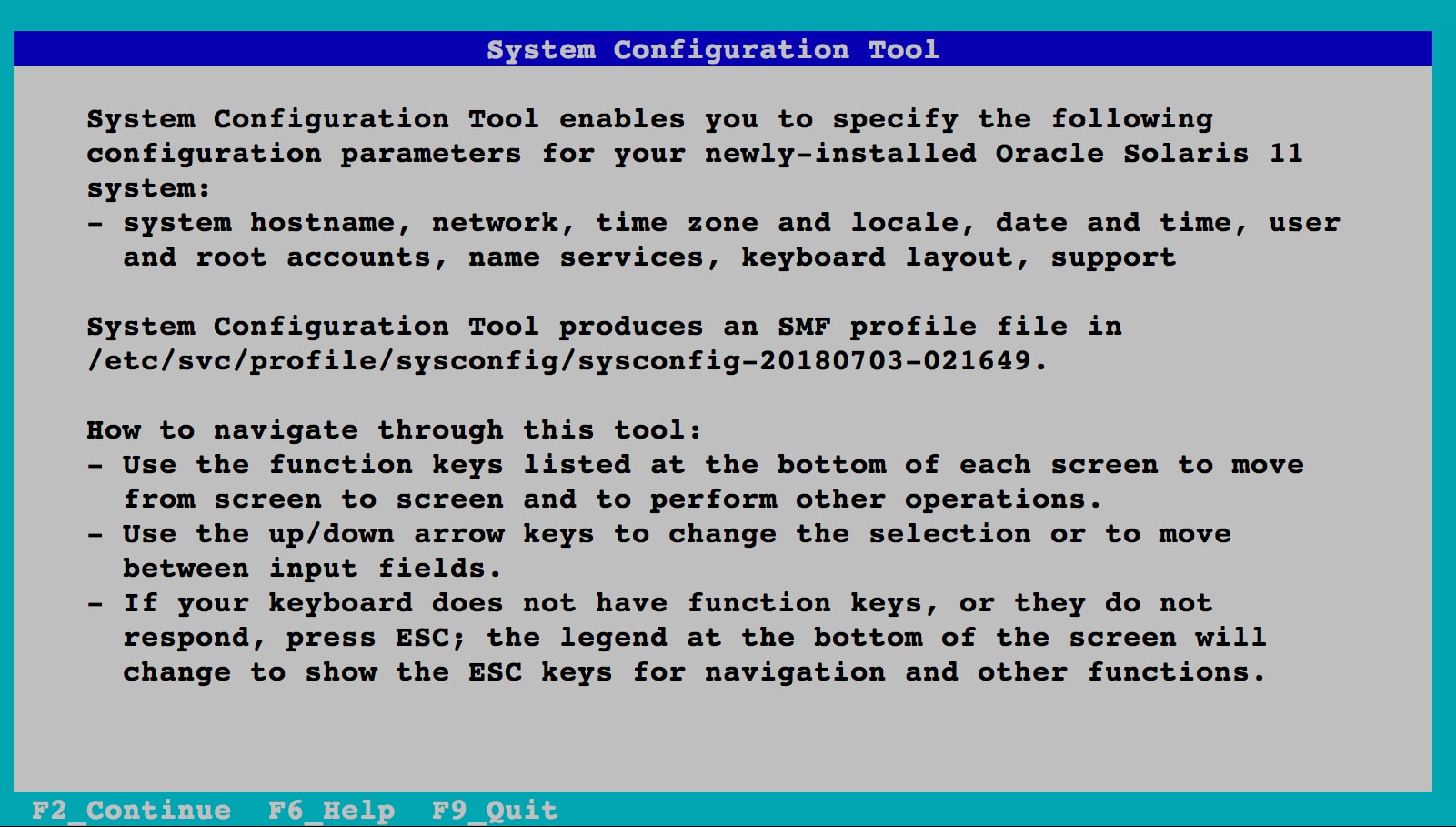

Because no system configuration files are available, the System Configuration Tool starts up, as shown in Figure 2.

Figure 2. Initial screen of the System Configuration Tool

Press F2 to continue.

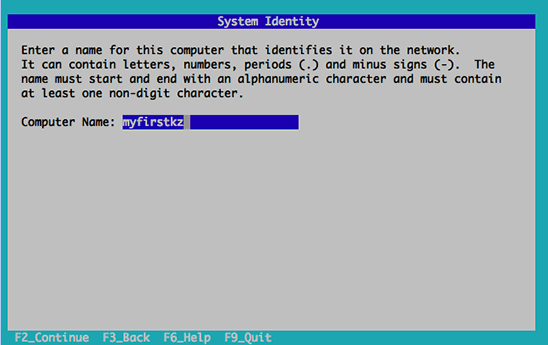

In the System Identity screen (shown in Figure 3), enter myfirstkz as the computer name, and then press F2 to continue.

Figure 3. System Identity screen

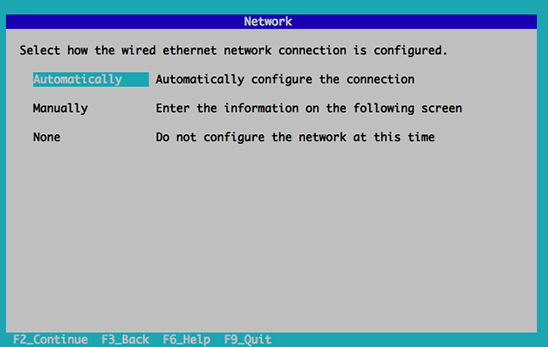

In the Network screen (shown in Figure 4), enter the network settings appropriate for your network, and then press F2. Here we will select Automatically.

Figure 4. Network screen

In the Time Zone: Regions screen (shown in Figure 5), choose the time zone region appropriate for your location. In this example, we chose Europe. Then press F2.

Figure 5. Time Zone: Regions screen

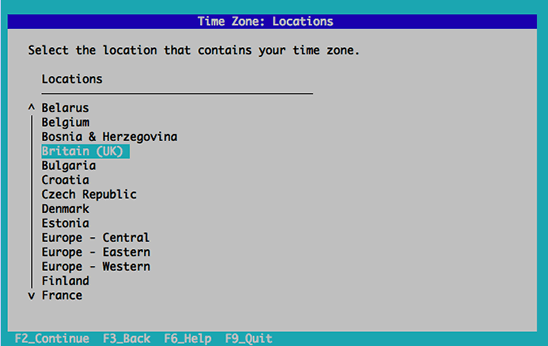

In the Time Zone: Locations screen (shown in Figure 6), choose the time zone location appropriate for your location, and then press F2.

Figure 6. Time Zone: Locations screen

In the Time Zone screen (shown in Figure 7), choose the time zone appropriate for your location, and then press F2.

Figure 7. Time Zone screen

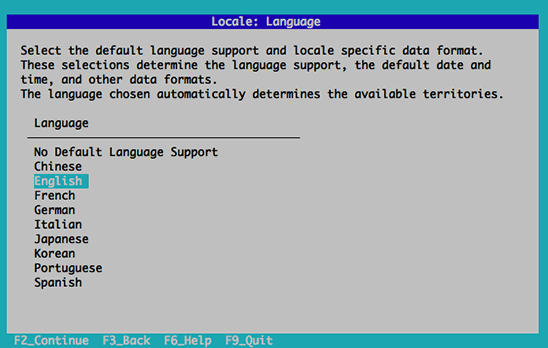

In the Locale: Language screen (shown in Figure 8), choose the language appropriate for your location, and then press F2.

Figure 8. Locale: Language screen

In the Locale: Territory screen (shown in Figure 9), choose the language territory appropriate for your location, and then press F2.

Figure 9. Locale: Territory screen

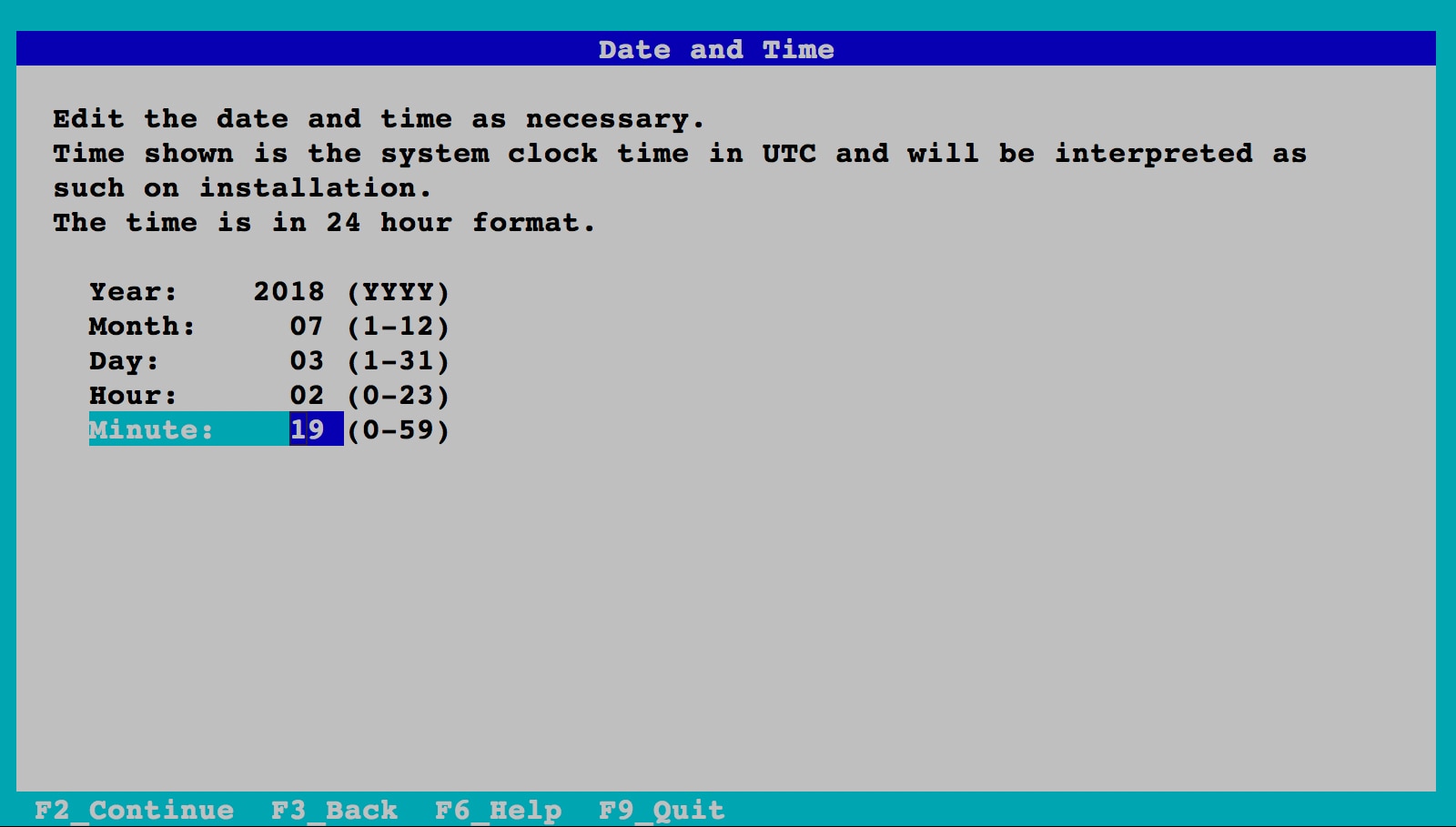

In the Date and Time screen (shown in Figure 10), set the date and time, and then press F2.

Figure 10. Date and Time screen

In the Keyboard screen (shown in Figure 11), select the appropriate keyboard, and then press F2.

Figure 11. Keyboard screen

In the Users screen (shown in Figure 12), choose a root password and enter information for a user account. Then press F2.

Figure 12. Users screen

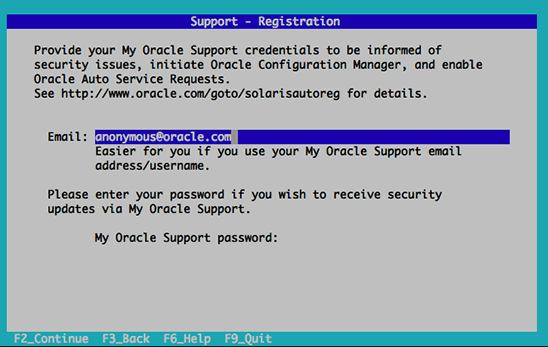

In the Support — Registration screen (shown in Figure 13), enter your My Oracle Support credentials. Then press F2.

Figure 13. Support — Registration screen

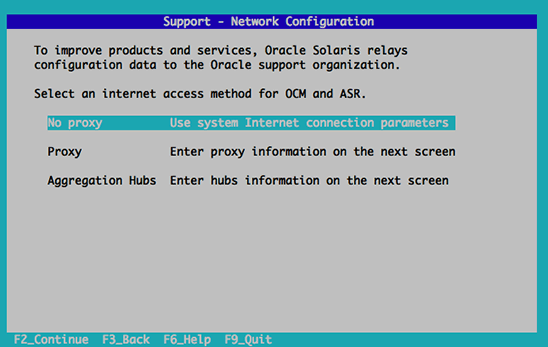

In the Support — Network Configuration screen (shown in Figure 14), choose how you will send configuration data to Oracle. Then press F2.

Figure 14. Support — Network Configuration screen

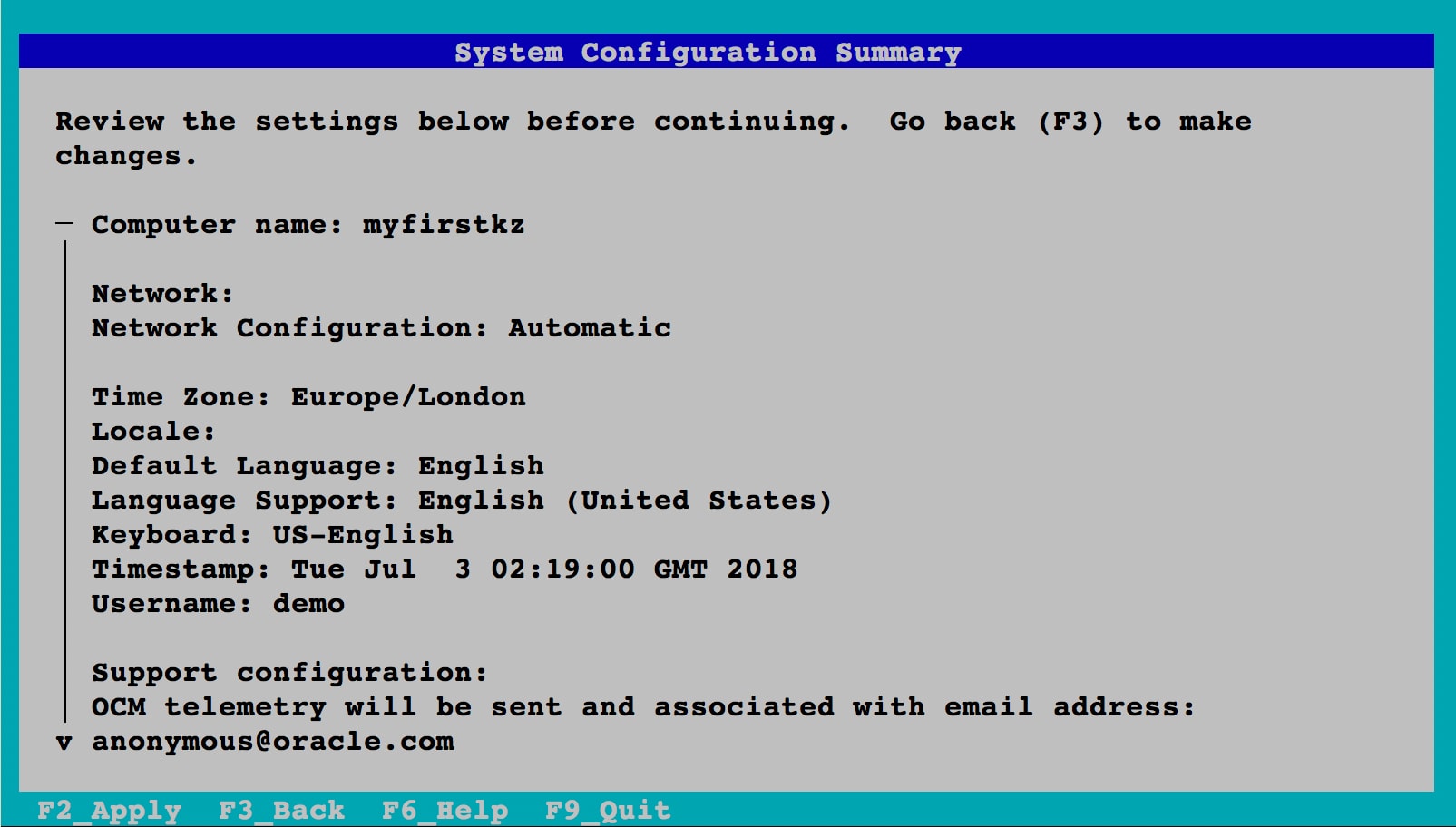

In the System Configuration Summary screen (shown in Figure 15), verify that the configuration you have chosen is correct and apply the settings by pressing F2.

Figure 15. System Configuration Summary screen

The zone will continue booting, and soon you will see the console login:

SC profile successfully generated as: /etc/svc/profile/sysconfig/sysconfig-20180703-021649/sc_profile.xml
Exiting System Configuration Tool. Log is available at: /system/volatile/sysconfig/sysconfig.log.310
Loading smf(5) service descriptions: 2/2
Hostname: myfirstkz
Jul 3 03:21:42 myfirstkz sendmail[1589]: My unqualified host name (myfirstkz) unknown; sleeping for retry
Jul 3 03:21:42 myfirstkz sendmail[1593]: My unqualified host name (myfirstkz) unknown; sleeping for retry
myfirstkz console login: ~.
[Connection to zone 'myfirstkz' console closed]

The zone is now ready to be logged in to. For this example, we will now exit the console using the "~." escape sequence.

You can check that your zone is booted and running using the zoneadm command:

root@global:~# zoneadm list -cv
  ID NAME       STATUS   PATH  BRAND       IP
   0 global     running  /     solaris     shared
   2 myfirstkz  running  -     solaris-kz  excl

As promised, a VNIC was automatically created for us when the zone was booted. We can verify this by using the dladm command shown in Listing 9:

root@global:~# dladm show-link
LINK             CLASS  MTU   STATE    OVER
net5             phys   1500  unknown  --
net3             phys   1500  unknown  --
net6             phys   1500  unknown  --
net7             phys   1500  unknown  --
net8             phys   1500  unknown  --
net0             phys   1500  unknown  --
net1             phys   1500  up       --
net9             phys   1500  unknown  --
net10            phys   1500  up       --
net4             phys   1500  unknown  --
net2             phys   1500  unknown  --
myfirstkz/net0   vnic   1500  up       net1

Listing 9

In Listing 9, we can see the VNIC is listed as myfirstkz/net0. Note that this is running over net1 in the global zone. This means that inside the kernel zone the VNIC appears as net0, while in the global zone it actually runs over the physical NIC net1.
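The mapping between a zone's VNIC and the physical link underneath it can be extracted from dladm show-link output with a few lines of code. The following Python sketch is an illustration under the assumption that zone VNICs are named zone/link, as in Listing 9; the sample is a shortened copy of that listing, and the function name is hypothetical.

```python
# Shortened copy of the dladm show-link output in Listing 9.
SAMPLE_LINKS = """\
LINK             CLASS  MTU   STATE    OVER
net1             phys   1500  up       --
net10            phys   1500  up       --
myfirstkz/net0   vnic   1500  up       net1
"""


def zone_vnics(dladm_output):
    """Return (zone, link_inside_zone, underlying_link) for each zone VNIC."""
    vnics = []
    for line in dladm_output.splitlines()[1:]:  # skip the header row
        fields = line.split()
        # Zone VNICs have class 'vnic' and a LINK of the form zone/link.
        if len(fields) == 5 and fields[1] == "vnic" and "/" in fields[0]:
            zone, link = fields[0].split("/", 1)
            vnics.append((zone, link, fields[4]))
    return vnics


print(zone_vnics(SAMPLE_LINKS))
```

For the sample, the result pairs the zone myfirstkz and its net0 link with net1, the physical link it runs over.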

Step 4: Log In to Your Kernel Zone

The last step is to log in to your zone and have a look. You can do this from the global zone using the zlogin command, as shown in Listing 10:

root@global:~# zlogin myfirstkz
[Connected to zone 'myfirstkz' pts/2]
Oracle Corporation SunOS 5.11 11.3 May 2018
root@myfirstkz:~# uname -a
SunOS myfirstkz 5.11 11.3 sun4v sparc sun4v
root@myfirstkz:~# ipadm
NAME         CLASS/TYPE  STATE  UNDER  ADDR
lo0          loopback    ok     --     --
   lo0/v4    static      ok     --     127.0.0.1/8
   lo0/v6    static      ok     --     ::1/128
net0         ip          ok     --     --
   net0/v4   dhcp        ok     --     10.134.79.210/24
   net0/v6   addrconf    ok     --     fe80::8:20ff:feda:d150/10
root@myfirstkz:~# dladm show-link
LINK   CLASS  MTU   STATE  OVER
net0   phys   1500  up     --
root@myfirstkz:~# zfs list
NAME                              USED   AVAIL  REFER  MOUNTPOINT
rpool                             4.68G  10.9G  73.5K  /rpool
rpool/ROOT                        2.68G  10.9G    31K  legacy
rpool/ROOT/solaris-2              2.68G  10.9G  2.35G  /
rpool/ROOT/solaris-2/var           335M  10.9G   334M  /var
rpool/VARSHARE                    2.65M  10.9G  2.55M  /var/share
rpool/VARSHARE/pkg                  63K  10.9G    32K  /var/share/pkg
rpool/VARSHARE/pkg/repositories     31K  10.9G    31K  /var/share/pkg/repositories
rpool/VARSHARE/zones                31K  10.9G    31K  /system/zones
rpool/dump                        1.00G  10.9G  1.00G  -
rpool/export                      97.5K  10.9G    32K  /export
rpool/export/home                 65.5K  10.9G    32K  /export/home
rpool/export/home/demo            33.5K  10.9G  33.5K  /export/home/demo
rpool/swap                        1.00G  10.9G  1.00G  -
root@myfirstkz:~# zonename
global
root@myfirstkz:~# exit
logout
[Connection to zone 'myfirstkz' pts/2 closed]

Listing 10

Note: In Listing 10, we did not use the -C option for the zlogin command, which means we are not accessing the zone via its console. This is why we can simply exit the shell at the end to leave the zone.

Let's look at the output shown in Listing 10 to see what we have:

- The output of the uname command shows that we are running on Oracle Solaris 11.3, the same kernel version used in the global zone in which our myfirstkz kernel zone is running.

- The output of the ipadm command shows the IP address for myfirstkz. There are four entries: two loopback devices (IPv4 and IPv6), our IPv4 net0 device with an IP address of 10.134.79.210 and, finally, an IPv6 net0 device.

- The output of the dladm command shows our automatically created net0 VNIC.

- The output of the zfs list command shows our ZFS dataset.

- Finally, the output of the zonename command shows that our zone name is global. With native zones, this would be the actual zone name. However, a kernel zone actually runs a full kernel instance, so users running inside the kernel zone have their own instance of a global zone. This also means you can nest native zones inside this kernel zone.

If you want to determine the zone name from inside the kernel zone, you can use the virtinfo command:

root@global:~# zlogin myfirstkz
[Connected to zone 'myfirstkz' pts/2]
Last login: Tue Jul 3 03:36:00 2018 on kz/term
Oracle Corporation SunOS 5.11 11.3 May 2018
root@myfirstkz:~# virtinfo -c current get zonename
NAME         CLASS    PROPERTY  VALUE
kernel-zone  current  zonename  myfirstkz
root@myfirstkz:~# virtinfo
NAME             CLASS
kernel-zone      current
logical-domain   parent
non-global-zone  supported
root@myfirstkz:~# exit
logout
[Connection to zone 'myfirstkz' pts/2 closed]

Note: From within myfirstkz, we cannot see any information about the global zone; we can see only the attributes of our own zone.

You have now verified that myfirstkz is up and running. You can give the login information to your users to allow them to complete the setup of their team's kernel zone as if it were a single system.

Updating a Kernel Zone to a Later Oracle Solaris Release

One of the main features of kernel zones is the ability to run your kernel zone with a different kernel version from that of the host global zone.

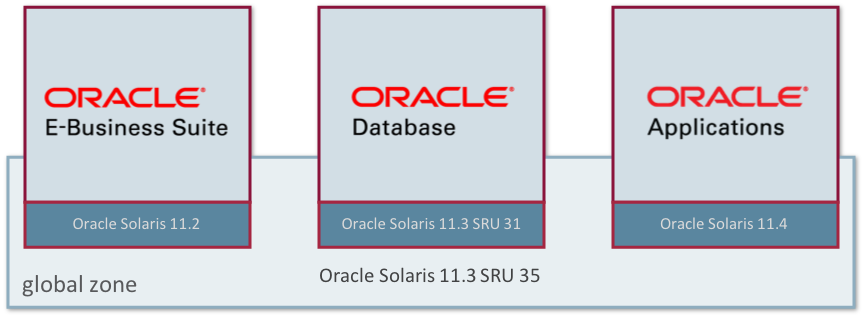

Starting with Oracle Solaris 11.2, kernel zones support both backward and forward compatibility. In practice, this means that you can have a kernel zone running Oracle Solaris 11.2 on a host running a later Oracle Solaris version, say Oracle Solaris 11.3, and you can also have a kernel zone running a later Oracle Solaris version, say Oracle Solaris 11.4, on a host running Oracle Solaris 11.3. The same holds for different patch levels. For example, you could be running Oracle Solaris 11.3 SRU 35 in the global zone and Oracle Solaris 11.3 SRU 31 in a kernel zone. You might do this because you want the kernel zone to follow the quarterly Critical Patch Update (CPU) in sync with the application patch level, while the global zone runs the latest SRU. Figure 16 illustrates this capability.

Figure 16. Example of forward and backward compatibility of kernel zones

Updating myfirstkz to a Later Oracle Solaris Release

Let's update our kernel zone to use a later Oracle Solaris release rather than the release running on the host (Oracle Solaris 11.3). This update could be a later SRU, or a newer update. In this case we'll update to Oracle Solaris 11.4. This illustrates how you could update kernel zones to a newer version of Oracle Solaris while the hosting global zone still runs the older update.

First, let's use the command shown in Listing 11 to look at what boot environments we have from the host global zone:

root@global:~# beadm list
BE               Flags  Mountpoint  Space    Policy  Created
--               -----  ----------  -----    ------  -------
initial-install  -      -           105.32M  static  2018-05-18 00:32
solaris          -      -           101.96M  static  2018-05-17 23:16
solaris-1        NR     /           8.42G    static  2018-07-02 18:05

Listing 11

In Listing 11, we could see a list of native zone boot environments, if there were any. However, we will not see kernel zone boot environments listed, because a kernel zone has its own boot disk.

Let's check what our current publisher is and point the kernel zone to an internal publisher that has a newer kernel. We start by logging in to myfirstkz, as shown in Listing 12:

root@global:~# zlogin myfirstkz
[Connected to zone 'myfirstkz' pts/2]
Last login: Tue Jul 3 03:41:54 2018 on kz/term
Oracle Corporation SunOS 5.11 11.3 May 2018
root@myfirstkz:~# pkg publisher
PUBLISHER   TYPE     STATUS   P   LOCATION
solaris     origin   online   F   http://pkg.oracle.com/solaris11/support/
root@myfirstkz:~# pkg set-publisher -G '*' -g http://internal-repo.acme.com/solaris11/support/ solaris
root@myfirstkz:~# pkg publisher
PUBLISHER   TYPE     STATUS   P   LOCATION
solaris     origin   online   F   http://internal-repo.acme.com/solaris11/support/

Listing 12

Note that in this example, we use an internally created repository. You will be able to reproduce this for yourself with the repository images of Oracle Solaris as they become available on My Oracle Support. In Listing 12, we can see that we are running Oracle Solaris 11.3, and that we have changed the origin of the solaris publisher from pkg.oracle.com to our internal copy of the support repository.

Before we update, let's look at what the kernel zone sees as its boot environment:

root@myfirstkz:~# beadm list
BE         Flags  Mountpoint  Space  Policy  Created
--         -----  ----------  -----  ------  -------
solaris-2  NR     /           3.39G  static  2018-07-03 03:10

Now, let's update our kernel zone, as shown in Listing 13:

root@myfirstkz:~# pkg update --accept

------------------------------------------------------------

Package: pkg://solaris/release/notices@11.4,5.11-11.4.0.0.1.9.0:20180618T184616Z

License: lic_OTN

PLEASE SCROLL DOWN AND READ ALL OF THE FOLLOWING TERMS AND CONDITIONS CAREFULLY

This Oracle Solaris product is pre-GA (beta) Oracle Solaris software and is

intended for information and test purposes only. It is not a commitment to

deliver any material, code, or functionality and it should not be relied upon

in making purchasing decisions. The development, release, and timing of any

features or functionality for Oracle's products remains at the sole discretion

of Oracle.

By entering "accept" or the equivalent, or by downloading, installing or using

this pre-GA Oracle Solaris software, you indicate your acceptance to comply

with the license terms set forth at

http://www.oracle.com/technetwork/licenses/solaris-ea-license-4255446.html

for this pre-GA Oracle Solaris software. If you are not willing to be bound by

the License Agreement, do not enter "accept" or the equivalent and do not

download or access this pre-GA Oracle Solaris software.

Packages to remove: 67

Packages to install: 174

Packages to update: 369

Mediators to change: 7

Create boot environment: Yes

Create backup boot environment: No

Release Notes:

NOTICE: Oracle Solaris Point-to-Point Protocol (PPP) will be removed

in a future Update of Oracle Solaris. The PPP packages are no longer

installed by default: they have been renamed to be 'legacy' packages.

Requirements to transition from one version of OpenLDAP to another.

_Only required if the system is being used as an OpenLDAP server._

Ordinarily a system is being used as an OpenLDAP server when SMF service

instance ldap/server is enabled and online.

For further information refer to instructions in

/usr/share/doc/release-notes/openldap-transition.txt

DOWNLOAD PKGS FILES XFER (MB) SPEED

Completed 610/610 47387/47387 457.9/457.9 6.0M/s

PHASE ITEMS

Removing old actions 38292/38292

Installing new actions 45641/45641

Updating modified actions 38668/38668

Updating package state database Done

Updating package cache 436/436

Updating image state Done

Creating fast lookup database Done

Updating package cache 1/1

A clone of solaris-2 exists and has been updated and activated.

On the next boot the Boot Environment solaris-3 will be

mounted on '/'. Reboot when ready to switch to this updated BE.

Updating package cache 1/1

The following unexpected or editable files and directories were

salvaged while executing the requested package operation; they

have been moved to the displayed location in the image:

var/sadm/servicetag/registry -> /tmp/tmpUXeZeg/var/pkg/lost+found/var/sadm/servicetag/registry-20180703T042911Z

etc/nwam/loc/NoNet -> /tmp/tmpUXeZeg/var/pkg/lost+found/etc/nwam/loc/NoNet-20180703T042911Z

etc/nwam/loc -> /tmp/tmpUXeZeg/var/pkg/lost+found/etc/nwam/loc-20180703T042911Z

---------------------------------------------------------------------------

NOTE: Please review release notes posted at:

https://support.oracle.com/rs?type=doc&id=2045311.1

---------------------------------------------------------------------------

Listing 13

In the command shown in Listing 13, we use the --accept option to automatically accept any licenses. The output shows that a new boot environment has been created. Let's list the boot environments, as shown in Listing 14:

root@myfirstkz:~# beadm list

BE Flags Mountpoint Space Policy Created

-- ----- ---------- ----- ------ -------

solaris-2 N / 15.87M static 2018-07-03 03:10

solaris-3 R - 6.98G static 2018-07-03 04:28

Listing 14

In Listing 14, the R flag next to the solaris-3 boot environment indicates that it will become the active boot environment after the next reboot. The N flag marks solaris-2 as the boot environment that is active now.
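The flag check can also be scripted. The following is a minimal sketch that reads the table layout shown in Listing 14 and picks out the boot environment carrying a given flag. The function name be_with_flag and the embedded sample text are illustrative assumptions; on a live system you would pipe the real beadm list output into the function instead.

```shell
#!/bin/sh
# Sample `beadm list` output, copied from Listing 14.
# Assumption: the first two lines are the header and its underline.
beadm_sample='BE        Flags Mountpoint Space  Policy Created
--        ----- ---------- -----  ------ -------
solaris-2 N     /          15.87M static 2018-07-03 03:10
solaris-3 R     -          6.98G  static 2018-07-03 04:28'

# be_with_flag FLAG: print the name of every BE whose Flags column
# contains FLAG (N = active now, R = active on reboot).
be_with_flag() {
    awk -v flag="$1" 'NR > 2 && index($2, flag) { print $1 }'
}

active_now=$(printf '%s\n' "$beadm_sample" | be_with_flag N)
active_on_reboot=$(printf '%s\n' "$beadm_sample" | be_with_flag R)

echo "active now:       $active_now"
echo "active on reboot: $active_on_reboot"
```

If the two names differ, a reboot is still pending before the update takes effect.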

Finally, let's reboot the zone.

root@myfirstkz:~# reboot

[Connection to zone 'myfirstkz' pts/2 closed]

We are now back in the host global zone, and we can use zoneadm to check the status of our kernel zone, as shown in Listing 15:

root@global:~# zoneadm list -cv

ID NAME STATUS PATH BRAND IP

0 global running / solaris shared

2 myfirstkz running - solaris-kz excl

Listing 15

As shown in Listing 15, our kernel zone has already rebooted and is running again.
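When this check needs to be repeated, for example in a script that waits for a zone to come back after a reboot, the STATUS column can be extracted from the same table. This is a sketch under the assumption that the output keeps the column order shown in Listing 15; the function name zone_state and the embedded sample are illustrative only.

```shell
#!/bin/sh
# Sample `zoneadm list -cv` output, copied from Listing 15.
zoneadm_sample='ID NAME      STATUS  PATH BRAND      IP
0  global    running /    solaris    shared
2  myfirstkz running -    solaris-kz excl'

# zone_state NAME: print the STATUS column for the named zone.
# Assumption: columns appear in the order ID NAME STATUS PATH BRAND IP.
zone_state() {
    awk -v zone="$1" 'NR > 1 && $2 == zone { print $3 }'
}

state=$(printf '%s\n' "$zoneadm_sample" | zone_state myfirstkz)
echo "myfirstkz is $state"

# On a live host the same function could be fed real output:
#   zoneadm list -cv | zone_state myfirstkz
```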

Let's log back in, as shown in Listing 16, and check what kernel version we are running:

root@global:~# zlogin myfirstkz

[Connected to zone 'myfirstkz' pts/2]

Oracle Corporation SunOS 5.11 Solaris_11/11.4/ON/production.build-11.4-26:2018-06-07 June 2018

Listing 16

Listing 16 shows that the kernel zone is now running a completely different kernel: Oracle Solaris 11.4.

Let's run the command shown in Listing 17 to take a final look at the boot environments before we leave this kernel zone:

root@myfirstkz:~# beadm list

BE Name Flags Mountpoint Space Policy Created

--------- ----- ---------- ------- ------ ----------------

solaris-2 - - 676.31M static 2018-07-03 03:10

solaris-3 NR / 4.60G static 2018-07-03 04:28

root@myfirstkz:~# exit

logout

[Connection to zone 'myfirstkz' pts/2 closed]

Listing 17

In Listing 17, we can see that we are running in the new boot environment.

Installing a Kernel Zone from an ISO Image

Sometimes you might prefer to install a kernel zone from an ISO image rather than do a direct installation. Kernel zones support this, and this section shows how to do it. We'll also show that this works for a completely different version of Oracle Solaris by using an ISO image for Oracle Solaris 11.4.

We will also use this opportunity to explore how to allocate some dedicated CPU resources to the kernel zone, as well as how to add some extra memory and increase the size of its boot disk.

Step 1: Configure Dedicated CPU Resources and More Memory

Let's create a new kernel zone similar to what we did before, but this time we will use the zonecfg command to add some dedicated CPU resources.

Let's start by checking how many CPU resources we have:

root@global:~# psrinfo -t

socket: 0

core: 0

cpus: 0-7

core: 1

cpus: 8-15

core: 2

cpus: 16-23

core: 3

cpus: 24-31

core: 4

cpus: 32-39

...

core: 28

cpus: 224-231

core: 29

cpus: 232-239

core: 30

cpus: 240-247

core: 31

cpus: 248-255

Now, let's create a new kernel zone called iso-kz and add eight dedicated CPUs to it. Note that we also need to increase the number of virtual CPUs assigned to the kernel zone so that it can fully use the extra resources:

root@global:~# zonecfg -z iso-kz create -t SYSsolaris-kz

root@global:~# zonecfg -z iso-kz

zonecfg:iso-kz> add dedicated-cpu

zonecfg:iso-kz:dedicated-cpu> set ncpus=8

zonecfg:iso-kz:dedicated-cpu> end

zonecfg:iso-kz> select virtual-cpu

zonecfg:iso-kz:virtual-cpu> set ncpus=8

zonecfg:iso-kz:virtual-cpu> end

zonecfg:iso-kz> verify

zonecfg:iso-kz> commit

zonecfg:iso-kz> exit

We can check that the zone creation and resource configuration worked by using the zonecfg command:

root@global:~# zonecfg -z iso-kz info dedicated-cpu

dedicated-cpu:

ncpus: 8

cpus not specified

cores not specified

sockets not specified

On a kernel zone you can set virtual CPUs and dedicated CPUs. The difference between the two types comes down to sharing.

- With a virtual CPU, the CPU resource is shared with the rest of the system and other zones, so it can be used elsewhere when the kernel zone is not busy. This is the default.

- With a dedicated CPU, the CPU resource is exclusive to the kernel zone and will never be used by anything other than that specific kernel zone. This means that the system sets these CPU resources aside only for that kernel zone.

You can set the number of virtual CPUs higher than the number of dedicated CPUs if you want to overcommit, but this is generally not advised. For more information about these topics, see the Oracle documentation.
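A quick way to spot an overcommitted configuration is to compare the two ncpus values. The sketch below reads the dedicated-cpu value from the zonecfg info output shown earlier and compares it with a virtual-cpu count supplied by hand. The function name ncpus_of and the sample text are illustrative assumptions, and the sketch assumes ncpus is a single integer rather than a range.

```shell
#!/bin/sh
# Output of `zonecfg -z iso-kz info dedicated-cpu`, as shown above.
dedicated_sample='dedicated-cpu:
        ncpus: 8
        cpus not specified
        cores not specified
        sockets not specified'

# ncpus_of: print the value of the first "ncpus:" line on stdin.
# Assumption: ncpus is a plain integer, not a range such as 2-4.
ncpus_of() {
    awk '$1 == "ncpus:" { print $2; exit }'
}

dedicated=$(printf '%s\n' "$dedicated_sample" | ncpus_of)
virtual=8   # the value set in the virtual-cpu resource above

if [ "$virtual" -gt "$dedicated" ]; then
    verdict="overcommitted"
else
    verdict="not overcommitted"
fi
echo "virtual=$virtual dedicated=$dedicated: $verdict"
```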

We can also use the zonecfg command to add some extra memory to the kernel zone:

root@global:~# zonecfg -z iso-kz

zonecfg:iso-kz> select capped-memory

zonecfg:iso-kz:capped-memory> set physical=8g

zonecfg:iso-kz:capped-memory> end

zonecfg:iso-kz> verify

zonecfg:iso-kz> commit

zonecfg:iso-kz> exit

root@global:~# zonecfg -z iso-kz info capped-memory

capped-memory:

physical: 8G

pagesize-policy: largest-available
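The interactive zonecfg sessions in this step can also be collected into a command file and applied in one go with the -f option of zonecfg. The following sketch writes such a file and prints it; it only calls zonecfg when the hypothetical APPLY variable is set to yes and the command is available. The temporary file and the APPLY switch are assumptions made for illustration, not part of the original procedure.

```shell
#!/bin/sh
# Collect the CPU and memory settings from this step in one command file.
cfg_file=$(mktemp)

cat > "$cfg_file" <<'EOF'
create -t SYSsolaris-kz
add dedicated-cpu
set ncpus=8
end
select virtual-cpu
set ncpus=8
end
select capped-memory
set physical=8g
end
verify
commit
EOF

# APPLY is a hypothetical safety switch: by default the file is only shown.
if [ "${APPLY:-no}" = yes ] && command -v zonecfg >/dev/null 2>&1; then
    zonecfg -z iso-kz -f "$cfg_file"
else
    cat "$cfg_file"
fi
```

Keeping the settings in a file makes it easier to review them before they are committed and to reuse them for further zones.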

Step 2: Install the Kernel Zone with a Bigger Disk

It's now time to install our zone. We will use the Oracle Solaris 11.4 ISO image to do this and we will also increase the size of the install disk. The default is a 16 GB disk, so let's increase that to 24 GB. Listing 18 shows how you do this at installation time:

root@global:~# zoneadm -z iso-kz install -b /root/sol-11_4-9-text-sparc.iso -x install-size=24g

Progress being logged to /var/log/zones/zoneadm.20180703T044712Z.iso-kz.install

[Connected to zone 'iso-kz' console]

...

[NOTICE: Zone rebooting]

[Connection to zone 'iso-kz' console closed]

Done: Installation completed in 1711.493 seconds.

Listing 18

In Listing 18, you can see from the image name that this time we installed from the text installer ISO.
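If the installation command is generated by a script, a small guard can catch a requested disk size that does not actually exceed the 16 GB default mentioned above. This is only a sketch: the function name install_cmd and the DEFAULT_GB variable are hypothetical, and the function prints the command from Listing 18 rather than running it.

```shell
#!/bin/sh
DEFAULT_GB=16   # default install disk size stated in this article

# install_cmd ZONE ISO SIZE_GB: print the zoneadm install command,
# refusing sizes that are not larger than the default.
install_cmd() {
    zone=$1
    iso=$2
    size_gb=$3
    if [ "$size_gb" -le "$DEFAULT_GB" ]; then
        echo "size ${size_gb}g does not exceed the ${DEFAULT_GB} GB default" >&2
        return 1
    fi
    printf 'zoneadm -z %s install -b %s -x install-size=%sg\n' \
        "$zone" "$iso" "$size_gb"
}

cmd=$(install_cmd iso-kz /root/sol-11_4-9-text-sparc.iso 24)
echo "$cmd"
```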

Once we have answered the usual installation questions, and have had a look at zonestat to check allocations, we can log in to our zone, as shown in Listing 19:

root@global:~# zoneadm list -cv

ID NAME STATUS PATH BRAND IP

0 global running / solaris shared

3 myfirstkz running - solaris-kz excl

5 iso-kz running - solaris-kz excl

root@dcsw-t52-1:~# zonestat 1 1

Collecting data for first interval...

Interval: 1, Duration: 0:00:01

SUMMARY Cpus/Online: 256/25 PhysMem: 255G VirtMem: 258G

----------CPU---------- --PhysMem-- --VirtMem-- --PhysNet--

ZONE USED %PART STLN %STLN USED %USED USED %USED PBYTE %PUSE

[total] 0.14 0.05% 0.00 0.00% 26.9G 10.5% 29.5G 11.4% 294 0.00%

[system] 0.00 0.00% - - 14.4G 5.65% 28.4G 10.9% - -

global 0.10 0.04% - - 473M 0.18% 840M 0.31% 294 0.00%

iso-kz 0.01 0.20% 0.00 0.00% 8213M 3.14% 12.7M 0.00% 0 0.00%

myfirstkz 0.01 0.00% 0.00 0.00% 4154M 1.59% 253M 0.09% 0 0.00%

root@dcsw-t52-1:~# zonestat -r psets 1 1

Collecting data for first interval...

Interval: 1, Duration: 0:00:01

PROCESSOR_SET TYPE ONLINE/CPUS MIN/MAX

pset_default default-pset 248/248 1/-

ZONE USED %USED STLN %STLN CAP %CAP SHRS %SHR %SHRU

[total] 0.85 0.34% 0.00 0.00% - - - - -

[system] 0.02 0.00% - - - - - - -

global 0.81 0.32% - - - - - - -

myfirstkz 0.01 0.00% 0.00 0.00% - - - - -

PROCESSOR_SET TYPE ONLINE/CPUS MIN/MAX

iso-kz dedicated-cpu 8/8 8/8

ZONE USED %USED STLN %STLN CAP %CAP SHRS %SHR %SHRU

[total] 0.01 0.21% 0.00 0.00% - - - - -

[system] 0.00 0.00% - - - - - - -

iso-kz 0.01 0.21% 0.00 0.00% - - - - -

root@global:~# zlogin iso-kz

[Connected to zone 'iso-kz' pts/2]

Last login: Tue Jul 3 08:20:26 2018 on kz/term

Oracle Corporation SunOS 5.11 Solaris_11/11.4/ON/production.build-11.4-26:2018-06-07 June 2018

root@solarisiso-kz:~# psrinfo -t

socket: 0

core: 0

cpus: 0-7

root@:~# exit

logout

[Connection to zone 'iso-kz' pts/2 closed]

Listing 19

In Listing 19, we can see the eight dedicated CPUs we assigned, and we can see that the kernel zone is running a different release from that of the host global zone.
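The CPU count can be confirmed without counting by eye. The sketch below sums the ranges on the cpus: lines of the psrinfo -t output seen inside the zone in Listing 19. The function name count_cpus is illustrative, and the parsing assumes each cpus: line holds either a single ID or one simple range such as 0-7.

```shell
#!/bin/sh
# `psrinfo -t` output from inside iso-kz, copied from Listing 19.
psrinfo_sample='socket: 0
  core: 0
    cpus: 0-7'

# count_cpus: add up the CPUs listed on "cpus:" lines.
# Assumption: each line holds one ID ("3") or one range ("0-7").
count_cpus() {
    awk '$1 == "cpus:" {
        n = split($2, r, "-")
        total += (n == 2) ? r[2] - r[1] + 1 : 1
    }
    END { print total + 0 }'
}

zone_cpus=$(printf '%s\n' "$psrinfo_sample" | count_cpus)
echo "CPUs visible in the zone: $zone_cpus"
```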

Before we move on, let's shut down our two kernel zones:

root@global:~# zoneadm -z myfirstkz shutdown

root@global:~# zoneadm -z iso-kz shutdown

Converting a Native Zone to a Kernel Zone

The final operation to try is converting a native zone to a kernel zone, which is made especially easy through the use of Oracle Solaris Unified Archives.

In this example, we will use a native zone that has already been created. If you are not sure how to create a native zone, see "How to Get Started Creating Oracle Solaris Zones in Oracle Solaris 11."

Let's start by having a look at the native zone (conveniently named native-zone), which we are going to convert, as shown in Listing 20:

root@global:~# zoneadm list -cv

ID NAME STATUS PATH BRAND IP

0 global running / solaris shared

8 native-zone running /system/zones/native-zone solaris excl

- myfirstkz installed - solaris-kz excl

- iso-kz installed - solaris-kz excl

root@global:~# zlogin native-zone

[Connected to zone 'native-zone' pts/3]

Oracle Corporation SunOS 5.11 11.3 May 2018

root@native-zone:~# touch my_special_files

root@native-zone:~# zonename

native-zone

root@native-zone:~# exit

logout

[Connection to zone 'native-zone' pts/3 closed]

root@global:~# zoneadm -z native-zone shutdown

root@global:~# zoneadm list -cv

ID NAME STATUS PATH BRAND IP

0 global running / solaris shared

- myfirstkz installed - solaris-kz excl

- iso-kz installed - solaris-kz excl

- native-zone installed /system/zones/native-zone solaris excl

Listing 20

In Listing 20, we can see that native-zone is already up and running. We logged in and created a file called my_special_files, which stands in for any configuration or data a zone taken from a real environment would carry. Finally, we checked the zone name, logged out, and shut down the native zone.

Note: One of the big advantages of using a Unified Archive to capture a zone is that you can do the capture on a running zone, which means you can avoid outages to end users. In this case, because we want to convert our native zone to a kernel zone (rather than clone the native zone), we shut down the native zone.

Now let's create a Unified Archive of our native zone:

root@global:~# archiveadm create -z native-zone ./native-zone-archive.uar

Initializing Unified Archive creation resources...

Unified Archive initialized: /root/native-zone-archive.uar

Logging to: /system/volatile/archive_log.13462

Executing dataset discovery...

Dataset discovery complete

Creating install media for zone(s)...

Media creation complete

Preparing archive system image...

Beginning archive stream creation...

Archive stream creation complete

Beginning final archive assembly...

Archive creation complete

Once we have created the archive, we can examine it to see what it contains:

root@global:~# ls -l

total 4340781

-rw-r--r-- 1 root root 1206958080 Jul 3 00:17 native-zone-archive.uar

-rwxr-xr-x 1 root root 4726 Jul 2 18:10 set_user_reserve.sh

-rw-r--r-- 1 root root 1013821440 Jun 18 13:08 sol-11_4-9-text-sparc.iso

root@global:~# archiveadm info -v ./native-zone-archive.uar

Archive Information

Creation Time: 2018-07-03T07:12:37Z

Source Host: dcsw-t52-1

Architecture: sparc

Operating System: Oracle Solaris 11.3 SPARC

Recovery Archive: No

Unique ID: 34af80ee-8759-44fe-b2c7-64edd4bc8e44

Archive Version: 1.0

Deployable Systems

'native-zone'

OS Version: 0.5.11

OS Branch: 0.175.3.33.0.4.0

Active BE: solaris

Brand: solaris

Size Needed: 1.9GB

Unique ID: e968e488-7a8a-4f1f-9b81-af57ccf5e7fe

AI Media: 0.175.3.33.0.5.0_ai_sparc.iso

Root-only: Yes
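Individual fields can be pulled out of this report when a script needs them, for example to confirm the brand of the archived zone or the space it requires before installing. The following sketch works on an excerpt of the output above; the function name archive_field is an illustrative assumption, and it returns only the first matching field.

```shell
#!/bin/sh
# Excerpt of the `archiveadm info -v` output shown above.
archive_info_sample='Archive Information
          Creation Time:  2018-07-03T07:12:37Z
            Source Host:  dcsw-t52-1
           Architecture:  sparc
Deployable Systems
          native-zone
             OS Version:  0.5.11
                  Brand:  solaris
            Size Needed:  1.9GB
              Root-only:  Yes'

# archive_field KEY: print the value of the first "KEY: value" line.
archive_field() {
    awk -v key="$1" '{
        line = $0
        sub(/^[ \t]+/, "", line)              # drop indentation
        p = index(line, ": ")                 # split at the first ": "
        if (p && substr(line, 1, p - 1) == key) {
            value = substr(line, p + 2)
            sub(/^[ \t]+/, "", value)         # drop padding before value
            print value
            exit
        }
    }'
}

brand=$(printf '%s\n' "$archive_info_sample" | archive_field "Brand")
size=$(printf '%s\n' "$archive_info_sample" | archive_field "Size Needed")
echo "archived zone brand: $brand, size needed: $size"
```

A brand of solaris here confirms that the archive holds a native zone, which is the starting point for the conversion that follows.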

Before we go any further we're going to use the sysconfig command to pre-create a sysconfig profile file. This will save us from having to run through the sysconfig process after the bits are installed. The result is a file called sc_profile.xml:

root@global:~# sysconfig create-profile -o /root

Next, let's configure a new kernel zone and when we are ready to install it, we will pass in the archive, as shown in Listing 21:

root@global:~# zonecfg -z converted-zone-kz create -t SYSsolaris-kz

root@global:~# zoneadm -z converted-zone-kz install -c /root/sc_profile.xml -a ./native-zone-archive.uar

Progress being logged to /var/log/zones/zoneadm.20180704T044420Z.converted-zone-kz.install

[Connected to zone 'converted-zone-kz' console]

NOTICE: Entering OpenBoot.

NOTICE: Fetching Guest MD from HV.

NOTICE: Starting additional cpus.

NOTICE: Initializing LDC services.

NOTICE: Probing PCI devices.

NOTICE: Finished PCI probing.

SPARC T5-2, No Keyboard

Copyright (c) 1998, 2015, Oracle and/or its affiliates. All rights reserved.

OpenBoot 4.38.1, 4.0000 GB memory available, Serial #1940989492.

Ethernet address 0:0:0:0:0:0, Host ID: 73b12634.

Boot device: disk1 File and args: - install aimanifest=/system/shared/ai.xml profile=/system/shared/sysconfig/

-

WARNING: Can not verify signature on unsupported binary format

WARNING: Signature verification of boot-archive bootblk failed

SunOS Release 5.11 Version 11.3 64-bit

Copyright (c) 1983, 2018, Oracle and/or its affiliates. All rights reserved.

Remounting root read/write

Probing for device nodes ...

Preparing image for use

Done mounting image

Configuring devices.

Hostname: solaris

Using specified install manifest : /system/shared/ai.xml

Using specified configuration profile(s): /system/shared/sysconfig/

solaris console login:

Automated Installation started

The progress of the Automated Installation will be output to the console

Detailed logging is in the logfile at /system/volatile/install_log

Press RETURN to get a login prompt at any time.

04:45:06 Install Log: /system/volatile/install_log

04:45:06 Using XML Manifest: /system/volatile/ai.xml

04:45:06 Using profile specification: /system/volatile/profile

04:45:06 Starting installation.

04:45:06 0% Preparing for Installation

04:45:06 100% manifest-parser completed.

04:45:06 100% None

04:45:06 0% Preparing for Installation

04:45:07 1% Preparing for Installation

04:45:07 2% Preparing for Installation

04:45:07 3% Preparing for Installation

04:45:07 4% Preparing for Installation

04:45:08 5% archive-1 completed.

04:45:08 6% install-env-configuration completed.

04:45:09 9% target-discovery completed.

04:45:10 Pre-validating manifest targets before actual target selection

04:45:10 Selected Disk(s) : c1d0

04:45:11 Pre-validation of manifest targets completed

04:45:11 Validating combined manifest and archive origin targets

04:45:11 Selected Disk(s) : c1d0

04:45:11 9% target-selection completed.

04:45:11 10% ai-configuration completed.

04:45:11 10% var-share-dataset completed.

04:45:16 10% target-instantiation completed.

04:45:16 10% Beginning archive transfer

04:45:16 Commencing transfer of stream: 60d8aed2-da6c-45d7-b8c2-d1b316da972b-0.zfs to rpool

04:45:24 24% Transferring contents

04:45:28 31% Transferring contents

04:45:35 51% Transferring contents

04:45:41 52% Transferring contents

04:45:45 63% Transferring contents

04:45:47 69% Transferring contents

04:45:49 73% Transferring contents

04:45:55 76% Transferring contents

04:45:59 Completed transfer of stream: '60d8aed2-da6c-45d7-b8c2-d1b316da972b-0.zfs' from file:///system/shared/uafs/OVA

04:46:03 Archive transfer completed

04:46:19 89% generated-transfer-962-1 completed.

04:46:19 89% Beginning IPS transfer

04:46:19 Setting post-install publishers to:

04:46:19 solaris

04:46:20 origin: http://10.134.6.21/solaris11/support/

04:46:20 89% generated-transfer-962-2 completed.

04:46:20 Changing target pkg variant. This operation may take a while

04:52:27 90% apply-pkg-variant completed.

04:52:28 Setting boot devices in firmware

04:52:28 Setting openprom boot-device

04:52:28 91% boot-configuration completed.

04:52:28 91% update-dump-adm completed.

04:52:28 92% setup-swap completed.

04:52:29 92% device-config completed.

04:52:31 92% apply-sysconfig completed.

04:52:31 92% transfer-zpool-cache completed.

04:52:36 98% boot-archive completed.

04:52:41 98% update-filesystem-owner-group completed.

04:52:41 98% transfer-ai-files completed.

04:52:42 98% cleanup-archive-install completed.

04:52:42 100% create-snapshot completed.

04:52:43 100% None

04:52:43 Automated Installation succeeded.

04:52:43 You may wish to reboot the system at this time.

Automated Installation finished successfully

The system can be rebooted now

Please refer to the /system/volatile/install_log file for details

After reboot it will be located at /var/log/install/install_log

[NOTICE: Zone halted]

[Connection to zone 'converted-zone-kz' console closed]

Done: Installation completed in 495.842 seconds.

root@global:~# zoneadm list -cv

ID NAME STATUS PATH BRAND IP

0 global running / solaris shared

- myfirstkz installed - solaris-kz excl

- iso-kz installed - solaris-kz excl

- native-zone installed /system/zones/native-zone solaris excl

- converted-zone-kz installed - solaris-kz excl

Listing 21

As we can see in Listing 21, the install process completed successfully and we have an installed kernel zone.
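The conversion steps used in this section can be gathered into one script. The sketch below strings together the commands from Listing 20, the archive creation, and Listing 21, followed by a boot of the new zone. By default it runs in a dry-run mode that only prints each command. The helper names run and convert_zone and the DRY_RUN variable are hypothetical, and the sketch assumes the sysconfig profile already exists at the given path.

```shell
#!/bin/sh
# DRY_RUN=yes (the default here) prints the commands instead of running them.
DRY_RUN=${DRY_RUN:-yes}

run() {
    if [ "$DRY_RUN" = yes ]; then
        echo "+ $*"
    else
        "$@"
    fi
}

# convert_zone SRC DST ARCHIVE PROFILE
# Stops at the first failing step when not in dry-run mode.
convert_zone() {
    src=$1
    dst=$2
    archive=$3
    profile=$4
    run zoneadm -z "$src" shutdown &&
    run archiveadm create -z "$src" "$archive" &&
    run zonecfg -z "$dst" create -t SYSsolaris-kz &&
    run zoneadm -z "$dst" install -c "$profile" -a "$archive" &&
    run zoneadm -z "$dst" boot
}

plan=$(convert_zone native-zone converted-zone-kz \
    ./native-zone-archive.uar /root/sc_profile.xml)
echo "$plan"
```

Reviewing the printed plan before setting DRY_RUN=no is a cheap way to catch a wrong zone name or archive path.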

Let's boot up our newly converted zone and have a look at it, as shown in Listing 22:

root@global:~# zoneadm -z converted-zone-kz boot

root@global:~# zoneadm list -cv

ID NAME STATUS PATH BRAND IP

0 global running / solaris shared

27 converted-zone-kz running - solaris-kz excl

- myfirstkz installed - solaris-kz excl

- iso-kz installed - solaris-kz excl

- native-zone installed /system/zones/native-zone solaris excl

root@global:~# zlogin converted-zone-kz

[Connected to zone 'converted-zone-kz' pts/2]

Oracle Corporation SunOS 5.11 11.3 May 2018

root@unknown:~# ls

my_special_files

Listing 22

In Listing 22, we can see that the contents of our native zone have been preserved.

Conclusion

In this article, we explored how to create, install, boot, and configure a kernel zone. We saw that Oracle Solaris Kernel Zones can run kernel versions that are different from the kernel version running on the host. We also saw how to do a direct installation and an installation based on an ISO image. Finally, we saw how to convert a native zone to a kernel zone using Unified Archives.

See Also

- Creating and Using Oracle Solaris Kernel Zones

- "How to Get Started Creating Oracle Solaris Zones in Oracle Solaris 11"

- "How to Consolidate Zones Storage on an Oracle ZFS Storage Appliance"

- Using Unified Archives for System Recovery and Cloning in Oracle Solaris 11.4

- Resource Management, Oracle Solaris Zones Developer's Guide

Also see these additional resources:

- Download Oracle Solaris 11

- Access Oracle Solaris 11 product documentation

- Access all Oracle Solaris 11 how-to articles

- Learn more with Oracle Solaris 11 training and support

- See the official Oracle Solaris blog

- Follow Oracle Solaris on Facebook and Twitter

About the Author

Duncan Hardie is an Oracle Solaris Product Manager with responsibility for Oracle Solaris cloud and virtualization technologies. Joining Oracle as part of the Sun Microsystems acquisition, he started in engineering working on device drivers for fault-tolerant products and moved into customer-facing roles related to monitoring, high-performance computing, and virtualization. In his current role, Duncan works to help define, deliver, and position Oracle Solaris offerings.

| Revision 1.2, 07/05/2014 |

| Revision 1.1, 12/10/2014 |

| Revision 1.0, 07/28/2014 |