Setting Up, Configuring, and Using an Oracle WebLogic Server Cluster

by Yuli Vasiliev - Published October 2012

Learn to take advantage of multiple Oracle WebLogic Server instances grouped into a cluster to maximize scalability and availability.

The key characteristics of a successful online system today include high availability, reliability, and scalability. Put simply, your online system must be ready to process a user request quickly and at any time, even when the number of requests grows abruptly. Strictly speaking, a growing number of user requests is a good thing, because that makes it clear that your online business is booming and opening up new opportunities for you. The ability to act on opportunities is a key characteristic of many successful businesses today.

To meet the requirements for greater availability and scalability, more and more companies are moving toward clustered infrastructures for their online solutions. Oracle WebLogic Server supports clustering: an Oracle WebLogic Server cluster is composed of multiple Oracle WebLogic Server instances running on different servers at the same time, so that applications deployed to the cluster can take advantage of the combined processing power of multiple servers, failover, and load balancing.

To end users and applications, multiple Oracle WebLogic Server instances grouped into a cluster appear as a single Oracle WebLogic Server instance. But beneath the surface, the cluster uses an infrastructure that enables interconnecting clustered Oracle WebLogic Server instances to make them work as a single unit. It's important to realize, though, that setting up and using that infrastructure involves some configuration work on the part of a cluster administrator.

Key Benefits of Clustering

The idea behind clustering is pretty simple: Multiple server instances are grouped to work together to achieve increased scalability and reliability. In particular, the benefits of an Oracle WebLogic Server cluster include the following:

- High availability—You can deploy your applications on multiple server instances within an Oracle WebLogic Server cluster. In this case, if a server instance that is running your application fails, application processing will continue (transparently to the application users) on another instance.

- Scalability—New server instances can be dynamically added to a cluster to match consumer demand, without interrupting application processing.

- Improved performance—Another advantage to deploying on multiple server instances in a cluster is that it provides several alternatives for application processing. The load balancing mechanism that clustering enables can significantly improve performance of your clustered application due to the efficient distribution of jobs and communications across the resources within the cluster.

To provide the benefits above, an Oracle WebLogic Server cluster supports the following features:

- Load balancing

- Application failover

- Migration of a server instance

To grasp how things work in an Oracle WebLogic Server cluster, however, you first need to get at least a cursory understanding of how server instances interact with one another in a cluster and what mechanisms enable the deployment of an application on multiple server instances. The next section covers these topics, providing a brief overview of the Oracle WebLogic Server cluster technology.

How Clustering Works

It's fairly obvious that Oracle WebLogic Server instances have to communicate with one another in order to appear to clients as a single Oracle WebLogic Server instance. Server instances in an Oracle WebLogic Server cluster communicate using the following network technologies:

- IP multicast or IP unicast for one-to-many communications in the cluster, such as broadcasting heartbeat messages and cluster-wide JNDI updates

- IP sockets for peer-to-peer communications, such as replicating object states between a primary and secondary server instance

When configuring your cluster, you can select either IP multicast or IP unicast as the cluster messaging mode for one-to-many communication. The default mode depends on the tool you use to create the cluster: with the Configuration Wizard, the default is unicast, whereas with the WebLogic Scripting Tool (WLST), multicast is the default. For details on the pros and cons of each messaging mode, consult Using Clusters for Oracle WebLogic Server. Note, however, that Oracle recommends unicast messaging when creating new clusters.

As mentioned earlier, IP multicast or unicast is used to, among other things, maintain the cluster-wide JNDI tree, which plays a key role in application deployment. In particular, the JNDI tree used in a clustered environment holds information about the cluster-wide deployed objects and appears to clients as a single, global tree. However, the cluster-wide JNDI tree is replicated across Oracle WebLogic Server instances within a cluster using the cluster-wide JNDI updates mechanism. Thus, each server instance in a cluster maintains its local copy of the cluster-wide JNDI tree, updating that local JNDI tree as the information about new clustered application components comes in through the multicast (or unicast) channel.

Figure 1 provides a graphical depiction of how JNDI is used when a clustered object is deployed.

Figure 1. The process of deployment on multiple server instances in an Oracle WebLogic Server cluster.

The process of deploying a clustered object shown in Figure 1 can be explained as follows. First, the clustered object's implementation is bound into the local JNDI tree of a single server instance. That instance then offers the object to the other server instances in the cluster by sending broadcast messages to them, causing them to update their local JNDI trees accordingly.
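The bind-then-announce sequence just described can be pictured with a small Python model. This is only a sketch of the idea: the `ServerInstance` class, the server names, and the JNDI name `ejb/OrderService` are hypothetical and are not part of any WebLogic API.

```python
class ServerInstance:
    """Toy stand-in for a clustered server instance (not a WebLogic class)."""

    def __init__(self, name):
        self.name = name
        self.local_jndi = {}  # JNDI name -> set of servers offering the object
        self.peers = []       # the other members of the cluster

    def bind(self, jndi_name):
        # Step 1: bind the object into this server's local JNDI tree.
        self._record(jndi_name, self.name)
        # Step 2: announce the binding to every other cluster member.
        for peer in self.peers:
            peer.on_announcement(jndi_name, self.name)

    def on_announcement(self, jndi_name, origin):
        # A peer updates its own local copy of the cluster-wide tree.
        self._record(jndi_name, origin)

    def _record(self, jndi_name, server):
        self.local_jndi.setdefault(jndi_name, set()).add(server)


def make_cluster(names):
    """Create the servers and make each one aware of all the others."""
    servers = [ServerInstance(n) for n in names]
    for server in servers:
        server.peers = [p for p in servers if p is not server]
    return servers


cluster = make_cluster(["ms1", "ms2", "ms3"])
cluster[0].bind("ejb/OrderService")
cluster[1].bind("ejb/OrderService")
```

After both `bind` calls, every server's `local_jndi` holds the same entry, listing `ms1` and `ms2` as providers, which is the sense in which the tree appears global to clients.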

Of course, JNDI is not the only thing worth mentioning when it comes to application deployment on multiple server instances in a cluster. Continuing with this topic, it would be interesting to look at how you might configure the rules for the deployment process and identify the requirements for the availability of server instances in the cluster to which you're deploying your application.

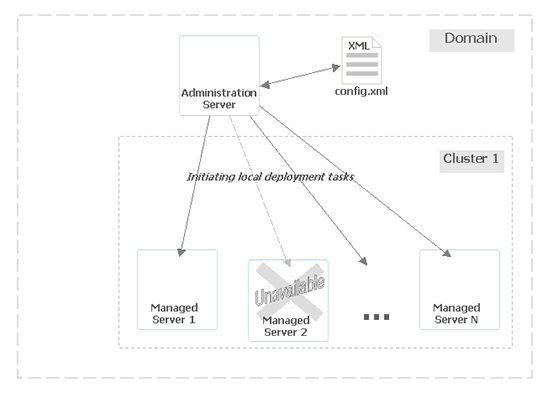

It's important to realize that the deployment process is managed by the domain's Administration Server, which, therefore, must be up and running. The Administration Server communicates with the managed servers during the entire process, making sure the deployment proceeds in accordance with the default deployment behavior as well as with the options specified in the domain's config.xml configuration file and the startup arguments used upon starting the Administration Server.

For example, when starting the domain, you might set the ClusterConstraintsEnabled option to change the default deployment behavior, enforcing strict deployment for all managed servers in a cluster. The default behavior does not require the availability of all managed servers in a cluster for the deployment process to complete successfully. This default scenario is shown in Figure 2.

Figure 2. The domain's Administration Server manages the process of deployment to managed servers in a cluster.
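The difference between the default behavior and strict deployment can be summarized as a simple decision rule. The Python sketch below is only a model of that rule; the function name, the result keys, and the server names are assumptions made for illustration.

```python
def plan_deployment(server_up, cluster_constraints_enabled=False):
    """Decide whether a cluster-wide deployment may proceed.

    server_up maps a managed server name to True (running) or False.
    """
    reachable = sorted(name for name, up in server_up.items() if up)
    unreachable = sorted(name for name, up in server_up.items() if not up)
    if cluster_constraints_enabled and unreachable:
        # Strict mode: every managed server in the cluster must be available.
        return {"accepted": False, "deploy_now": [], "unreachable": unreachable}
    # Default mode: unavailable servers do not block the deployment.
    return {"accepted": True, "deploy_now": reachable, "unreachable": unreachable}


states = {"ms1": True, "ms2": False, "ms3": True}
default_plan = plan_deployment(states)
strict_plan = plan_deployment(states, cluster_constraints_enabled=True)
```

With `ms2` down, the default plan is accepted and targets `ms1` and `ms3`, while the strict plan is rejected.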

For further details on how applications are deployed to an Oracle WebLogic Server cluster, refer to Using Clusters for Oracle WebLogic Server.

Once you have your clustered application deployed, failover and load balancing become available for the objects comprising it. It's important to note, though, that these mechanisms are handled differently for different types of objects. For example, load balancing and failover for Enterprise JavaBeans (EJB) and remote method invocation (RMI) objects are based on the replica-aware stub technology, while these same mechanisms for Servlets and JavaServer Pages are based on replicating the HTTP session state of clients within a cluster using either an Oracle WebLogic Server proxy plug-in or external load balancing hardware.

Figure 3 illustrates using replica-aware stubs to locate instances of an EJB object deployed to the cluster.

As you can see in Figure 3, a clustered EJB object is deployed to all server instances in the cluster, thus making available several instances of the object, which are also known as replicas. When a client accesses the object, it receives a replica-aware stub instead of a direct reference to a single replica. The stub contains all the information needed to locate the EJB class on any server instance in the cluster, as well as the load balancing algorithm that allows the client to choose between replicas on each call.

An Oracle WebLogic Server cluster also supports failover. The idea behind failover is pretty simple: If a server instance on which an application is running fails, another server instance within the cluster continues application processing. Turning back to the example in Figure 3 of a clustered EJB object, the replica-aware stub technology enables failover. Since a client accesses a clustered service through a replica-aware stub, rather than by making a direct call, the client will be redirected to another replica if the stub detects the failure of the original replica.
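To make the stub's two roles concrete, here is a minimal Python model that combines round-robin load balancing with failover. It is a sketch under simplifying assumptions: the class names are hypothetical, round-robin is just one possible algorithm, and the model ignores the question of whether a failed call is safe to retry.

```python
class ReplicaUnavailable(Exception):
    """Raised when a replica (or every replica) cannot serve a call."""


class Replica:
    """Toy replica of a clustered object hosted on one server."""

    def __init__(self, server, alive=True):
        self.server = server
        self.alive = alive

    def invoke(self, request):
        if not self.alive:
            raise ReplicaUnavailable(self.server)
        return f"{self.server} handled {request}"


class ReplicaAwareStub:
    """Chooses a replica per call and moves on when one has failed."""

    def __init__(self, replicas):
        self._replicas = list(replicas)
        self._next = 0  # round-robin position

    def invoke(self, request):
        # Try each replica at most once, starting at the round-robin position.
        for _ in range(len(self._replicas)):
            replica = self._replicas[self._next]
            self._next = (self._next + 1) % len(self._replicas)
            try:
                return replica.invoke(request)
            except ReplicaUnavailable:
                continue  # failover: try the next replica
        raise ReplicaUnavailable("no replica could serve the request")


replicas = [Replica("ms1"), Replica("ms2"), Replica("ms3")]
stub = ReplicaAwareStub(replicas)
first = stub.invoke("r1")
second = stub.invoke("r2")
replicas[2].alive = False   # ms3 fails before the third call
third = stub.invoke("r3")
```

The first two calls land on `ms1` and `ms2` in turn. The third call would have gone to `ms3`, but because that replica is down the stub transparently hands it to `ms1`.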

Setting Up an Oracle WebLogic Server Cluster

Clustered or not, Oracle WebLogic Server instances are grouped into a domain or domains. So, a cluster is always part of a domain. It's interesting to note that a domain can include more than one cluster as well as non-clustered Oracle WebLogic Server instances. On the other hand, all server instances within a particular cluster must belong to the same domain. If you have more than one cluster in a domain, each cluster will have the same Administration Server, because a domain must always have one server instance acting as the Administration Server.

To set up a cluster, you can use the same tools you normally use to set up a domain. Actually, you can choose between several tools when it comes to creating an Oracle WebLogic Server domain and an Oracle WebLogic Server cluster. The simplest and recommended one is the Configuration Wizard.

Below are the general steps to carry out when creating a new Oracle WebLogic Server cluster with the Configuration Wizard. This procedure assumes you have already installed the exact same installation package of Oracle WebLogic Server software on each machine where you want to create clustered instances. On one of those machines, launch the Configuration Wizard by running the WL_HOME/common/bin/config.sh script, and then perform the following steps:

- On the first screen of the wizard, select Create a new WebLogic domain.

- Walk through the next four screens of the wizard, setting options if necessary.

- On the Select Optional Configuration screen, select the Administration Server and the Managed Servers, Clusters and Machines options.

- On the Configure the Administration Server screen, specify the configuration details for the Administration Server.

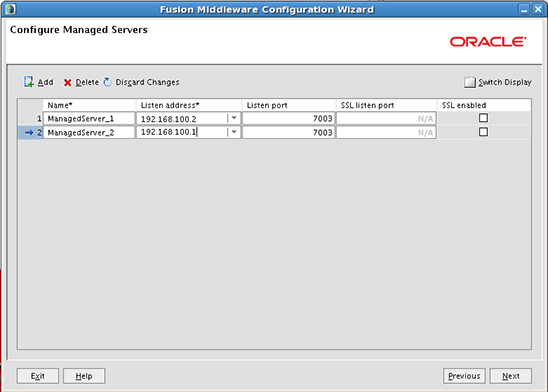

- On the Configure Managed Servers screen, add managed servers and specify the settings for them. Make sure you specify the actual IP address of the physical machine where you want to host a managed server that is being added.

The screen might look like Figure 4.

- On the Configure Clusters screen, add a new cluster and then configure the settings for it.

In particular, you'll need to set the cluster address. To do that, you can specify a comma-separated list of IP addresses (or DNS names) and ports for the managed servers you added in the previous step. If you've changed unicast to multicast, you'll also need to provide the multicast address and port for the cluster.

- On the Assign Servers to Clusters screen, move the managed servers in the left pane to the right pane, thus assigning them to the cluster.

- On the Configure Machines screen, add the machines that will host the managed servers.

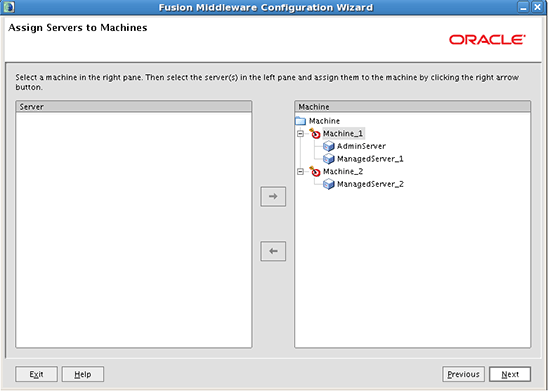

- On the Assign Servers to Machines screen, assign the Administration Server and managed servers in the left pane to the appropriate machines in the right pane, as illustrated in Figure 5.

- On the Configuration Summary screen, look through the summary of the settings chosen in the wizard and then, if everything is OK, click Create to start the process of domain creation.
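The cluster address entered on the Configure Clusters screen is simply a comma-separated list of host and port pairs. As a small illustration, the Python helper below assembles such a string; the function name, IP addresses, and port numbers are made up for the example.

```python
def cluster_address(members):
    """Build a cluster address from (host, port) pairs.

    The result is a comma-separated list of host:port entries.
    """
    parts = []
    for host, port in members:
        port = int(port)
        if not 0 < port < 65536:
            raise ValueError(f"invalid port for {host}: {port}")
        parts.append(f"{host}:{port}")
    return ",".join(parts)


# Hypothetical managed servers; substitute your own addresses and ports.
address = cluster_address([("192.168.1.11", 7003), ("192.168.1.12", 7003)])
```

Here `address` evaluates to `192.168.1.11:7003,192.168.1.12:7003`.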

At this point, you have created a clustered Oracle WebLogic Server domain configuration on one of the machines to be used in the cluster. The next step is to create a managed server domain directory on the other machine for the managed server that will run on that machine. To do this, you can create a managed server template containing the files of the domain that are needed to create a managed server domain directory on a remote machine. Then, you can unpack this template on the other machine to create the managed server domain directory structure on it.

These tasks can be accomplished with the Oracle WebLogic Server command-line utilities pack and unpack, respectively. So, navigate to the WL_HOME/common/bin directory on the machine where you set up the domain, and issue the following command:

pack.sh -managed=true -domain=/home/oracle/Oracle/Middleware/user_projects/domains/clustered_domain -template=/home/oracle/Oracle/temp/clustered_domain.jar -template_name=clustered_domain

Your actual paths might differ from those above, of course. The output of the command above should look like this:

<< read domain from "/home/oracle/Oracle/Middleware/user_projects/domains/clustered_domain"

>> succeed: read domain from "/home/oracle/Oracle/Middleware/user_projects/domains/clustered_domain"

<< set config option Managed to "true"

>> succeed: set config option Managed to "true"

<< write template to "/home/oracle/Oracle/temp/clustered_domain.jar"

......................................................................

>> succeed: write template to "/home/oracle/Oracle/temp/clustered_domain.jar"

<< close template

>> succeed: close template
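If you run pack or unpack from a script, you may want to confirm that every step reported success. The Python sketch below relies only on the line format visible in the sample output above (a `<<` line announcing a step and a `>> succeed:` line confirming it); how the utilities report failures is not covered here, and the function name is hypothetical.

```python
def unfinished_steps(output):
    """Return announced steps ("<< ...") that lack a ">> succeed: ..." line."""
    started, succeeded = [], set()
    for line in output.splitlines():
        line = line.strip()
        if line.startswith("<< "):
            started.append(line[len("<< "):])
        elif line.startswith(">> succeed: "):
            succeeded.add(line[len(">> succeed: "):])
    return [step for step in started if step not in succeeded]


sample = '''<< read domain from "/home/oracle/Oracle/Middleware/user_projects/domains/clustered_domain"
>> succeed: read domain from "/home/oracle/Oracle/Middleware/user_projects/domains/clustered_domain"
<< set config option Managed to "true"
>> succeed: set config option Managed to "true"
<< write template to "/home/oracle/Oracle/temp/clustered_domain.jar"
......................................................................
>> succeed: write template to "/home/oracle/Oracle/temp/clustered_domain.jar"
<< close template
>> succeed: close template'''

missing = unfinished_steps(sample)
```

For the sample output, `missing` is an empty list. If the log stopped before the final confirmation, the list would contain `close template`.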

Now you can move the clustered_domain.jar template file that was just generated to the other machine, and then unpack it there. To unpack, navigate to the WL_HOME/common/bin directory on that other machine and issue the following command:

unpack.sh -template=/home/oracle/Oracle/temp/clustered_domain.jar -domain=/home/oracle/Oracle/Middleware/user_projects/domains/clustered_domain

If everything is OK, you should see the following output:

>> succeed: read template from "/home/oracle/Oracle/temp/clustered_domain.jar"

<< set config option DomainName to "clustered_domain"

>> succeed: set config option DomainName to "clustered_domain"

<< write Domain to "/home/oracle/Oracle/Middleware/user_projects/domains/clustered_domain"

.......................................................................

>> succeed: write Domain to "/home/oracle/Oracle/Middleware/user_projects/domains/clustered_domain"

<< close template

>> succeed: close template

If your cluster spans multiple machines, you'll need to unpack the template on each machine.

Starting a Cluster

Now that you have an Oracle WebLogic Server cluster configured and ready to go, you can start it. To do this, you need to start each server instance included in the cluster. After you start the Administration Server, you'll be able to start the managed servers. Oracle WebLogic Server provides several alternatives for doing that. The simplest one is probably to use the Administration Console. Start the Administration Server with the startWebLogic.sh script located in the root directory of your domain, and then proceed with the following steps:

- To access the Administration Console, point your browser to the following address:

http://your_domain_host_IP_address:7001/console

You'll be prompted to enter the username and password used to start the Administration Server (you provided them during the Oracle WebLogic Server software installation).

- On each machine hosting a managed server, navigate to the WL_HOME/server/bin directory and launch the startNodeManager.sh script to start the node manager.

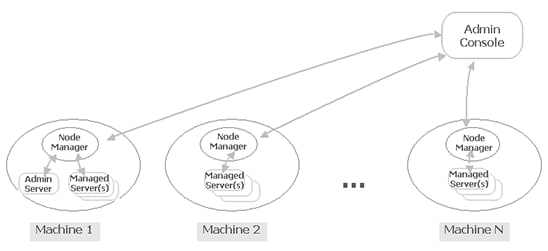

A node manager process makes it possible to start and control server instances on the machine where it's running from a remote location (from within the Administration Console, in our example). Diagrammatically, this might look like Figure 6:

- Before going any further, make sure that the node managers you just started are reachable. To do that, in the Administration Console, check the Your_Domain->Environment->Machines->Machine_->Monitoring page for each machine.

If the status of the node manager is not reachable, look at the Problem description field on the Monitoring page to get some clue as to what is causing the problem. The most common problems at this stage are caused by firewall or SSL communication. So, make sure that communication between the hosts comprising your cluster is not blocked by the firewall.

To avoid SSL communication problems, you may use a plain connection to interact with the node manager, which is recommended for testing and development purposes. To do this, you need to edit the WL_HOME/common/nodemanager/nodemanager.properties file, setting the SecureListener property to false. Also be sure to edit the node manager settings in the Administration Console, setting the Type field to Plain in the Machine_->Node Manager tab.

- Once the status of the node manager for each machine is reachable, you can start the managed servers. In the Administration Console, move to the Your_Domain->Environment->Servers->Control page. Select all the managed servers in the Servers table and then click the Start button.

As a result, the startup tasks will be delegated to the corresponding node managers. It will take a while before you see the status of the managed servers change to RUNNING.
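If you switch the node manager to a plain connection as described in the steps above, the edit to nodemanager.properties amounts to changing one key. The following Python sketch shows one way to rewrite such a file's text; the helper name and the sample file content are illustrative assumptions, and the helper handles only the simple `key=value` form.

```python
def set_property(text, key, value):
    """Return properties text with key set to value, keeping other lines."""
    out, found = [], False
    for line in text.splitlines():
        stripped = line.strip()
        # Skip blank lines and comments when looking for the key.
        if stripped and not stripped.startswith(("#", "!")):
            name = stripped.split("=", 1)[0].strip()
            if name == key:
                out.append(f"{key}={value}")
                found = True
                continue
        out.append(line)
    if not found:
        out.append(f"{key}={value}")  # key was absent, so append it
    return "\n".join(out) + "\n"


# Illustrative file content; a real nodemanager.properties has more entries.
original = "ListenPort=5556\nSecureListener=true\n"
updated = set_property(original, "SecureListener", "false")
```

Only the `SecureListener` line changes; everything else in the text is passed through untouched. The node manager typically needs a restart to pick up the new value.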

Monitoring and Reconfiguring a Cluster

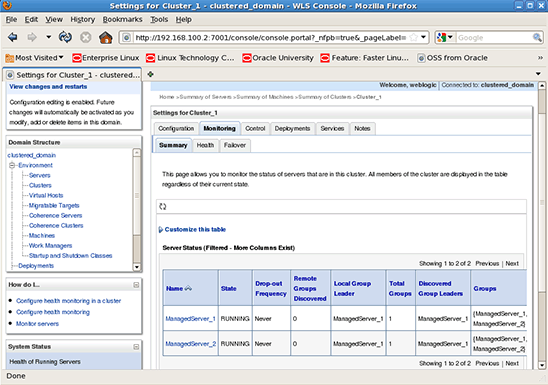

Both tasks can be accomplished from within the Administration Console. For example, you can monitor the status of the managed servers comprising the cluster from within the Your_Domain->Environment->Clusters->Your_Cluster->Monitoring page of the Administration Console. Figure 7 shows what this page might look like:

If you need to revise the settings of your cluster, you can do that from within the Your_Domain->Environment->Clusters->Your_Cluster->Configuration page of the console. This page contains several tabs to simplify the process of changing settings.

On the General tab shown in Figure 8, you can edit the general settings of the cluster, such as the cluster address and default load algorithm. By editing the latter, for example, you specify how to balance the load among instances within the cluster. It's interesting to note that you can override the default load algorithm for a specific object.

Figure 8. The Configuration page in the Administration Console, which allows you to redefine the current settings of a cluster.
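To get a feel for what a load algorithm choice means, consider a weight-based scheme, in which servers with a higher weight receive proportionally more calls. The Python sketch below is a deliberately crude model of that idea and not WebLogic's implementation; the function name, server names, and weights are hypothetical.

```python
import itertools


def weighted_cycle(weights):
    """Cycle through servers in proportion to their positive integer weights."""
    slots = [name for name, weight in weights.items() for _ in range(weight)]
    return itertools.cycle(slots)


picker = weighted_cycle({"ms1": 3, "ms2": 1})
picks = [next(picker) for _ in range(8)]
```

Over eight calls, `ms1` is chosen six times and `ms2` twice, matching the 3:1 weights. A plain round-robin scheme corresponds to giving every server the same weight.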

You can perform more cluster configuration on the other tabs of the Configuration page. For example, you can add managed servers to or remove managed servers from the cluster on the Servers tab, enable migratable servers for automatic migration on the Migration tab, and so on.

Deploying Applications

Before deploying your application to an Oracle WebLogic Server cluster, you might need to perform some configuration work at the application level. The exact configuration depends on what type of application you have and which features you want to use.

For example, to enable replication of the session data across the clustered servers for a Web application that you want to deploy to a cluster, you'll need to edit the weblogic.xml file, setting the persistent store type to replicated or replicated_if_clustered, as follows:

<!-- Insert session descriptor element here -->

<session-descriptor>

<persistent-store-type>replicated_if_clustered</persistent-store-type>

</session-descriptor>
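Before repackaging, it can be handy to check that the descriptor really requests session replication. The Python sketch below reads a weblogic.xml fragment and reports the persistent store type; the helper names are hypothetical, and the sample document omits the XML namespace that a real weblogic.xml normally declares (the helper ignores namespaces either way).

```python
import xml.etree.ElementTree as ET

REPLICATING_TYPES = {"replicated", "replicated_if_clustered"}


def local_name(tag):
    """Strip an optional '{namespace}' prefix from an element tag."""
    return tag.rsplit("}", 1)[-1]


def session_store_type(xml_text):
    """Return the persistent-store-type value, or None if it is not set."""
    root = ET.fromstring(xml_text)
    for elem in root.iter():
        if local_name(elem.tag) == "persistent-store-type":
            return (elem.text or "").strip()
    return None


descriptor = """<weblogic-web-app>
  <session-descriptor>
    <persistent-store-type>replicated_if_clustered</persistent-store-type>
  </session-descriptor>
</weblogic-web-app>"""

store_type = session_store_type(descriptor)
replicates = store_type in REPLICATING_TYPES
```

For the sample descriptor, `store_type` is `replicated_if_clustered` and `replicates` is `True`.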

After modifying the settings of your application and repackaging it, you can proceed to deployment.

There are several tools you can use to deploy applications to an Oracle WebLogic Server cluster, including the following:

- weblogic.Deployer

- The WebLogic Scripting Tool (WLST)

- The Administration Console
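For the command-line route, a weblogic.Deployer invocation can be assembled as an argument list, for instance to hand to a process launcher. The Python sketch below only builds the list and does not run anything. The host, user, application, and cluster names are hypothetical, the password is deliberately left out, and the sketch assumes the WebLogic classes are already on the Java classpath.

```python
def deployer_command(admin_url, username, app_name, source, targets):
    """Build an argument list for deploying an archive with weblogic.Deployer."""
    return [
        "java", "weblogic.Deployer",
        "-adminurl", admin_url,
        "-username", username,
        "-deploy",
        "-name", app_name,
        "-source", source,
        "-targets", ",".join(targets),  # a cluster name targets the whole cluster
    ]


# All values below are placeholders for illustration.
command = deployer_command(
    admin_url="t3://adminhost:7001",
    username="weblogic",
    app_name="my_app",
    source="/home/oracle/apps/my_app.war",
    targets=["my_cluster"],
)
```

Keeping the arguments in a list rather than a single string avoids shell quoting problems when paths contain spaces.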

Listed below are the steps for deploying an application to a cluster using the Administration Console.

- In the Administration Console, open the Your_Domain->Deployments page and click the Install button to launch the Install Application Assistant.

- On the first screen of the wizard, in the Current Location field, specify the location in which you have your application archive, and then click to select that archive in the list below.

- On the next screen, make sure that the Install this deployment as an application option is selected.

- On the Select deployment targets screen, select the cluster in the Clusters table, and then make sure that the All servers in the cluster option is selected to ensure homogeneous deployment, as shown in Figure 9:

- On the next screen, click Finish to complete the wizard and start the deployment process.

After completing the above steps, you'll be directed to the settings for the your_app page, where you'll be able to view the installed configuration of your application and test the deployment.

Conclusion

Your application may slow down, becoming less responsive as the server it is deployed to takes longer and longer to react to too many requests. The power of an Oracle WebLogic Server cluster lies in its ability to dynamically increase the capacity of a deployed application without interruption of service, even if a particular server instance in the cluster fails.

This article should give you a good grasp of the ideas behind the Oracle WebLogic Server cluster technology as well as how to set up, configure, and use a cluster. In particular, we looked at a simple cluster configuration and learned how the standard Oracle WebLogic Server tools can be used for setting up and configuring a cluster and then deploying applications to it.

See Also

Using Clusters for Oracle WebLogic Server

About the Author

Yuli Vasiliev is a software developer, freelance author, and consultant currently specializing in open source development, Java technologies, business intelligence (BI), databases, service-oriented architecture (SOA), and—more recently—virtualization. He is the author of a series of books on Oracle technology, the most recent one being Oracle Business Intelligence: An introduction to Business Analysis and Reporting (Packt, 2010).