Looking "Under the Hood" at Networking in Oracle VM Server for x86

Published November 2012 (updated July 2013), by Gregory King and Suzanne Zorn

This article looks "under the hood" of Oracle VM Server for x86, exploring the underlying logical network components that are created by Oracle VM Manager.

Normally, you configure Oracle VM Server for x86 networking with the Oracle VM Manager GUI. The GUI simplifies administration, speeds deployment, and reduces the chance of configuration errors. Hiding the implementation details using the GUI has its benefits, but here on the Oracle Technology Network, we like to expose the heart of the machine; it's not only interesting, but it can help you troubleshoot problems that might arise.

Note: This article assumes you are familiar with basic Oracle VM Server for x86 concepts and terminology and general network-related concepts, such as bonding and virtual LANs (VLANs). For an overview, refer to Chapter 2, "Introduction to Oracle VM," in the Oracle VM User's Guide.

About the Oracle VM Server for x86 Networking Model

Oracle VM Server for x86 is based on the Xen kernel and underlying hypervisor technology, and it capitalizes on the Xen paradigm for management of Oracle VM guests. The Oracle VM Server for x86 networking implementation follows the basic Xen networking approach, with some modifications, and supports standard networking concepts such as network interface bonding and VLANs.

Oracle VM Server for x86 networks are logical constructs that you create in the Oracle VM Manager GUI by combining a variety of individual components, or building blocks, into whatever network infrastructure you need. The building blocks are physical Ethernet ports, bonded ports (optional), VLAN segments (optional), virtual MAC addresses (VNICs), and network channels. These networks are used by the Oracle VM servers and guests to communicate with each other.

Networking Roles (Channels)

A key concept of the Oracle VM Server for x86 networking model is its use of networking roles (also called channels) that are assigned to each network in the virtualized environment. These roles are implemented as flags that tell Oracle VM Manager how the server pool is configured and determine which type of agent traffic is sent over which individual network. Administrators can create separate networks for each role to isolate traffic, or they can configure a single network for multiple roles. The network roles include the following:

- Server Management—Used to designate on which network the Oracle VM Manager will communicate with the agents on physical Oracle VM servers within any given server pool. This allows IP addresses to be assigned to interfaces on the Oracle VM servers.

- Cluster Heartbeat—Used to send Oracle Cluster File System 2 heartbeat messages between the Oracle VM servers in a server pool to verify that the Oracle VM servers are present and running. Assigning this role to multiple networks in a single server pool does not accomplish anything since Oracle Cluster File System 2 supports the use of only one subnet for heartbeat traffic. This allows IP addresses to be assigned to interfaces on the Oracle VM servers.

- Live Migrate—Used when migrating virtual machines from one Oracle VM server to another in the same server pool. This allows IP addresses to be assigned to interfaces on the Oracle VM servers.

- Storage—This can be used to enable a network port for any kind of network traffic on the Oracle VM servers and does not actually limit or restrict any particular network port to NFS or iSCSI traffic only; any enabled network port can be used for NFS or iSCSI traffic whether or not you assign this channel to an Oracle VM network. This allows IP addresses to be assigned to interfaces on the Oracle VM servers.

- Virtual Machine—Used for communication among the Oracle VM guests in the server pool and also between Oracle VM guests and external networks. This instructs the Oracle VM servers to create a Xen bridge that acts like a network switch to get network traffic to and from Oracle VM guests. You can assign this role to multiple Oracle VM networks as needed. This does not allow IP addresses to be assigned to interfaces on the Oracle VM servers.
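The role descriptions above can be condensed into a small lookup table. The following Python sketch is illustrative only: the names `ROLE_ALLOWS_SERVER_IP`, `BRIDGE_CREATING_ROLES`, and `roles_allowing_server_ip` are hypothetical and are not part of any Oracle VM API. It records only what the list states for each role.

```python
# Hypothetical summary of the role list above (not an Oracle VM API).
# Value: does the role allow IP addresses to be assigned to interfaces
# on the Oracle VM servers?
ROLE_ALLOWS_SERVER_IP = {
    "Server Management": True,
    "Cluster Heartbeat": True,
    "Live Migrate": True,
    "Storage": True,
    "Virtual Machine": False,
}

# The role that the list describes as instructing the servers to create
# a Xen bridge.
BRIDGE_CREATING_ROLES = frozenset({"Virtual Machine"})


def roles_allowing_server_ip():
    """Return the role names that allow server-side IP assignment, sorted."""
    return sorted(role for role, ok in ROLE_ALLOWS_SERVER_IP.items() if ok)
```

Calling `roles_allowing_server_ip()` returns every role except Virtual Machine, which matches the last sentence of each bullet.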

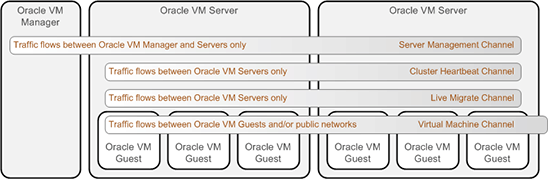

Figure 1 shows the network channels used by Oracle VM Server for x86 and illustrates the varying scope for each channel type. The Server Management channel includes communication between Oracle VM Manager and the Oracle VM servers in the server pool. Both the Cluster Heartbeat channel and the Live Migrate channel carry traffic among only the Oracle VM servers. For these channels, traffic can be on private, non-routed subnets as communication flows between only the servers in the pool. The traffic doesn't require access to routers; it requires access to only the switches that connect the servers and manager. In contrast, a Virtual Machine channel includes traffic between Oracle VM guests as well as communication with external public networks.

Figure 1. Oracle VM Server for x86 network channels.

When you use the command line interface to explore the networks configured by Oracle VM Manager, commands executed on the Oracle VM server will show network devices for all channel types. In contrast, commands executed on an Oracle VM guest will show only network devices for networks assigned to the Virtual Machine channel.

Note: Oracle VM Server for x86 supports a wide range of options in network design, varying in complexity from a single network to configurations that include network bonds, VLANs, bridges, and multiple networks connecting the Oracle VM servers and guests. Network design depends on many factors, including the number and type of network interfaces, reliability and performance goals, the number of Oracle VM servers and guests, and the anticipated workload. Discussion of best practices for Oracle VM Server for x86 network design is outside the scope of this article.

How Oracle VM Server for x86 Implements the Network Infrastructure You Configure

During the initial installation of Oracle VM Server for x86, a logical interface named bond0 is created using a single physical network interface, typically the eth0 interface (although it can be anything you choose during the installation). During the server discovery phase, this bond is located and automatically assigned three of the network roles: Server Management, Cluster Heartbeat, and Live Migrate. You can later add an additional physical interface to bond0, or you can create other networks to be used for Cluster Heartbeat and Live Migrate roles. (The Server Management role cannot be reassigned after installation.)
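One way to check which physical interfaces currently belong to bond0 is to read the status file that the standard Linux bonding driver publishes under /proc/net/bonding. The following Python sketch assumes that this file is available in Dom0 and that a Python 3 interpreter is at hand (the text can also be captured on the server and parsed elsewhere). The function names are hypothetical.

```python
import re


def bond_slaves(status_text):
    """Return the slave interface names listed in bonding status text."""
    # The bonding driver emits one "Slave Interface: <name>" line per member.
    return re.findall(r"^Slave Interface:\s*(\S+)", status_text, flags=re.MULTILINE)


def read_bond_slaves(bond="bond0", proc_dir="/proc/net/bonding"):
    """Read the status file for one bond and return its slave interfaces."""
    with open(f"{proc_dir}/{bond}") as fh:
        return bond_slaves(fh.read())
```

On a freshly installed server, `read_bond_slaves()` would be expected to list the single interface chosen during installation; after a second port is added to bond0, both names should appear.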

Networks assigned the Server Management, Cluster Heartbeat, and Live Migrate roles carry traffic among the Oracle VM servers in a server pool (but they do not communicate with Oracle VM guests). Like all Oracle VM Server for x86 networks, they can be created using network ports, bonded devices, or VLAN segments. Oracle VM Manager is used to create any bonded devices or VLAN segments that are needed by a network; the requisite logical devices needed to implement those networks are automatically created and configured on the Oracle VM servers.

Networks assigned the Virtual Machine role carry traffic among the Oracle VM guests and to/from external networks. These networks can also be built using network ports, bonds, or VLAN segments, and Oracle VM Server for x86 creates the underlying logical network devices. A logical bridge device is created for each network assigned the Virtual Machine role. In addition, Oracle VM Server for x86 (based on the Xen model) uses a set of paired front-end and back-end drivers to handle network traffic between the Oracle VM guests and the physical network devices on the Oracle VM server. The front-end driver (netfront) resides in the guest domain, while the back-end driver (netback) resides in Dom0. The required logical devices are created automatically by Oracle VM Server for x86 when a guest domain runs on an Oracle VM server, and they are deleted when the domain is shut down.
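To see which devices are attached to each bridge, you can inspect Linux sysfs in Dom0. The following Python sketch assumes that the Xen bridges are ordinary Linux bridge devices, so that each one has a `bridge` directory and a `brif` directory under /sys/class/net. The function name and the `sys_net` parameter are hypothetical; the parameter exists only so that the function can be pointed at a different directory for testing.

```python
import os


def list_bridges(sys_net="/sys/class/net"):
    """Map each bridge device name to the sorted list of its attached ports."""
    bridges = {}
    for dev in sorted(os.listdir(sys_net)):
        # Only bridge devices expose a "bridge" subdirectory in sysfs.
        if os.path.isdir(os.path.join(sys_net, dev, "bridge")):
            brif = os.path.join(sys_net, dev, "brif")
            # "brif" holds one entry per port attached to the bridge.
            bridges[dev] = sorted(os.listdir(brif)) if os.path.isdir(brif) else []
    return bridges
```

On a server with running guests, the ports listed for a bridge would be expected to include the underlying port, bond, or VLAN segment together with one netback device per guest interface on that network.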

These logical devices differ slightly for a hardware virtualized guest (which uses an unmodified kernel whose instructions to network devices are trapped and emulated when needed) and a paravirtualized guest (whose kernel is aware of the virtualized environment). Additional logical devices are also created to support VLANs. The following examples illustrate these differences.

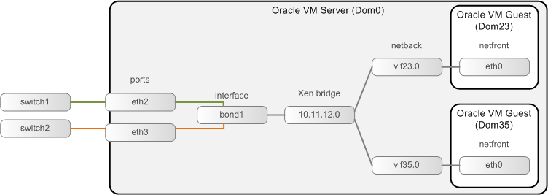

How Oracle VM Server for x86 Works with Paravirtualized Guest Operating Systems

The first example (see Figure 2) illustrates networking with paravirtualized guests or with hardware virtualized guests that use paravirtualized network drivers. Network devices such as eth0 on Oracle VM guests communicate through a device driver known as the netfront driver. Paired logical devices on Dom0, the netback devices (such as vif23.0), catch traffic from the netfront drivers and pass the packets along to a Xen bridge. The Xen bridge is tasked with passing network traffic from the netback devices to network devices on the Oracle VM server, which can be logical devices, such as the bonded device shown in Figure 2, or physical devices, such as a single physical Ethernet port. A single Xen bridge communicates with the netback drivers from all Oracle VM guests that belong to that network.

Figure 2. Network devices used by paravirtualized guests (or hardware virtualized guests with paravirtualized drivers).

In the example in Figure 2, the Oracle VM server has two physical network interfaces, eth2 and eth3, that are used for this network. You first use Oracle VM Manager to create a bond using these two physical interfaces. Oracle VM Server for x86 creates the logical bond interface and assigns the name bond1. Next, you use Oracle VM Manager to create a new network with a Virtual Machine role, and you use the newly created bonded device on this Oracle VM server. Because this network is assigned the Virtual Machine role, Oracle VM Server for x86 automatically creates a bridge device. Netback devices will be automatically created for each Oracle VM guest that runs on this Oracle VM server. The netback devices created for each Oracle VM guest are named vifXX.Y, where XX is the domain ID currently used by that guest and Y indicates which netfront (eth) device is associated with this netback device. For example, a netback device name of vif23.0 indicates that the virtual interface is associated with eth0 within the guest that has a domain ID of 23.

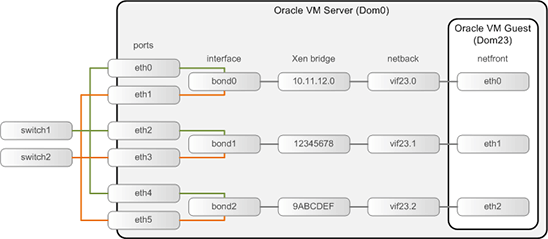

There can be more than one network created with the Virtual Machine role, and an Oracle VM guest can belong to more than one network, as shown in Figure 3. Oracle VM Server for x86 creates separate logical bridge devices for each network. Each bridge is assigned a name that matches the Oracle VM Server for x86 network ID. This network ID is displayed in the Oracle VM Manager interface in the Networking tab. The network created on bond0 is the only network that uses the subnet address as the network ID and, consequently, the name of the bridge. Other networks are assigned a random hexadecimal name as their network ID. In the example shown in Figure 3, the bridge created on bond0 is named 10.11.12.0; the bridge created on bond2 is named 9ABCDEF.
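The two bridge naming styles described above can be told apart mechanically. The following Python sketch is a hypothetical helper, not an Oracle VM tool. It assumes that a network ID is either a dotted IPv4 subnet address or a string of hexadecimal digits, as in the Figure 3 example.

```python
import ipaddress
import string


def classify_network_id(name):
    """Classify a bridge name as 'subnet-address', 'hex-id', or 'unknown'."""
    try:
        # A dotted-quad name such as 10.11.12.0 parses as an IPv4 address.
        ipaddress.IPv4Address(name)
        return "subnet-address"
    except ValueError:
        pass
    # Otherwise accept a non-empty string made only of hexadecimal digits.
    if name and all(ch in string.hexdigits for ch in name):
        return "hex-id"
    return "unknown"
```

With the names from Figure 3, `classify_network_id("10.11.12.0")` yields `"subnet-address"` and `classify_network_id("9ABCDEF")` yields `"hex-id"`.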

Figure 3. Multiple-network configuration for paravirtualized guests (or hardware virtualized guests with paravirtualized drivers).

A separate netback device is created for each network. The network interfaces within the guest operating system are named sequentially, for example, eth0, eth1, and so on. The netback devices are named vifXX.Y (for example, the three netback devices in this example are named vif23.0, vif23.1, and vif23.2). In this naming convention, XX denotes the current domain ID, and Y indicates which netfront (eth) device is associated with this netback device. For example, a netback device name of vif23.0 indicates that the virtual interface is associated with eth0 within the guest that has a domain ID of 23. Similarly, the name vif23.1 indicates that the virtual interface is associated with eth1 inside that same domain.
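The vifXX.Y convention is regular enough to decode with a short helper. The following Python sketch is illustrative: the `Netback` type and `parse_netback` function are hypothetical names, and the mapping from Y to a guest device name assumes the sequential eth0, eth1, ... naming described above.

```python
import re
from typing import NamedTuple


class Netback(NamedTuple):
    domain_id: int      # XX: the domain ID currently used by the guest
    guest_device: str   # the netfront device inside the guest, derived from Y


_VIF_NAME = re.compile(r"^vif(\d+)\.(\d+)$")


def parse_netback(name):
    """Decode a netback device name such as 'vif23.0'."""
    match = _VIF_NAME.match(name)
    if match is None:
        raise ValueError(f"not a netback device name: {name!r}")
    return Netback(int(match.group(1)), "eth" + match.group(2))
```

For example, `parse_netback("vif23.1")` returns a `Netback` with `domain_id` 23 and `guest_device` `"eth1"`. Because the domain ID is the one currently in use, the same guest can appear under a different vif name after it is restarted.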

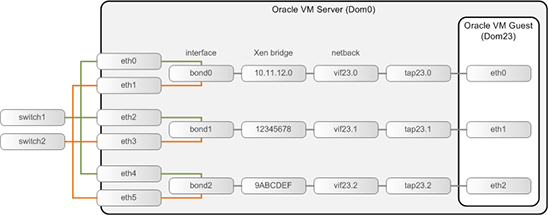

How Oracle VM Server for x86 Works with Hardware Virtualized Guest Operating Systems

Figure 4 shows a similar configuration for hardware virtualized guests without paravirtualized drivers. The logical network devices are the same as for paravirtualized guests, with an additional tapio Quick EMUlator (QEMU) device that is created on Dom0. The QEMU (tapio) device fools the hardware virtualized guest operating system into thinking network communication is flowing directly to and from physical hardware.

This tapio device in turn communicates with the vif netback device, which communicates with the logical bridge device. The tapio devices are assigned names of tapXX.Y, where XX is the current domain ID and Y indicates the corresponding network interface (eth) on the guest operating system that is associated with this netback device. For example, the device name tap23.1 indicates that the virtual interface is associated with eth1 within the guest that has the domain ID of 23.
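Given a list of device names from Dom0, the tap and vif devices that serve the same guest interface can be grouped together. The following Python sketch assumes that the two devices for one interface share the same XX.Y suffix, as the naming described above suggests. The function name is hypothetical.

```python
import re

_GUEST_DEVICE = re.compile(r"^(tap|vif)(\d+)\.(\d+)$")


def group_by_guest_interface(device_names):
    """Group tap/vif device names by (domain ID, guest interface index)."""
    groups = {}
    for name in device_names:
        match = _GUEST_DEVICE.match(name)
        if match is None:
            continue  # skip bonds, bridges, and physical ports
        kind = match.group(1)
        key = (int(match.group(2)), int(match.group(3)))
        groups.setdefault(key, {})[kind] = name
    return groups
```

An entry that contains both a `"tap"` and a `"vif"` name points to a hardware virtualized guest interface without paravirtualized drivers; an entry with only a `"vif"` name points to a paravirtualized interface.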

Figure 4. Network configuration for hardware virtualized guests.

How Oracle VM Server for x86 Works with VLANs

Oracle VM Server for x86 networks can also be configured using multiple VLAN segments on a physical network port or bonded network interface. VLANs provide multiple independent logical networks that are typically used to isolate traffic on a single interface for increased data security or performance reasons.

Although Oracle VM Server for x86 can use VLANs, the actual VLAN creation occurs outside of Oracle VM Server for x86: Network administrators create VLANs and assign VLANs to switch ports on Ethernet switches. Oracle VM Server for x86 can then take advantage of the created VLANs. Using Oracle VM Manager, an administrator first creates a VLAN group for a network port or bond. Then, one or more VLAN segments can be created in that VLAN group.

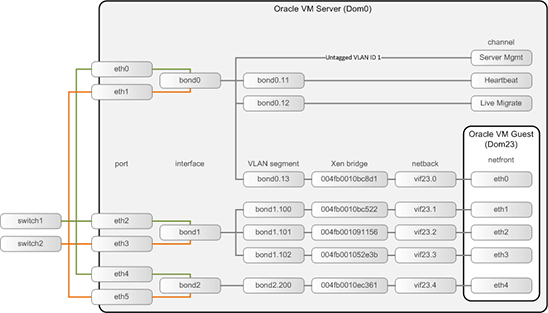

Figure 5 shows an example Oracle VM Server for x86 network configuration that includes VLANs. In this example, there are six physical network devices on the Oracle VM server (eth0 through eth5). These devices are used to create three bonded network interfaces (bond0, bond1, and bond2). VLAN groups are created on each of these bonded interfaces.

Figure 5. Example Oracle VM Server for x86 network configuration that includes VLANs.

Four VLANs are created in the first VLAN group (bond0) shown in Figure 5:

- The first (untagged) is assigned the Server Management role.

- The second (bond0.11) is assigned the Cluster Heartbeat role.

- The third (bond0.12) is assigned the Live Migrate role.

- The last (bond0.13) is assigned the Virtual Machine role. This VLAN segment has a logical bridge device associated with it for communication with the Oracle VM guests.

Similarly, three VLANs are created on the VLAN group created for bond1, and a single VLAN is created on bond2. The networks built on these VLANs are all assigned the Virtual Machine role. Therefore, all of these VLAN segments have an associated logical bridge device that is used for communication with the Oracle VM guests.
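VLAN segment device names such as bond0.13 combine the parent device and the VLAN ID. The following Python sketch splits a name into those two parts. The function name is hypothetical, the helper assumes that parent devices are named ethN or bondN as in Figure 5, and the 1 to 4094 range check reflects the usable IEEE 802.1Q VLAN ID range.

```python
import re

_SEGMENT_NAME = re.compile(r"^((?:bond|eth)\d+)(?:\.(\d+))?$")


def parse_vlan_segment(name):
    """Return (parent_device, vlan_id); vlan_id is None for untagged devices."""
    match = _SEGMENT_NAME.match(name)
    if match is None:
        raise ValueError(f"not a port, bond, or VLAN segment name: {name!r}")
    parent, tag = match.group(1), match.group(2)
    if tag is None:
        return parent, None  # untagged traffic on the parent device
    vlan_id = int(tag)
    if not 1 <= vlan_id <= 4094:
        raise ValueError(f"VLAN ID out of range: {vlan_id}")
    return parent, vlan_id
```

Applied to the first VLAN group in Figure 5, `parse_vlan_segment("bond0")` returns `("bond0", None)` for the untagged Server Management segment, and `parse_vlan_segment("bond0.13")` returns `("bond0", 13)` for the Virtual Machine segment.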

Tying It All Together

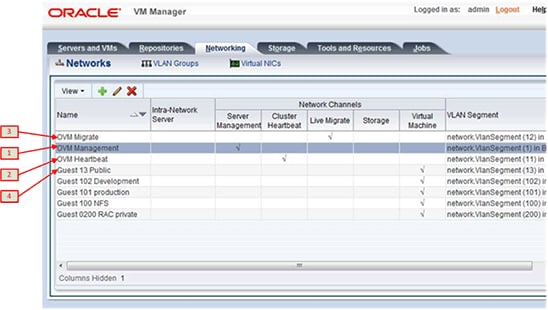

This final section takes an example Oracle VM Server for x86 network configuration and illustrates the relationship among three different perspectives of it: the Oracle VM Manager view, the logical view, and the command line view.

Figure 6 shows the network from the Oracle VM Manager perspective. Separate networks are created for the Server Management, Cluster Heartbeat, and Live Migrate roles, and multiple Virtual Machine networks are created. VLANs are used for all networks in this example. Labels (in red) on this diagram correspond to the networks shown in the logical network perspective in Figure 7.

Figure 6. Perspective from Oracle VM Manager.

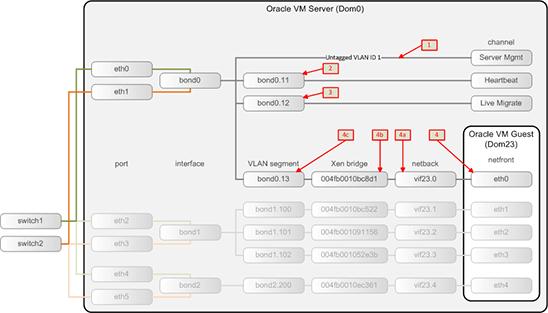

Figure 7 shows the logical perspective of the network devices that are created by Oracle VM Server for x86. For simplicity, this figure emphasizes only the networks associated with bond0. Labels (in red) on this diagram correspond to the networks shown in the Oracle VM Manager perspective in Figure 6.

Figure 7. Logical perspective of network devices.

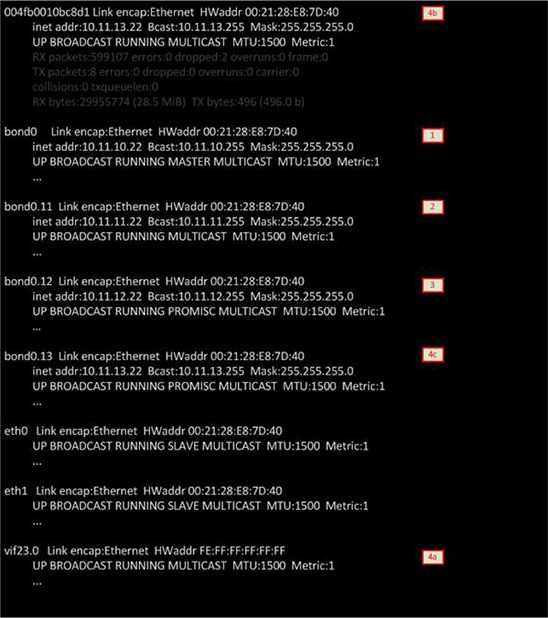

Figure 8 shows the network from the perspective of the command line interface. This figure shows partial output from the ifconfig command run on the Oracle VM server. (For simplicity, only devices related to bond0 are shown.) Labels (in red) in this figure correspond to the networks shown in Figure 6 and Figure 7.

Figure 8. Perspective from the command line interface.

The devices eth0 and eth1 shown in the command output in Figure 8 correspond to the physical network interfaces on the Oracle VM server (Dom0). The logical device bond0, created from these two physical devices, is assigned the IP address 10.11.10.22. The three tagged VLAN segments created on this bonded network interface are named bond0.11, bond0.12, and bond0.13, and they are assigned the IP addresses 10.11.11.22, 10.11.12.22, and 10.11.13.22, respectively. The last VLAN segment (bond0.13) is assigned the Virtual Machine role, so a logical bridge device (labeled 4b in the output) is created for it. Note that this bridge is assigned the same IP address as the associated VLAN segment. The netback device vif23.0 (labeled 4a in the output) corresponds to the Oracle VM guest whose domain ID is 23. If additional Oracle VM guests run on this virtual machine network, additional netback devices are created and appear in the ifconfig command output with different device names (for example, vif34.0 or vif18.0).
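When the ifconfig output is long, it can help to reduce it to a table of device names and IPv4 addresses. The following Python sketch assumes the classic net-tools output layout, in which each device block starts at the left margin and the address appears as `inet addr:<address>` on an indented line. The function name is hypothetical, and the layout might differ on other releases.

```python
import re


def inet_addrs(ifconfig_text):
    """Map each device in ifconfig output to its IPv4 address (or None)."""
    addrs = {}
    device = None
    for line in ifconfig_text.splitlines():
        if line and not line[0].isspace():
            # A new device block starts with the device name in column 0.
            device = line.split()[0]
            addrs[device] = None
        elif device is not None:
            match = re.search(r"inet addr:(\S+)", line)
            if match:
                addrs[device] = match.group(1)
    return addrs
```

Devices without an address, such as the netback devices, map to `None`, which makes it easy to separate them from the bond, VLAN segment, and bridge devices that carry the server's IP addresses.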

See Also

The following resources are available for Oracle VM Server for x86:

- Oracle Virtualization Website: http://www.oracle.com/virtualization

- Oracle's Virtualization blog: http://blogs.oracle.com/virtualization/

About the Authors

Gregory King joined the Oracle VM product management team as a senior best practices technical consultant specializing in Oracle VM Server. Greg has a significant amount of hands-on experience with high availability, networking, storage, and storage networking, including SAN boot technology and server automation in production data centers, since 1993. His primary responsibility with the team is to develop and document solutions including best practices as well as foster a deeper understanding of the inner workings of Oracle VM with the goal of helping people become more successful with the product.

Suzanne Zorn has over 20 years of experience as a writer and editor of technical documentation for the computer industry. Previously, Suzanne worked as a consultant for Sun Microsystems' Professional Services division specializing in system configuration and planning. Suzanne has a BS in computer science and engineering from Bucknell University and an MS in computer science from Rensselaer Polytechnic Institute.