MDM and SOA: Be Warned!

by Jürgen Kress, Berthold Maier, Hajo Normann, Danilo Schmeidel, Guido Schmutz, Bernd Trops, Clemens Utschig-Utschig, Torsten Winterberg

December 2013

Introduction

At least one respected analyst organization suggests that investment in service-oriented architecture (SOA) is wasted without enterprise information that SOA can exploit. That firmly held opinion vividly describes the relationship between SOA and master data management (MDM). An essential principle behind SOA is the reuse of components and the flexibility of using these components to support new functionalities or processes. MDM provides universal components (or services) for consistent data maintenance and distribution. This is where the architecture concepts and principles of SOA meet MDM.

This article begins with a brief motivation for MDM and a definition of its terms. It then presents typical variants of MDM architecture concepts and illustrates the interplay of MDM and SOA with reference to the architecture patterns.

Motive

Increasing pressure from competition means that business models and underlying business processes have to be adapted in ever shorter cycles. At the same time, globalization and the digital networking of companies are making interaction with external business partners even more complex. Securely exchanging high-quality data is crucial for increasing efficiency in processes. The central issue is therefore the quality of information, and with it the reliability of transactions, evaluations, and reports. Once a company is no longer in a position to provide a consistent and consolidated view of its central business objects, implementing a company-wide policy for master data management becomes a good idea.

Unfortunately, in many companies today, IT systems cannot keep up with fast changes in organization, business, and even technology. As a result, companies accumulate a vast, ever-growing web of IT systems with known integration problems. This heterogeneity creates a variety of challenges when using master data, including differences in:

- data structures and formats in master data

- specifications and understanding of the master data values in the participating organizational units

- validations and plausibilities (data quality)

- processes and responsibilities concerning data sovereignty (data governance)

- business processes with partially conflicting functionalities in the application systems

- organizational units that have different systems for master data maintenance

The result of these problems is inconsistent information about the underlying business objects, the master data. As already mentioned, data is essential input for all processes. If this source of information runs dry, or if the cost of interactions based on the data becomes too high, the value of the information becomes questionable and the company is faced with serious concerns. MDM on the business side and the SOA approach on the IT side can counteract this problem together.

BARC's analysts assert: "Over half of all IT experts believe data quality … to be the biggest challenge". BARC goes on to say that "75 % of participants think master data management is the most important trend for their company" [REF-1].

Conceptualization

In common IT usage, there is a shared, basic understanding of master data and its importance for the company and the IT systems concerned. Master data form the basis of all business activities in a company and its business processes. Master data describe the basic business objects of a company, and should therefore be considered as a company's "virtual capital" [REF-2]. In his presentation at the 7th Stuttgarter Softwaretechnik Forum in September 2011, Professor Dieter Spath even claimed that information, and therefore also the master data, should be considered assets similar to a company's equipment and be subject to asset management [REF-3].

MDM organizes how the master data is handled, something that every company needs in order to carry out its business processes.

In the literature, there is a broad range of definitions for master data and master data management [REF-4] [REF-5] [REF-6]. This article uses the following definitions:

- Master Data Management – Master Data Management (MDM) is management to ensure the quality of the master data. Its purpose is to guarantee that master data objects are suitable for use in all added value processes in the company. MDM includes all the necessary operative and controlling processes that encompass a quality-assured definition and guarantee that master data objects will be maintained and managed. In addition, MDM provides the IT components to map this process. MDM consequently assumes a supporting role, and it improves added value implicitly in two ways. First, data quality management continuously improves the data quality of master data and thereby the value of the information. Second, master data objects that are suitable for use in all core processes optimize those processes, which in turn improves added value.

- Master Data Object – Master data objects are official, underlying business objects within the company that are used in the added value processes. A master data object describes the structure (blueprint) and the quality requirements (such as validations and permissible values within the structure). In conversation, users frequently take reference data (value lists) to be the actual master data of the company. Typical examples are standardized value lists, such as ISO country codes and ISO currency codes. Master data use these lists as the foundation for forming groupings, hierarchies, and classifications. In this article, master data are not only reference lists but all official, underlying business objects.
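The definition of a master data object as structure plus quality requirements can be illustrated with a minimal sketch. The `Customer` type, its fields, and the shortened code lists are illustrative assumptions and do not come from any particular MDM product; the reference lists stand in for the full ISO value lists.

```python
from __future__ import annotations

from dataclasses import dataclass

# Reference data (value lists): shortened, illustrative excerpts only.
ISO_COUNTRY_CODES = {"DE", "CH", "US"}
ISO_CURRENCY_CODES = {"EUR", "CHF", "USD"}


@dataclass(frozen=True)
class Customer:
    """Hypothetical master data object: the structure (blueprint) of a customer."""
    customer_id: str
    name: str
    country: str
    currency: str


def validate(customer: Customer) -> list[str]:
    """Return the violated quality requirements; an empty list means valid."""
    errors = []
    if not customer.customer_id:
        errors.append("customer_id must not be empty")
    if not customer.name.strip():
        errors.append("name must not be empty")
    # Permissible values are taken from the reference lists.
    if customer.country not in ISO_COUNTRY_CODES:
        errors.append(f"unknown country code: {customer.country}")
    if customer.currency not in ISO_CURRENCY_CODES:
        errors.append(f"unknown currency code: {customer.currency}")
    return errors
```

In this sketch the reference lists are only inputs to the validation, which mirrors the distinction drawn above between reference data and the master data objects themselves.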

Procedure

In line with the definition, MDM is implemented company-wide. For this to work, companies reassess the business processes and IT systems with regard to the changed use of the master data. Furthermore, the initiatives and projects of MDM itself are monitored and controlled via a management system. Finally, the strategy for the MDM is embedded into the general company strategy, and thereby contributes indirectly to improving added value. The introduction of MDM and its sustainable operation is a business transformation [REF-7].

For this reason, MDM is not an IT project, but rather a development plan for business. IT provides an infrastructure for this business transformation and plays a part in the MDM development plan, although control and initiation should be conducted through business.

Generally, reference frameworks are used for structuring and communicating complex correlations. The reference framework presented here structures the essential elements of the MDM development plan and subsequently serves as the framework for the procedure. The following explanations are based on business engineering, an approach that was developed by the Institute of Information Management at the University of St. Gallen for designing business transformations based on the strategic use of IT systems [REF-8].

Within its reference framework, business engineering considers three levels: the strategy, the organization, and the system architecture (data and applications). These areas have to be designed to successfully execute a business transformation in general, and therefore also within the framework (Figure 1) of the planning and performance of the MDM development plan [REF-9].

Figure 1: The reference framework for the MDM development plan.

MDM Strategy

The instruments for formulating the vision and strategy are used in most cases to communicate and control medium or long-term development plans with organizational changes. The MDM team creates an overall concept or vision for organizing and managing MDM. This concept conveys the purpose of the MDM, explains the reasons for the change, outlines the goals, and describes guidelines for its use. It must be ensured that the MDM concept does not contradict the established company goals.

The operationalization at an abstract level stems from this vision, through the formulation of the strategy with initiatives for the MDM. The strategy specifies the fields of activity and mirrors the wishes and ideals of specific decision-makers. In connection with the vision, the strategy describes the expectations of the future situation. Finally, as part of the MDM strategy, the road map and milestones are developed and initiatives for the accompanying change management are defined.

MDM Organization

The MDM development plan is a subject that embraces the whole company. MDM activities, processes, functionality, and structures must be coordinated across the different business areas of a company. To achieve this, the MDM requires a management system and process and structural organization to sustainably guarantee its success. The MDM requirements defined in the functional architecture form the basis for designing the process, structural organization, and the necessary IT support. The MDM management system operationalizes the development plan for the MDM strategy. It determines the point of departure for establishing the MDM, defines the processes and organization, and matches the assignment of key data to the processes.

At the heart is the adaptation of the existing process organization required for use as part of the MDM. The standards and parameters associated with the master data must be integrated into the company's operating and recurring work cycles. On the one hand, this affects the operating core processes and their activities, which users perform as part of their line function or roles. On the other hand, MDM-specific administrative processes and data governance must be implemented to ensure operational capability and continuous improvements in how master data are used.

A suitable structural organization forms the basis for processes. Employees are hierarchically included in the structural organization, according to their roles in the processes. This may be in their original line function or in a business reporting line, such as in the form of a matrix organization. The functional architecture structures the business requirements for the MDM, and acts as a basis for architecture decisions and the planning of necessary MDM processes and IT components.

MDM System Architecture

As a business transformation, MDM pursues the goal of implementing master data management across the company. To enable this at justifiable operating costs, IT must support the process. This applies, on the one hand, to the manually supported processes of the MDM itself, and, on the other, to the automated processes of data processing and distribution.

For this, a clear system architecture that includes the interdependencies of the systems is necessary. The system architecture for the MDM describes both the current situation and the planned target architecture. If the enterprise architecture approaches are followed [REF-10], the following results are meaningful for the MDM development plan:

- an IT master plan for the MDM development plan, with focus on infrastructure

- a map of master data with data models and data storage

- an overview of cross-company information flows (flow of values and commodities)

- a process map of operative processes that affect the MDM development plan and IT application systems required to support MDM

The design areas include the application architecture with the necessary MDM-specific systems, supporting IT components, the integration architecture for master data logistics, and the underlying system infrastructure. The application systems and candidates for MDM are checked to ensure they provide the functionalities, and assessed with appropriate criteria. The application and integration components are based on an infrastructure platform that is considered separately from the infrastructure architecture. The information architecture performs a special role with MDM. The information architecture describes the master data objects, associations, and their attributes, in addition to cross-company information flows (flow of values and commodities).

The importance of the MDM's data and metadata means that the information architecture must be anchored as a design area within the framework [REF-11]. The information architecture models support the other design areas:

- At a strategic level, the subject matter concerning the master data objects and domains to be considered are defined.

- At an organizational level, the information model describes the organizational relationships. The operative processes use master data objects and their attributes to define these dependencies. Furthermore, the information model also records the rules for validating master data and its quality criteria. On the organization side, data quality management (DQM) requires organizational competence or responsibility for the master data segments.

- At the system architecture level, the information model describes the physical data models that underlie the master data objects. These include, in connection with the integration architecture, the description of the data transformation and distribution processes.

Organizing and executing an MDM development plan in the form of a program has since become a best practice approach:

"Therefore the greatest challenges to success are not technical—they are organizational. It is largely because of these issues that MDM should be considered as a program and not as a project or an application" [REF-12].

From this point forward, this article limits itself to considering the system architecture and the technical aspects of using SOA and MDM.

SOA & MDM

An essential advantage to SOA is the loose coupling of the IT components. This promotes component reuse and makes it simpler and more flexible to use them to support new functionalities or processes [REF-13].

MDM should be based on service-oriented concepts and provide universal components (or services) for consistent data maintenance and retrieval of master data. Here the architecture concepts of SOA are again incorporated into MDM. This claim covers two different views:

- MDM Business Service – reusable business logic for maintaining and validating master data

- MDM Information Service – reusable information for use in the business processes

MDM Business Service

Accessing master data objects such as products, customers, or business partners is necessary throughout all areas of the company, and thereby across the function and management areas: "Their high reusability and the comparable ease with which standardization takes place mean that access to master data objects creates ideal service candidates" [REF-7] [REF-14].

This opinion is also held by the Masons of SOA, who regard access to master data objects as "business entity services," and highlight that master data services amount to a considerable proportion of the developed services in SOA [REF-11] [REF-15].

A trend can also be detected here:

"The bundling of observed master data services into independent application domains anticipates the development of application architectures. In the future, central master data systems which offer services for accessing global master data objects will play an even more important role in application architectures" [REF-16].

Figure 2 demonstrates the transformation from uncontrolled to controlled management of master data:

Figure 2: The transformation to controlled management of master data.

This results in MDM becoming a fundamental component of SOA. Establishing an SOA in the company increases the frequency of the use of central master data services, and thereby implicitly their reuse. Establishing central, managed services for accessing and processing data is therefore a sensible next step.

Performing a service decomposition reveals that the reusable services must also include services that undertake central tasks for managing the lifecycle of master data across the service domains.

In this context, typical difficulties with managing and maintaining the master data are also encountered at the data access level, even independently from an MDM development plan. They include:

- challenges with data protection and data security, depending on the lifecycle stage of data

- problems with the standardized checking of the quality of master data

- performance issues with propagating data to subsystems

In summary, the service-oriented concepts act as leverage for MDM through the:

- use of design principles (frameworks and patterns) from SOA when forming MDM business services to support MDM

- reuse of existing services to manage the lifecycle of master data

- use of enterprise service bus (ESB) concepts and infrastructure for "push" approaches when integrating and distributing the master data within the framework of master data logistics

MDM Information Services

Service decomposition requires central master data services. These support the secondary, more strategic approach to implementing an Information-as-a-Service (IaaS) [REF-17].

Figure 3 demonstrates their support of IaaS:

Figure 3: MDM supports IaaS approaches.

The underlying idea is very simple. A facade which delegates access to the IaaS is used so that single applications or services do not have to individually implement access to the data. This can be considered as a virtualization of data access, as the data sources are now transparent at the layer to be accessed. This provides central control over the typical CRUD operations on the required master data. Control over validations is now guaranteed, and any inconsistencies in the maintenance of different applications and services are resolved.
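A minimal sketch of such a facade follows. The class name `MasterDataFacade`, the dict-based store, and the rule format are assumptions made for illustration; a real IaaS layer would delegate to actual data sources rather than to an in-memory dictionary.

```python
class ValidationError(Exception):
    """Raised when a record violates a centrally defined rule."""


class MasterDataFacade:
    """Hypothetical facade: the single entry point for CRUD on master data."""

    def __init__(self, store, rules):
        self._store = store   # dict-like backing store, hidden from consumers
        self._rules = rules   # list of (predicate, message) pairs

    def _check(self, record):
        # Every write passes through the same central set of validations.
        errors = [message for rule, message in self._rules if not rule(record)]
        if errors:
            raise ValidationError("; ".join(errors))

    def create(self, key, record):
        if key in self._store:
            raise KeyError(f"{key} already exists")
        self._check(record)
        self._store[key] = dict(record)

    def read(self, key):
        # Return a copy so consumers cannot bypass the facade on writes.
        return dict(self._store[key])

    def update(self, key, changes):
        merged = {**self._store[key], **changes}
        self._check(merged)
        self._store[key] = merged

    def delete(self, key):
        del self._store[key]
```

Because consumers only see `create`, `read`, `update`, and `delete`, the backing store could be swapped without changing them, and a rejected update leaves the stored record unchanged.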

If this approach is taken as part of MDM, the three underlying challenges related to the "information-as-a-service (IaaS)" approach can be managed through technical and organizational measures.

From the consumer's point of view, this means:

- Definition – The meaning (semantics) of master data and their attributes must be implemented uniformly and consistently. This also includes the availability of the definition and its uniqueness.

- Quality – Checks of the data quality can now be performed on a "virtualized" platform with complete transparency for the consumer, who can change the master data. The IaaS repository secures common semantics and a system of rules for the validation. This also prevents inconsistencies.

- Governance – The final point is IaaS services lifecycle management. This can be directly covered through the established governance approach for the management of services in the SOA environment.

In Table 1, the central services of MDM are contrasted with the eight factors that determine master data value:

| Factor | Explanation | MDM Central Service |
|---|---|---|
| Quality | Guarantee of the required data quality | Data quality management |
| Consistency | Guarantee of consistent semantics in structured and unstructured information | Metadata management, hierarchy management |
| Security | Secure handling of internal and external (from business partners, customers) data | Use of central services for authorization and authentication |
| Stability | Management of the version control and variants of the data for outdated and long-running processes | Routines for maintaining master data and its versioning/history |
| Granularity | Management of information at all levels of granularity through structures | Metadata management, hierarchy management |
| Currentness | Guarantee of up-to-date master data | Routines for maintaining and distributing master data and its versioning/history |
| Context Dependency | Guarantee of consistent semantics in structured or unstructured information | Metadata management, hierarchy management |
| Origin | Management/tracking of origin and distribution of master data | Metadata management |

Table 1 – MDM central services are contrasted against the eight factors.

This eliminates the two problems that are typically encountered when managing heterogeneous master data in different applications that have varying functionalities and decreasing data quality:

- Inconsistencies when retrieving the "same" master data for different services or applications are avoided.

- Inconsistencies when validating and checking data are avoided.

Data Storage Architecture Pattern

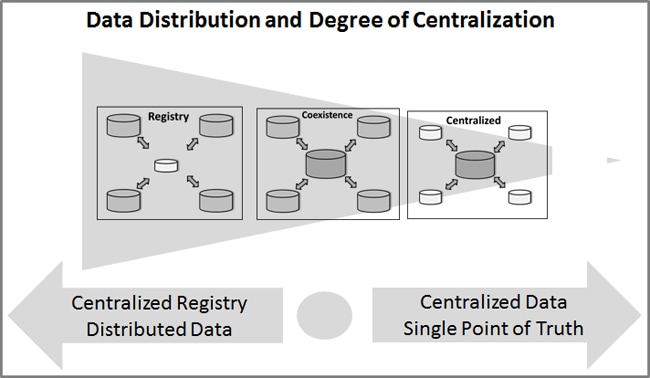

The type of architecture that is selected for data storage has a far-reaching impact on MDM. There are two main approaches for storing and managing master data [REF-18]:

- System of Reference – central directory with decentralized data storage

- System of Record – central data storage with a SPoT

SPoT stands for Single Point of Truth, which is sometimes referred to as a "golden record" [REF-19]. Ultimately it means the existence of a unique, valid master data object.

However, both approaches seldom exist in a "pure" form in practice. They are more likely to be used in combination with other technologies. In practice, three different approaches to data storage can be distinguished, as shown in Figure 4:

- Centralization – MDM with a central database or central business applications

- Registry – MDM with distributed data and a central directory

- Coexistence – MDM with a hybrid approach with the coexistence of different data sources

Figure 4: The underlying architecture pattern for MDM.

Centralization

In this architecture pattern, master data is maintained through one or several of the leading business applications for master data. Here, an MDM solution that is implemented as something of an independent, central application, should also be understood as a managing business application. The global master data, the guarantee of the master data quality, and the processes for maintenance are determined through the managing business applications. Master data is propagated to the various, mostly local, applications by a system for master data logistics. This guarantees that the master data remains consistent.

This approach is widespread in practice, and allows for centralized control which standardizes the master data company-wide. The data is consistently changed through one or more business applications, and underlies the identical master data quality management or methods for guaranteeing quality.

The consolidation of master data on a platform, which is frequently described in the literature, corresponds to this pattern; in that case, the data management process takes the place of the managing application. The master data model is harmonized, as it is central. This approach works very well for company units that can agree jointly on global master data. The SPoT is defined by the managing system or the central database.
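The centralization pattern can be sketched as follows, assuming a single managing application and in-memory copies. The class names are hypothetical, and the explicit `distribute` call stands in for the master data logistics, which in practice is often a batch-oriented process.

```python
class LocalApplication:
    """Hypothetical consuming application that holds a read-only copy."""

    def __init__(self, name):
        self.name = name
        self._copy = {}

    def receive(self, snapshot):
        # Called only by the master data logistics, never by local users.
        self._copy = {key: dict(record) for key, record in snapshot.items()}

    def read(self, key):
        return dict(self._copy[key])


class ManagingApplication:
    """Hypothetical managing application: the only place where writes occur."""

    def __init__(self):
        self._master = {}
        self._subscribers = []

    def register(self, app):
        self._subscribers.append(app)

    def maintain(self, key, record):
        self._master[key] = dict(record)

    def distribute(self):
        # Unidirectional propagation from the central dataset to all copies.
        for app in self._subscribers:
            app.receive(self._master)
```

Between two `distribute` runs, a local copy can lag behind the central dataset, which is the currentness trade-off of a batch-oriented distribution.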

Registry

This approach assumes a high level of autonomy for the different system worlds, and decentralized implementation. As with database systems that are based on the "inverted lists" principle, there is a central list of references (the "registry") that are required to uniquely identify a master data object. The actual pieces of information (master data values) are distributed between the different systems. In this concept, reading master data incurs a high network load per retrieval, and the approach is therefore recommended mainly for MDM approaches that rarely need an overall view of the master data.

A central problem can occur in this approach, in the area of data quality management. Since the information is managed and stored in the decentralized systems, distributed governance is needed. This circumstance almost inevitably leads to greater autonomy for the distributed units and a decline in centralized control. Only the consistency of the attributes for identification has to be guaranteed to be standardized through all closed systems.
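The registry idea can be sketched as a central directory that stores only identifiers and references, while the attribute values stay in the source systems. The names `Registry`, `register`, and `resolve` are illustrative assumptions, and the nested dictionaries stand in for remote systems that would be reached over the network.

```python
class Registry:
    """Hypothetical central directory: holds references, not master data values."""

    def __init__(self):
        # global identifier -> list of (system name, local identifier)
        self._refs = {}

    def register(self, global_id, system, local_id):
        self._refs.setdefault(global_id, []).append((system, local_id))

    def resolve(self, global_id, systems):
        """Assemble an overall view by reading every referenced system."""
        view = {}
        for system, local_id in self._refs[global_id]:
            # One read per reference; over a network this is the costly part.
            view.update(systems[system][local_id])
        return view
```

If two systems hold the same attribute, the later reference overwrites the earlier one in this sketch; deciding which value should win is exactly the kind of governance question the pattern leaves to the distributed units.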

Coexistence

One weakness of the registry-based approach is the high network load. One weakness of the centralized approach is the centralization of the functionality through managing business applications. A decentralized approach which follows federal principles and permits coexistence with the existing systems allows these weaknesses to be counterbalanced. It resembles the master/master and master/slave relationships with replications in a database.

In this case, local copies in the distributed MDM systems are also provided and reconciled using master data distribution logistics. The copies and replications can thereby be "subtly" adjusted to the necessary degree. As with the registry approach, DQM takes place locally but with global starting points, as all relevant data are kept in the master in the "middle" (similar to the consolidated approach).

The weak points of this concept are already known from the replication environments. There are problems with control through successful handshaking as well as with the restart points during interruptions. The security of the data in the network cluster is also not sufficiently guaranteed. From our point of view, this approach requires a considerable number of interventions in the existing infrastructure.
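The bidirectional reconciliation can be illustrated with a minimal sketch. It assumes that every record carries a version counter and that the higher version wins; this conflict rule and the function name `reconcile` are assumptions chosen for illustration, as the pattern itself does not prescribe a strategy.

```python
def reconcile(hub, local):
    """Synchronize two replicas in both directions.

    Both replicas map a key to a (version, record) tuple. The higher version
    wins; equal versions are left untouched, so a true conflict (same version,
    different content) would still need manual resolution.
    """
    for key in set(hub) | set(local):
        hub_entry = hub.get(key)
        local_entry = local.get(key)
        if hub_entry is None or (
            local_entry is not None and local_entry[0] > hub_entry[0]
        ):
            # Local change is newer or unknown to the hub: push it up.
            hub[key] = (local_entry[0], dict(local_entry[1]))
        elif local_entry is None or hub_entry[0] > local_entry[0]:
            # Hub change is newer or unknown locally: push it down.
            local[key] = (hub_entry[0], dict(hub_entry[1]))
```

The sketch ignores the handshaking and restart problems named above; an interrupted run would leave the replicas partially reconciled.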

Scenarios for Use

The use of SOA approaches will now be considered in light of the different architecture patterns, with different scenarios for use discussed individually.

Centralization

SOA offers the least leverage for this architecture approach. Data is managed through a business application that is often encapsulated. This application specifies the necessary processes for maintaining the master data and contains the routines for guaranteeing data quality. Data distribution mostly takes place as an ETL process or through conventional, established EAI approaches (Table 2).

| Criteria | Specifications of Centralization Architecture Pattern |
|---|---|
| Data Responsibility | The process and structural organization of the individuals responsible for data quality can be organized in any way. However, responsibility for updating data is borne by the managing business application. |
| Data Storage / Redundancy | Using copied data makes redundancy high, whereby the distribution and administration is governed by clear responsibility. Redundancies within the central master dataset should be low. |
| Data Distribution | Unidirectional distribution in the direction of local business applications from the dataset of the managing system. Central control of distribution. |
| Data Consistency & Harmonization | Both the central dataset and the business applications are consistent. Harmonization is very high, as it is central. |
| Data Currentness & Availability | Availability for read access is very high, as all systems can access the central dataset. Currentness can be adjusted optimally using the master data logistics, although it is mostly batch-oriented. |
| Integration | Integration falls back on state-of-the-art data integration approaches from the BI/DWH environment, and is robust. |
| Data Access | Local business applications have read-only access; only managing business applications can change master data. |
| Relevance of the SOA | Medium to low. The managing business applications that use master data jointly should function with a standardized business logic. Here, SOA MDM services can be used to good effect. In practice, this approach is implemented through standard solutions from the developer, with the result that their system architecture is vital. |
| Use Scenario | Robust approach for ERP/CRM centralized approaches. Platform or staging area for BI/DWH solutions. Implementation of managing business applications, particularly when using standard software. |
| Advantages | Clear and robust technologies used. Secure master data for further processing. |
| Disadvantages | Heavy limitation and dependence on the managing business applications. Distributed processing of global master data through different systems is not supported. |

Table 2 – The specifications of the Centralization architecture pattern.

Registry

Using SOA is vitally important with this architecture approach (Table 3). The propagation of master data is in a distributed environment, and generally uses the transaction or dataset-oriented procedure for propagating changes here. To display the information, different services need to be activated through the network. These services read the information that belongs to the identifying data from the registry in the specific operational systems. This data then arrives back at the starting point via the network, where the read data is compiled into a master dataset.

An integration procedure based on loose coupling offers advantages here. Each change to the structure or to a version of the master data from the local systems must be reported to the central registry in a similar manner.

| Criteria | Specifications of Registry Architecture Pattern |
|---|---|
| Data Responsibility | Remains completely local except for the governance concerning the attributes for identification |
| Data Storage / Redundancy | Very low, and limited to the values for identification |
| Data Distribution | Not necessary (except for the global attributes for identification) |
| Data Consistency & Harmonization | Not necessary (except for the global attributes for identification) |
| Data Currentness | Very high through the realtime reading access |
| Integration | Crucial. Not possible without integration platform, and therefore a real necessity for a stable and robust distribution. Mostly optimized for reading operations which take place in a distributed system. |
| Data Access | Access to the attributes is always through the directory as a switchpoint for access |
| SOA Relevance | Very high. Identification and compilation of the master data is through a centralized and shared access layer. This uses the specific adapter for the connected applications in a transparent manner. |
| Use Scenario | Large distributed amount of master data with local autonomy (in some circumstances due to legal constraints, see also data protection (BDSG)). Exchange platforms for a joint e-commerce marketplace. |
| Advantages | Simple form of implementation for infrequent need for Web access |
| Disadvantages | High network load and extremely time-consuming governance for the DQ due to local autonomy. Guaranteeing data consistency is problematic. |

Table 3 – The specifications of the Registry architecture pattern.

Coexistence

The use of SOA is essential for this architecture approach as well (Table 4). Data is stored in distributed systems. Consequently, the maintenance and management of master data takes place in a distributed environment, and each system can trigger propagation through the master data logistics. Replication can take place asynchronously or synchronously, which allows different SOA-based approaches to implementing the replication, including conflict resolution (similar to the registry approach).

| Criteria | Specifications of Coexistence Architecture Pattern |

|---|---|

| Data Responsibility | Partially global and therefore under central responsibility, but also a high degree of local autonomy |

| Data Storage / Redundancy | The master data globally required is available redundantly, as the calibrated node ultimately acts as a central data hub |

| Data Distribution | Bidirectional distribution of master data with all the problems of a master-master or master-slave replication in distributed systems |

| Data Consistency & Harmonization | Data consistency and harmonization primarily depend on the business logic of the different business applications. The centralized DQ has the right to change the local data. This can lead to consistency problems in the business applications in question |

| Data Currentness & Availability | High, as the business applications only access their datasets |

| Integration | Costly and complex, as the essential load is on the master data logistics that have to distribute the master data to the different systems |

| Data Access | There is no direct access to the calibrated node. Instead, the systems work on the local updated datasets |

| SOA Relevance | High. Overall routines for checking the master data quality must be created, including tools for the data stewards. Moreover, the master data logistics use the MDM services for the CRUD operations during replication |

| Use Scenario | The implementation of the central solution is not possible with local autonomy. The calibrated node serves as a transition to a central solution with managing systems or to the transaction server approach |

| Advantages | Local autonomy is optimally connected with centralized master data management |

| Disadvantages | High degree of complexity for master data logistics, and stringent governance is needed to secure a uniform system of rules in the business applications |

Table 4 – The specifications of the Coexistence architecture pattern.
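
The bidirectional distribution and conflict resolution described for the Coexistence pattern can be sketched in a few lines of Python. This is an illustration under stated assumptions, not the authors' implementation: the `Node`, `Change`, and `replicate` names are hypothetical, the version number stands in for whatever ordering the master data logistics actually use, and "highest version wins, ties broken by origin name" is only one of many possible conflict rules.

```python
from collections import deque
from dataclasses import dataclass, field
from typing import Deque, Dict, List


@dataclass(frozen=True)
class Change:
    key: str
    data: dict
    version: int   # assumed logical clock; a higher value means newer
    origin: str    # node where the change was originally made


@dataclass
class Node:
    name: str
    store: Dict[str, Change] = field(default_factory=dict)
    outbox: Deque[Change] = field(default_factory=deque)

    def write(self, key: str, data: dict, version: int) -> None:
        """Local maintenance: store the change and queue it for replication."""
        change = Change(key, data, version, self.name)
        self.store[key] = change
        self.outbox.append(change)

    def apply(self, change: Change) -> bool:
        """Accept a replicated change only if it wins the assumed conflict rule."""
        current = self.store.get(change.key)
        if current is None or (change.version, change.origin) > (
            current.version,
            current.origin,
        ):
            self.store[change.key] = change
            self.outbox.append(change)  # forward accepted changes onward
            return True
        return False


def replicate(source: Node, targets: List[Node]) -> int:
    """Drain the source outbox (asynchronous style); return changes applied."""
    applied = 0
    while source.outbox:
        change = source.outbox.popleft()
        applied += sum(target.apply(change) for target in targets)
    return applied


hub, crm, erp = Node("hub"), Node("crm"), Node("erp")
crm.write("G-1", {"name": "ACME Corp"}, version=1)
erp.write("G-1", {"name": "ACME Corporation"}, version=2)

replicate(crm, [hub])       # local systems push their changes to the hub
replicate(erp, [hub])
replicate(hub, [crm, erp])  # the hub distributes the winning version
```

After these three replication runs, all three nodes hold the version written in `erp`. A synchronous variant would call `apply` on the targets directly inside `write` instead of queuing the change in an outbox.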

Conclusion

In conclusion, the question still remains as to whether you could pursue a consistent SOA approach without any MDM. Some analysts believe that is not possible, even going so far as to predict significant failure rates for SOA projects that do not include MDM.

We will close with that judgment.

Takeaways

- An MDM initiative is performed by business and IT together, with the responsibility lying with business. The MDM endeavor should be viewed as a program, not a project.

- A clear data sovereignty definition, data structures, and the required maintenance processes for data quality are the foundation for success.

- The use of a reference architecture makes introducing and maintaining MDM systems easier.

- SOA will only achieve the desired results in combination with MDM.

- The reference architecture should be independent from the technology chosen.

References

- [REF-1] BARC: "Data Warehousing 2011 – Status Quo, Herausforderungen und Nutzen, White Paper," BARC Institut, Würzburg, July 2011. p. 28, 35

- [REF-2] M. Kaufman: "Master Data Management"

- [REF-3] D. Spath: "Unternehmensgut: Stammdaten" in the conference transcript from the Stuttgarter Softwaretechnik Forum 2011, Stuttgart, Fraunhofer Verlag, 2011. p. 29

- [REF-4] A. Berson, L. Dubov: "Master Data Management and Customer Data Integration for a Global Enterprise," McGraw Hill, 2007

- [REF-5] A. Dreibelbis, E. Hechler, I. Milman, M. Oberhofer, P. Van Run, D. Wolfson: "Enterprise Master Data Management: An SOA Approach to Managing Core Information," IBM Press, 2008. p. 23

- [REF-6] B. Otto: "Funktionsarchitektur für Unternehmensweites Stammdatenmanagement, Bericht Nr.: BE HSG/CC CDQ / 14," Institute of Information Management at the University of St. Gallen, 2009

- [REF-7] J. W. Schemm: "Zwischenbetriebliches Stammdatenmanagement," Springer, 2009. p. 79 et seq.

- [REF-8] H. Österle, R. Winter, F. Höning, S. Kurpjuweit, P. Osl: "Business Engineering: Core-Business-Metamodell," in: "WISU – Das Wirtschaftsstudium," 2007

- [REF-9] B. Otto: "Funktionsarchitektur für Unternehmensweites Stammdatenmanagement, Bericht Nr.: BE HSG/CC CDQ / 14," Institute of Information Management at the University of St. Gallen, 2009. p. 14

- [REF-10] Staehler et al.: "Enterprise Architecture, BPM und SOA für Business-Analysten," Hanser, 2009

- [REF-11] J. W. Schemm: "Zwischenbetriebliches Stammdatenmanagement," Springer, 2009. p. 79 et seq.

- [REF-12] M. Kaufman: "Master Data Management". p. 16

- [REF-13] T. Erl: "SOA Design Patterns," Prentice Hall Service-Oriented Computing Series. p. 756-757

- [REF-14] J. W. Schemm: "Zwischenbetriebliches Stammdatenmanagement," Springer, 2009. p. 223

- [REF-15] B. Maier, H. Normann, B. Trops, C. Utschig-Utschig, T. Winterberg: "SOA Spezial Ready for Change," Software und Support Verlag, 2009. p. 35 et seq.

- [REF-16] J. W. Schemm: "Zwischenbetriebliches Stammdatenmanagement," Springer, 2009. p. 223

- [REF-17] A. Dreibelbis, E. Hechler, I. Milman, M. Oberhofer, P. Van Run, D. Wolfson: "Enterprise Master Data Management: An SOA Approach to Managing Core Information," IBM Press, 2008. p. 86 et seq.

- [REF-18] A. Dreibelbis, E. Hechler, I. Milman, M. Oberhofer, P. Van Run, D. Wolfson: "Enterprise Master Data Management: An SOA Approach to Managing Core Information," IBM Press, 2008. p. 23

- [REF-19] M. Kaufman: "Master Data Management"