Oracle Multitenant on SPARC Servers and Oracle Solaris

by Ritu Kamboj

Published September 2013

This article describes the advantages of using the Oracle Multitenant option of Oracle Database, Enterprise Edition 12c on SPARC servers running Oracle Solaris. It also describes the steps to enable thin provisioning and instantaneous cloning features of Oracle Database 12c when it is deployed on Oracle ZFS Storage Appliance.

Table of Contents

About Oracle Multitenant

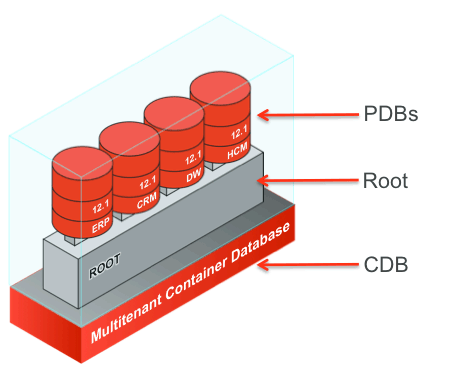

Oracle Multitenant—an Oracle Database, Enterprise Edition 12c option—introduces a new architecture that enables in-database virtualization for database consolidation, which delivers rapid provisioning and cloning as well as rapid database patching and upgrades. It enables administrators to manage many databases as one and allows resource management between databases. This new architecture allows a multitenant container database (CDB) to hold many pluggable databases (PDBs), as shown in Figure 1.

Figure 1

In addition to Oracle Multitenant, Oracle's SPARC servers with Oracle Solaris provide three main types of layered virtualization at the infrastructure level. These virtualization technologies can be used with Oracle Multitenant on Oracle's SPARC servers, making them the best platform for database and application consolidation and database clouds.

SPARC and Oracle Solaris Virtualization Technologies

Three types of layered virtualization, shown in Figure 2, are available on Oracle's SPARC servers with Oracle Solaris at no extra cost:

- Physical domains: Allow the partitioning of a server into independent isolated servers

- Oracle VM Server for SPARC: Allows multiple OS instances to coexist on the same physical infrastructure with dynamic reallocation of hardware resources

- Oracle Solaris Zones: Allows multiple application coexistence and resource management within a single OS instance

Figure 2

Each server virtualization technique provides different benefits. In general, Oracle Solaris Zones provide the highest flexibility and dynamic usage of resources, but they provide lower resource isolation and less-granular serviceability. Physical domains provide the greatest amount of resource isolation, but they provide less flexibility.

Unlike competing virtualization technologies, Oracle VM Server for SPARC and Oracle Solaris Zones scale extremely well with near-zero overhead.

One common benefit of using any of these three virtualization technologies is that they are recognized as Oracle-approved hard partitioning for database and application licensing purposes. They can provide huge licensing cost savings when only a subset of cores on a large system is needed for the database. A SPARC M5-32 server from Oracle, for example, has 32 six-core processors. If not all of these cores are needed for Oracle Multitenant running on the system, a subset can be created using any of these technologies, greatly reducing database-related licensing costs.

Another benefit is that these virtualization technologies enable the consolidation of different versions of Oracle Database on the same server. Different operating systems versions are also supported on the same system, enabling even older versions of Oracle Database to run on the same system as the latest Oracle Database version.

One more consideration is that while the set up and management of Oracle Multitenant will likely be done by a DBA, the management of Oracle Solaris Zones, Oracle VM Server for SPARC, or physical domains will be done by a system administrator.

Oracle Multitenant works with all these available server virtualization options and provides another layer of database virtualization.

Figure 3 shows an example of using all these virtualization technologies together on Oracle's SPARC M5-32 server, consolidating databases of different versions while having other zones available for application consolidation.

Figure 3

The most appropriate deployment model is likely to be a mixture of some of these technologies, based upon compliance, isolation, and flexibility needs. In most cases, of course, not all these technologies would be needed and a subset would suffice.

Many other articles and white papers have discussed these technologies in detail. For more information please check out the resources listed in the "See Also" section.

Oracle Multitenant Unique Features

The following are the most compelling reasons for adopting a multitenant architecture on SPARC platforms:

- High consolidation density: An Oracle Database instance consists of a group of Oracle Database background processes and shared memory. In a single multitenant container database, the memory and background process are shared by all the pluggable databases inside that container database. This enables you to operate many pluggable databases on a particular platform with less overhead. This is the same benefit that schema-based consolidation brings. However, schema-based consolidation has several disadvantages, such as weak namespace isolation and an extremely weak security model. The new architecture removes all the shortcomings of schema-based consolidation and provides each pluggable database with complete namespace isolation as well as the security model of a standalone database.

In all high-consolidation architectures, the consolidated workloads share the same physical infrastructure. Hence, it is important to consolidate on a server that has high reliability, availability, and serviceability (RAS) features. Oracle's SPARC T5 and SPARC M5 processor-based servers are based on Oracle's S3 core, which has built in RAS. Refer to "Maximizing Application Reliability and Availability with SPARC T5 Servers" for additional information on SPARC T5 RAS functionality. Refer to "Maximizing Application Reliability and Availability with the SPARC M5-32 Server" for additional information on SPARC M5-32 RAS features.

- Rapid provisioning and cloning using SQL: A pluggable database can easily be unplugged from one multitenant container database and plugged into another. Alternatively, you can clone a pluggable database from one multitenant container database to another. These operations, together with creating a pluggable database, are done using SQL commands. Since provisioning and cloning are done using SQL commands, they can be executed from any client machine and, hence, access privileges to the server machine are not needed. In addition, the SQL commands can be run in parallel thereby reducing execution times. They can also be programmed in PL/SQL to automate the process of provisioning and cloning.

Oracle Multitenant is integrated with Oracle ZFS Storage Appliance, which provides unlimited snapshots and cloning services. Any Oracle Database client can issue a SQL command to clone a PDB using the Oracle ZFS Storage Appliance snapshot feature, which is based on copy-on-write, by just adding a

snapshot copyclause to the SQL statement. The clone creation on Oracle ZFS Storage Appliance is instantaneous and no space is initially allocated to that clone. This drastically reduces clone creation time and also results in storage savings by enabling thin provisioning of the database. It also eliminates the heavy I/O overhead involved in traditional full-copy approaches to cloning. - New paradigms for rapid patching and upgrades: You can upgrade the CDB, thereby upgrading at once all the PDBs plugged into it. If you want to upgrade just a subset of the PDBs, you can just unplug that subset of PDBs from the existing CDB and plug them into a CDB with the upgraded version. Similarly, to upgrade the service level agreement (SLA) for a single application, you can unplug its PDB from a CDB managed at a low SLA and plug it into a CDB managed at a higher SLA.

- Ability to manage many databases as one: By consolidating existing databases as PDBs, administrators can manage many databases as one. For example, tasks such as backup and disaster recovery are performed at the level of the CDB. The multitenant architecture also provides the flexibility to take backups and perform point-in-time recovery at the PDB level, if required.

- Dynamic resource management between PDBs: In Oracle Database 12c, the Resource Manager feature is extended with specific functionality for controlling the competition for resources between the PDBs within a CDB. The resource manager CDB-level plan controls the following:

- Number of concurrent sessions

- CPU

- Ability to use Oracle Database parallel server processes

However, in Oracle Database 12.1, the Resource Manager feature does not control the use of system global area (SGA) memory. If a PDB needs complete, isolated access to the SGA, you could deploy it in a virtualized environment, such as Oracle Solaris Zones or Oracle VM Server for SPARC. You could also choose to run it in Oracle Real Application Clusters (Oracle RAC) by opening just one PDB on each node, thereby providing each PDB isolated access to the node's memory.

Efficient In-Database Virtualization

An experiment was conducted to determine whether the new multitenant architecture has any overhead. We created one big database as a lone CDB and ran the OLTP workload on it. We then sliced this single database into several small databases using the multitenant architecture and ran the OLTP workload on each one of them. We ensured that the total load on the server in all tests was identical and that no server- or database-related parameter was altered during the tests. We observed that the total throughput of the server remained the same as the number of PDBs increased from one to eight.

The tests were run on Oracle's SPARC T5-4 server with a 3.6-GHz clock rate and 1 TB of memory. The database was created in memory; however, it was not fully cached. The OLTP runs resulted in one read per transaction and around two writes per transaction. Linear scalability was observed as PDBs were scaled from 1 to 16, keeping the overall load constant.

Table 1 specifies the load on individual PDBs. The total load on the server was always constant, while the number of PDBs changed from 1 to 16.

Table 1| Number of PDBs | Warehouses per PDB | Clients per PDB |

|---|---|---|

| 1 | 2000 | 512 |

| 2 | 1000 | 256 |

| 4 | 500 | 128 |

| 8 | 250 | 64 |

| 16 | 125 | 32 |

A total of 512 clients were serviced across various numbers of PDBs, from a single PDB supporting all 512 clients to 16 PDBs supporting 32 clients each. As the number of sessions per PDB was reduced, the "buffer busy waits" were reduced, thereby increasing the throughput of the buffer cache.

The graph in Figure 4 shows the relative throughput as PDBs were scaled from 1 to 16.

Figure 4

Rapid Provisioning and Cloning

The new multitenant architecture enables faster cloning of a database. This section summarizes the architectural changes that enable rapid cloning of a PDB.

At a physical level, an Oracle database consists of a bunch of files: data files as well as bootstrap files, such as control files, spfile, and so on. The physical data files implement the logical entity tablespace, which in turn implements tables. Tables hold only three kinds of data:

- Metadata that describes the Oracle system

- Metadata that describes the user schema

- Actual user data

Prior to Oracle Database 12c, the metadata that describes the Oracle system and the metadata that describes the user schema were stored collectively in a set of tables that are referred to as the data dictionary. The data dictionary tables were stored in dedicated tablespaces, namely SYSTEM and SYSAUX.

Initially, when you create the database, the data dictionary tables have only Oracle system-related metadata. Later on, when a user creates data, the data dictionary tables contain user and system metadata. The user tablespace contains only user data.

The intermingling of Oracle-supplied metadata and user metadata is an issue, because if you need to copy user data from one location to another, you have to not only copy the user data, but you must also go through the data dictionary tables, identify user metadata, and copy user metadata. If the user data was stored completely in its own tablespace, then copying the user data would involve just copying the user files from one location to another.

Hence, in Oracle Database 12c the data dictionary tables are horizontally partitioned: one subset containing the Oracle system metadata is stored in the Root database and another subset containing user metadata is stored in the PDB. Now, transporting an application database from one database to another means Oracle processes just copy the files containing user data and user metadata. The user metadata is stored in the SYSTEM and SYSAUX tablespaces of the PDB, and the system metadata is stored in the SYSTEM and SYSAUX tablespaces of the CDB, as shown in Figure 5.

Figure 5

Thin Provisioning and Instantaneous Cloning: The Oracle ZFS Storage Appliance Advantage

The Oracle Multitenant architecture is integrated with Oracle ZFS Storage Appliance, which provides unlimited snapshots and cloning services.

A snapshot is a read-only point-in-time copy of a file system. It is instantaneously created and no space is allocated initially. Blocks are allocated as changes are made to the base file system. Any reads to the snapshot blocks are served by the base file system's block. When changes happen to the base file system, the older block is then referenced by the snapshot and the new, changed block is referenced by the file system.

A clone is an instantaneously created read-writable copy of a snapshot. One or more clones can be created from a single snapshot. These clones are presented to users as a normal file system. Similar to snapshots, when a clone is created, no space is allocated. The reads of the clone are served by the base file system's blocks. The changed blocks are allocated only when the blocks are changed in the clone. Since space is shared between snapshots and clones, and since a snapshot may have multiple clones, a snapshot cannot be destroyed without also destroying any active clones.

You can use the CREATE PLUGGABLE DATABASE statement to clone a PDB. This technique clones a source PDB and plugs the clone into the CDB. To use this technique, you must include a FROM clause that specifies the source PDB.

The source PDB is the existing PDB that is copied. The target PDB is the clone of the source PDB. The CREATE PLUGGABLE DATABASE statement copies the files associated with the source PDB to a new location and associates the files with the target PDB. The source PDB needs to be in read-only mode in order to be cloned.

If your source PDB is on Oracle ZFS Storage Appliance, you can create a snapshot clone of that PDB by merely adding a snapshot copy clause to the CREATE PLUGGABLE DATABASE command. When an Oracle Database client issues a CREATE PLUGGABLE DATABASE SQL statement with a snapshot copy clause, Oracle Database logs in to Oracle ZFS Storage Appliance and creates a snapshot of the file system on which the source PDB resides, and it promotes that snapshot as a clone on Oracle ZFS Storage Appliance. The clone creation on Oracle ZFS Storage Appliance is instantaneous and no space is initially allocated to that clone.

When you use the snapshot copy clause to create a clone of a source PDB, the following restrictions apply to the source PDB as long as any clones exist:

- It cannot be unplugged.

- It cannot be dropped.

Storage clones are named and tagged using the new PDB GUID. You can query the CLONETAG column of the DBA_PDB_HISTORY view to view clone tags for storage clones.

Table 2 shows the time saved when Oracle databases of different sizes are cloned using snapshot cloning.

Table 2| Database Size | Full-Copy Clone | Snapshot Cloning with Oracle ZFS Storage Appliance | % Time Saved |

|---|---|---|---|

| 24 GB | 9 min: 22 sec | 1 min: 52 sec | 80.00% |

| 216 GB | 1 hr: 21 min | 2 min: 11 sec | 97.00% |

| 1.3 TB | 9 hr: 7 min | 5 min: 55 sec | 99.00% |

The graph in Figure 6 represents the data above graphically.

Figure 6

Table 3 shows the space saved when Oracle databases of different sizes are cloned using snapshot cloning.

Table 3| Regular Clone Database Size | Snapshot Clone Database Size | % Space Saved |

|---|---|---|

| 24 GB | 140 KB | 99.99% |

| 216 GB | 142 KB | 99.99% |

| 1300 GB | 551 KB | 99.99% |

Configuring Snapshot Cloning with Oracle ZFS Storage Appliance

In order to set up snapshot cloning with Oracle ZFS Storage Appliance, you have to enable the SNMP service on Oracle ZFS Storage Appliance and store an Oracle ZFS Storage Appliance administrator username and password in the Oracle Database Transparent Data Encryption (TDE) keystore. This section explains the steps for creating a PDB (targetDB) by snapshot cloning the source PDB (srcDB).

- The storage administrator performs the following configuration on Oracle ZFS Storage Appliance:

- Set the following properties and enable the SNMP service:

SNMP community name: public Authorized network: 0.0.0.0/0 Appliance contact: <admin e-mail ID> Trap destination: 127.0.0.1 - (Optional) On the database server, issue the following command and ensure that the output recognizes Oracle ZFS Storage Appliance:

snmpget -v1 -c public <Appliance IP address> 1.3.6.1.4.1.42.2.225.1.4.2.0 SNMPv2-SMI::enterprises.42.2.225.1.4.2.0 = STRING: "Sun ZFS Storage 7420" - Identify a username and password on Oracle ZFS Storage Appliance that have the privileges required to create and destroy snapshots on the volumes that host the source PDB database files.

For this example, let's assume that the username is

storage-rootand the password isstorage-password.

- Set the following properties and enable the SNMP service:

- The DBA performs the following configuration on the database server:

- Store the Oracle ZFS Storage Appliance username and password in the Oracle Database TDE keystore.

- Issue the following SQL command to create a password-enabled software keystore if the Oracle Database installation does not have any keystore:

SQL> ADMINISTER KEY MANAGEMENT CREATE KEYSTORE '<keystore-location>' identified by <keystore-password>; - Issue the following SQL command to add storage user information to the keystore after opening the keystore:

SQL> ADMINISTER KEY MANAGEMENT SET KEYSTORE OPEN IDENTIFIED BY <keystore-password>; SQL> ADMINISTER KEY MANAGEMENT ADD SECRET '<storage-password>' for client '<Appliance IP address>' using tag '<storage-root>' identified by <keystore-password> with backup; - Ensure that Direct NFS is enabled on the server.

The alert file should have a message similar to this:

bash-4.1$ grep -i NFS alert* Oracle instance running with ODM: Oracle Direct NFS ODM Library Version 3.0 Direct NFS: channel id [0] path [10.129.195.171] to filer [10.129.195.171] via local [] is UP - Open the source PDB,

srcDB, in read-only mode by issuing the following SQL command:alter pluggable database srcDB open read only force; close; - Create the new PDB,

targetDB, by snapshot cloningsrcDB:create pluggable database targetDB from srcDB snapshot copy ; - Open the source PDB as well as the target PDB in read-write mode:

alter pluggable database srcPDB open; alter pluggable database targetDB open;

See Also

- "Hard Partitioning with Oracle VM Server for SPARC" (PDF)

- "Implementing Root Domains with Oracle VM Server for SPARC" (PDF)

- "Virtualization Options for Oracle Database Deployments on Sun SPARC T-Series Systems" (PDF)

- "How to Consolidate, Optimize, and Deploy Oracle Database Environments with Flexible Virtualized Services" (PDF)

- "Best Practices for Running Oracle Databases in Oracle Solaris Containers" (PDF)

- "Maximizing Application Reliability and Availability with the SPARC M5-32 Server" (PDF)

- "Maximizing Application Reliability and Availability with SPARC T5 Servers" (PDF)

- "Oracle Multitenant" (PDF)

- "Plug into the Cloud with Oracle Database 12c" (PDF)

About the Author

Ritu Kamboj is a principal software engineer at Oracle. She has over 15 years of experience in software development with expertise in database design, performance, and high availability. She has worked extensively on Oracle Database, Sybase, and MySQL and databases. Recently her primary focus has been to make Oracle Database run best on the Oracle Solaris platform.

Revision 1.1, 12/16/2013