Configuring Oracle Solaris Cluster Software With Oracle RAC/CRS and Sun ZFS Storage Appliance 7000 Systems

This document describes the installation steps for a 3-node Sun Cluster 3.2 01/09 or Oracle Solaris Cluster 3.3 (or later) configuration running with Oracle Real Application Clusters (RAC) / Cluster Ready Services (CRS) 11.1.0.7 and a Sun ZFS Storage 7120 System.

Note: A Sun ZFS Storage 7120 was used in this configuration, but the information in this document should be applicable to the entire Sun Storage 7000 Unified Storage series of products.

This document covers the following topics:

- Introduction

- Initial Preparation on the Sun ZFS Storage 7120 Using the CLI

- Creating NFS Filesystems Using the Sun ZFS Storage 7120 CLI

- Accessing the NFS Filesystems From the Cluster Nodes

- Accessing the iSCSI LUNs From the Cluster Nodes

- Adding a Quorum Device

- Configuring Oracle RAC / CRS in the Cluster

- Author-Recommended Resources

Introduction

This document makes the following assumptions:

- The Oracle Solaris Cluster software has already been installed on the nodes.

- A Sun ZFS Storage 7120 System is connected to the same subnet as the cluster nodes and is reachable (that is, "ping-able") from each of the nodes.

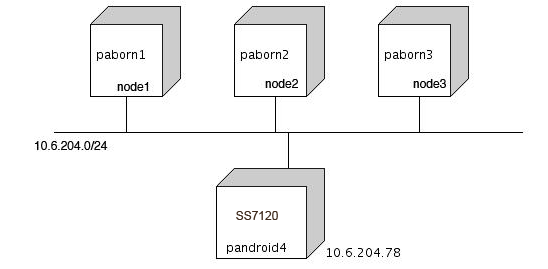

In the figure above, the cluster nodes are paborn1, paborn2, and paborn3, and they are running the Oracle Solaris 10 10/09 Operating System. The Sun ZFS Storage 7120 is named pandroid4, and it is running the 2010.Q3.3.1 software.

In this configuration, a Sun ZFS Storage 7120 is employed for two purposes:

- To provide NFS filesystems for the CRS Oracle Cluster Registry (OCR) and voting files, as well as for RAC database files

- To provide some iSCSI LUNs that will be used as quorum devices (or, more precisely, as software quorum devices)

Initial Preparation on the Sun ZFS Storage 7120 Using the CLI

In the Sun ZFS Storage 7120, filesystems and LUNs are referred to generically as shares. These shares are created within the context of a project. The same project can contain a mix of filesystems and LUNs. The creation of a new project is straightforward.

To perform the following steps, connect via ssh to the Sun ZFS Storage 7120 to access the command-line interface (CLI):

1. Create a project (crs-project is used for this example):

pandroid4:> shares

pandroid4:shares> project crs-project

pandroid4:shares crs-project (uncommitted)> commit

The newly created project can then be used to create the NFS filesystems and the iSCSI LUNs that will be accessed by the cluster nodes.

2. Create an iSCSI target:

Note: In this example, the target's IQN is generated automatically by the system.

pandroid4:> configuration san targets iscsi

pandroid4:configuration san targets iscsi> create

pandroid4:configuration san targets iscsi target (uncommitted)> set alias="Tgt4RAC"

alias = Tgt4RAC (uncommitted)

pandroid4:configuration san targets iscsi target (uncommitted)> set auth=none

auth = none (uncommitted)

pandroid4:configuration san targets iscsi target (uncommitted)> set interfaces=nge0

interfaces = nge0 (uncommitted)

pandroid4:configuration san targets iscsi target (uncommitted)> commit

pandroid4:configuration san targets iscsi> list

...

TARGET ALIAS

target-000 Tgt4RAC

|

+-> IQN

iqn.1986-03.com.sun:02:57a9b940-6df5-c127-8175-fd49d7c9fa37

...

The created target, or more specifically its IQN, will be referenced later when accessing the iSCSI LUNs from the cluster nodes.

3. Create an iSCSI target group (Grp4RAC in this example), and include the previously created target in that group:

pandroid4:configuration san targets iscsi groups> create

pandroid4:configuration san targets iscsi group (uncommitted)> set name="Grp4RAC"

name = Grp4RAC (uncommitted)

pandroid4:configuration san targets iscsi group (uncommitted)> set targets=iqn.1986-03.com.sun:02:57a9b940-6df5-c127-8175-fd49d7c9fa37

targets = iqn.1986-03.com.sun:02:57a9b940-6df5-c127-8175-fd49d7c9fa37 (uncommitted)

pandroid4:configuration san targets iscsi group (uncommitted)> commit

pandroid4:configuration san targets iscsi groups> list

...

group-002 Grp4RAC

|

+-> TARGETS

iqn.1986-03.com.sun:02:57a9b940-6df5-c127-8175-fd49d7c9fa37

...

This target group will be referenced later when the iSCSI LUNs are created (see Step 6 below).

4. Create the iSCSI initiators:

a. On the host side, determine the IQN of each initiator (that is, each node):

root@paborn1 # iscsiadm list initiator-node

Initiator node name: iqn.1986-03.com.sun:01:00144f971d46.paborn1 <===

Initiator node alias: -

Login Parameters (Default/Configured):

Header Digest: NONE/-

Data Digest: NONE/-

Authentication Type: NONE

RADIUS Server: NONE

RADIUS access: unknown

Tunable Parameters (Default/Configured):

Session Login Response Time: 60/-

Maximum Connection Retry Time: 180/-

Login Retry Time Interval: 60/-

Configured Sessions: 1

b. Back on the Sun ZFS Storage 7120, use the IQN obtained in Step a to create an initiator:

pandroid4:configuration san initiators iscsi> create

pandroid4:configuration san initiators iscsi initiator (uncommitted)> set alias="paborn1"

alias = paborn1 (uncommitted)

pandroid4:configuration san initiators iscsi initiator (uncommitted)> set initiator=iqn.1986-03.com.sun:01:00144f971d46.paborn1

initiator = iqn.1986-03.com.sun:01:00144f971d46.paborn1 (uncommitted)

pandroid4:configuration san initiators iscsi initiator (uncommitted)> commit

pandroid4:configuration san initiators iscsi> list

NAME ALIAS

initiator-000 paborn1

|

+-> INITIATOR

iqn.1986-03.com.sun:01:00144f971d46.paborn1

...

Repeat Steps a and b for each node.
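Because Steps a and b are repeated for every node, it can help to pull the IQN out of the iscsiadm output rather than copy it by hand. The following is a minimal sketch and not part of the original procedure. It assumes the "Initiator node name:" label shown in Step a, and initiator_iqn is a hypothetical helper name.

```shell
#!/bin/sh
# Print the initiator IQN found in `iscsiadm list initiator-node` output
# read from standard input.
# Assumption: the IQN is the fourth whitespace-separated field on the line
# that starts with "Initiator node name:", as in the output shown in Step a.
# initiator_iqn is a hypothetical helper name, not a Solaris command.
initiator_iqn() {
    awk '$1 == "Initiator" && $2 == "node" && $3 == "name:" { print $4; exit }'
}

# On a cluster node this would be used as:
#   iscsiadm list initiator-node | initiator_iqn
```

The printed value is what Step b expects for the initiator property on the appliance.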

5. Create an initiator group (abornGrp in this example), and include the previously created initiators, referenced by their IQNs, in that group:

pandroid4:configuration san initiators iscsi groups> create

pandroid4:configuration san initiators iscsi group (uncommitted)> set name=abornGrp

name = abornGrp (uncommitted)

pandroid4:configuration san initiators iscsi group (uncommitted)> set initiators=iqn.1986-03.com.sun:01:00144f971d46.paborn1,iqn.1986-03.com.sun:01:00144fac24ac.paborn2,iqn.1986-03.com.sun:01:00144f97ed34.paborn3

initiators = iqn.1986-03.com.sun:01:00144f971d46.paborn1,iqn.1986-03.com.sun:01:00144fac24ac.paborn2,iqn.1986-03.com.sun:01:00144f97ed34.paborn3 (uncommitted)

pandroid4:configuration san initiators iscsi group (uncommitted)> commit

pandroid4:configuration san initiators iscsi groups> list

GROUP NAME

group-001 abornGrp

|

+-> INITIATORS

iqn.1986-03.com.sun:01:00144f971d46.paborn1

iqn.1986-03.com.sun:01:00144fac24ac.paborn2

iqn.1986-03.com.sun:01:00144f97ed34.paborn3

...

This initiator group will be referenced later when the iSCSI LUNs are created (see Step 6 below). For a given LUN, it defines which initiators (that is, which nodes) are allowed to access it.
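The initiators property in Step 5 takes all node IQNs as a single comma-separated value. As a rough sketch, that value could be assembled on a host before pasting it into the appliance CLI. The helper name join_iqns is hypothetical.

```shell
#!/bin/sh
# Join the IQNs given as arguments into one comma-separated string,
# with no whitespace between entries.
# join_iqns is a hypothetical helper name used only for illustration.
join_iqns() {
    # "$*" joins the arguments with the first character of IFS; the
    # subshell keeps the IFS change from leaking into the caller.
    ( IFS=,; printf '%s\n' "$*" )
}

# Example with the three initiators used in this document:
#   join_iqns iqn.1986-03.com.sun:01:00144f971d46.paborn1 \
#             iqn.1986-03.com.sun:01:00144fac24ac.paborn2 \
#             iqn.1986-03.com.sun:01:00144f97ed34.paborn3
```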

6. Create the iSCSI LUNs:

a. Select the project that was created in Step 1.

pandroid4:shares> select crs-project

b. Create LUNs (crslunA in this example) in that project, and associate them with the target group and the initiator group previously created:

pandroid4:shares crs-project> lun crslunA

pandroid4:shares crs-project/crslunA (uncommitted)> set volsize=20G

volsize = 20G (uncommitted)

pandroid4:shares crs-project/crslunA (uncommitted)> set targetgroup=Grp4RAC

targetgroup = Grp4RAC (uncommitted)

pandroid4:shares crs-project/crslunA (uncommitted)> set initiatorgroup=abornGrp

initiatorgroup = abornGrp (uncommitted)

pandroid4:shares crs-project/crslunA (uncommitted)> commit

pandroid4:shares crs-project> list

LUNs:

NAME SIZE GUID

crslunA 20G 600144F0FB360BAD00004C73C7E90001

...

Repeat Step b for each LUN, as needed.

At this point, the iSCSI LUNs you created can be acquired as iSCSI targets by the cluster nodes (the iSCSI initiators).

Creating NFS Filesystems Using the Sun ZFS Storage 7120 CLI

1. Select the project where the filesystem will be created (crs-project in this example):

pandroid4:shares> select crs-project

2. Create a filesystem (nfs-for-crs in this example):

pandroid4:shares crs-project> filesystem nfs-for-crs

pandroid4:shares crs-project/nfs-for-crs (uncommitted)> set mountpoint=/export/crs

mountpoint = /export/crs (uncommitted)

pandroid4:shares crs-project/nfs-for-crs (uncommitted)> set root_user=root

root_user = root (uncommitted)

pandroid4:shares crs-project/nfs-for-crs (uncommitted)> set sharenfs="anon=0"

sharenfs = anon=0 (uncommitted)

pandroid4:shares crs-project/nfs-for-crs (uncommitted)> set root_permissions=744

root_permissions = 744 (uncommitted)

pandroid4:shares crs-project/nfs-for-crs (uncommitted)> commit

pandroid4:shares crs-project> list

Filesystems:

NAME SIZE MOUNTPOINT

nfs-for-crs 79.6K /export/crs

...

Repeat these steps for each filesystem, as needed.

Accessing the NFS Filesystems From the Cluster Nodes

At this point, the filesystems you just created are usable and can be accessed and mounted from any node.

As a quick verification, you can try the following command on a node using the IP address of the Sun ZFS Storage 7120 (10.6.204.78 for this example):

# cd /net/10.6.204.78/export/crs

# mount -v|grep crs

10.6.204.78:/export/crs on /net/10.6.204.78/export/crs type nfs

remote/read/write/nosetuid/nodevices/intr/retrans=10/retry=3/xattr/dev=5580006

on Mon Oct 5 18:30:32 2009

Accessing the iSCSI LUNs From the Cluster Nodes

To make the created LUNs visible and usable from the nodes, execute the following additional steps on each node:

1. Enable the static discovery method for iSCSI:

# iscsiadm modify discovery -s enable

# iscsiadm list discovery

Discovery:

Static: enabled <--- good!

Send Targets: disabled

iSNS: disabled

2. Add a static configuration entry, using the IQN of the target created in Step 2 of "Initial Preparation on the Sun ZFS Storage 7120 Using the CLI" and the IP address of the Sun ZFS Storage 7120:

# iscsiadm add static-config iqn.1986-03.com.sun:02:57a9b940-6df5-c127-8175-fd49d7c9fa37,10.6.204.78
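The static-config argument has the form target-IQN,target-address, and a mistyped address may only show up later, when no LUNs appear. The following sketch checks the two parts before the argument is passed to iscsiadm. It is an illustration only: valid_ipv4 and static_config_arg are hypothetical helper names, and only a plain IPv4 address without a port number is handled.

```shell
#!/bin/sh
# Return success if $1 is a dotted-quad IPv4 address with each octet
# in the range 0-255. valid_ipv4 is a hypothetical helper name.
valid_ipv4() {
    printf '%s\n' "$1" | awk -F. '
        NF != 4 { exit 1 }
        { for (i = 1; i <= 4; i++) if ($i !~ /^[0-9]+$/ || $i > 255) exit 1 }
    '
}

# Print "IQN,address" if $1 starts with "iqn." and $2 is a valid IPv4
# address; otherwise print a message on stderr and return failure.
# static_config_arg is a hypothetical helper name.
static_config_arg() {
    case $1 in
        iqn.*) ;;
        *) echo "not an IQN: $1" >&2; return 1 ;;
    esac
    valid_ipv4 "$2" || { echo "not an IPv4 address: $2" >&2; return 1; }
    printf '%s,%s\n' "$1" "$2"
}

# On a cluster node this could be used as:
#   arg=$(static_config_arg "$target_iqn" 10.6.204.78) && iscsiadm add static-config "$arg"
```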

3. To make sure the /dev and /devices trees are updated with the newly added LUNs, run the following command:

# devfsadm -i iscsi

4. Now the LUNs should be visible. You can use the iscsiadm list target command to see the new LUNs and their associated device name (cXtYdN):

# iscsiadm list target -S

Target: iqn.1986-03.com.sun:02:57a9b940-6df5-c127-8175-fd49d7c9fa37

Alias: pool-0/local/crs-project/crslunA

TPGT: 1

ISID: 4000002a0000

Connections: 1

LUN: 0

Vendor: SUN

Product: SOLARIS

OS Device Name: /dev/rdsk/c6t600144F04AC9EB700000144FA6E77400d0s2

...

5. If necessary, use the format command to change the label, the partitioning, or both, as needed.

6. Once all the iSCSI LUNs you created in the Sun ZFS Storage 7120 have been added on each node, update the Sun Cluster DID namespace with the newly added devices. From one node, run this command:

# cldevice populate

Configuring DID devices

did instance 24 created.

did subpath paborn1:/dev/rdsk/c6t600144F04AC9EB700000144FA6E77400d0

created for instance 24.

Configuring the /dev/global directory (global devices)

obtaining access to all attached disks

#

7. Check that all nodes see the newly added LUNs:

# cldevice status

...

/dev/did/rdsk/d24 paborn1 Ok <--

paborn2 Ok <-- good!

paborn3 Ok <--

...
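With many DID devices, the status check in Step 7 can be tedious to read by eye. The following sketch lists the nodes that do not report Ok for a given DID device. It assumes the layout shown in Step 7 (a line starting with the device path followed by a node and its status, then further nodes on two-column lines) without the arrow annotations. The helper name did_not_ok is hypothetical.

```shell
#!/bin/sh
# Read `cldevice status` output on standard input and print every node
# whose status for DID device $1 is anything other than "Ok".
# Assumption: the output layout matches the excerpt shown in Step 7.
# did_not_ok is a hypothetical helper name.
did_not_ok() {
    awk -v dev="$1" '
        NF == 0 { next }                                   # skip blank lines
        $1 ~ /^\/dev\/did\// { cur = $1; node = $2; status = $3 }
        $1 !~ /^\/dev\/did\// && NF == 2 { node = $1; status = $2 }
        $1 !~ /^\/dev\/did\// && NF != 2 { cur = ""; next } # header or other text
        cur == dev && status != "Ok" { print node }
    '
}

# On a cluster node this could be used as:
#   cldevice status | did_not_ok /dev/did/rdsk/d24
```

No output means every node listed for that device reported Ok.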

Note: The sequence of Solaris commands listed previously is not specific to the Sun ZFS Storage 7120. The very same steps are applicable to any iSCSI target array.

At this stage, the LUNs created and configured in the Sun ZFS Storage 7120 are ready to be used. They can be used like any other shared storage device configured in the cluster.

Adding a Quorum Device

You can pick one of the iSCSI LUNs to be used as a quorum device.

For example:

# clquorum add d24

# clquorum show d24

=== Quorum Devices ===

Quorum Device Name: d24

Enabled: yes

Votes: 2

Global Name: /dev/did/rdsk/d24s2

Type: shared_disk

Access Mode: scsi3

Hosts (enabled): paborn2, paborn3, paborn1
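The Votes value of 2 in this output follows from the usual Oracle Solaris Cluster rule that a quorum device connected to N nodes contributes N - 1 votes, while each node contributes one vote. The arithmetic for this three-node example can be sketched as follows. The function names are hypothetical.

```shell
#!/bin/sh
# Votes contributed by a quorum device connected to $1 nodes (N - 1).
# quorum_device_votes is a hypothetical helper name.
quorum_device_votes() {
    echo $(( $1 - 1 ))
}

# Votes needed for a majority when $1 nodes share one such quorum device.
# quorum_majority is a hypothetical helper name.
quorum_majority() {
    total=$(( $1 + $1 - 1 ))     # one vote per node plus the device votes
    echo $(( total / 2 + 1 ))    # strictly more than half of all votes
}

# For the three-node cluster in this document:
#   quorum_device_votes 3   prints 2
#   quorum_majority 3       prints 3
```

With three nodes, the device holds 2 of the 5 possible votes, so a single surviving node that owns the quorum device reaches the 3 votes required.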

Configuring Oracle RAC / CRS in the Cluster

1. Edit the /etc/vfstab file on each node.

For this example, two NFS filesystems have been created in the Sun ZFS Storage 7120 for Oracle RAC/CRS: /export/crs and /export/oradb. (See "Creating NFS Filesystems Using the Sun ZFS Storage 7120 CLI" for information on how to create NFS filesystems.)

a. Add the following vfstab entries. Note the mount options, and note that the mount-at-boot field is set to yes so that the NFS filesystems created in the Sun ZFS Storage 7120 are mounted automatically at boot time.

# vi /etc/vfstab

...

10.6.204.78:/export/crs - /data/crs nfs 2 yes rw,bg,forcedirectio,wsize=32768,rsize=32768,hard,noac,nointr,proto=tcp,vers=3

10.6.204.78:/export/oradb - /data/db nfs 2 yes rw,bg,forcedirectio,wsize=32768,rsize=32768,hard,noac,nointr,proto=tcp,vers=3

b. For now, manually mount these filesystems:

# mount /data/crs

# mount /data/db
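Each vfstab entry must sit on a single line with seven whitespace-separated fields: device to mount, device to fsck, mount point, filesystem type, fsck pass, mount at boot, and mount options. Long NFS option strings are easy to break across lines when pasting, so a quick check such as the following sketch may be useful before rebooting. The helper name vfstab_bad_nfs_lines is hypothetical.

```shell
#!/bin/sh
# Read a vfstab-style file on standard input and print, with its line
# number, every NFS entry that does not have exactly seven fields.
# vfstab_bad_nfs_lines is a hypothetical helper name.
vfstab_bad_nfs_lines() {
    awk '
        /^[ \t]*#/ || NF == 0 { next }           # skip comments and blank lines
        $4 == "nfs" && NF != 7 { print NR ": " $0 }
    '
}

# On a cluster node this could be used as:
#   vfstab_bad_nfs_lines < /etc/vfstab
```

An entry whose options were pushed onto the next line is reported because its first line then has only six fields.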

2. Change the ownership of the filesystems to oracle/dba:

# chown oracle:dba /data/crs

# chown oracle:dba /data/db

3. Configure the RAC Framework Resource Group.

From one node, run the following commands:

Note: Adjust the node list, Desired_primaries, and Maximum_primaries to match your configuration.

# clrg create -S rac-framework-rg

# clrs create -t SUNW.rac_framework:4 -g rac-framework-rg rac-framework-rs

# clrs create -t SUNW.rac_udlm -g rac-framework-rg -p resource_dependencies=rac-framework-rs rac-udlm-rs

# clrg online -emM rac-framework-rg

4. Install Oracle CRS and the Oracle binaries, and then create the database.

Note: This document doesn't describe how to perform this in detail; it provides only an outline of what needs to be done for this task.

a. Install the CRS OCR and the VOTE devices on the NFS filesystems that were previously created on the Sun ZFS Storage 7120 and mounted on the nodes (mounted on /data/crs).

Note: A minimum of five devices of 256 MB each must be created, and their owner must be changed to oracle:dba.

One way to accomplish this is to use the mkfile command:

# mkfile 256m ocrdev1

# chown oracle:dba ocrdev1

# ls -l ocrdev1

-rw------T 1 oracle dba 268435456 Nov 2 16:56 ocrdev1

Alternatively, you can use iSCSI LUNs as CRS configuration devices, and you can create slices on the iSCSI LUN using the format command, as you do on a physical disk. Please refer to Sun Cluster release documentation for Oracle RAC configuration for details.

b. Install Oracle CRS and the rdbms binaries on local disks. For this example, we'll use CRS_HOME=/install/oracle/crs and ORACLE_HOME=/install/oracle/10g.

c. After the CRS binary installation is complete, add a crs_framework resource to rac-framework-rg:

# clrs create -t SUNW.crs_framework -g rac-framework-rg \

-p resource_dependencies=rac-framework-rs crs-framework-rs

# clrs enable crs-framework-rs

d. Create Oracle listeners.

e. Create the Oracle RAC database. (We'll refer to this as testdb in this example.)

5. Create and configure a scalable_rac_server_proxy resource group (rac-proxy-rg in this example).

Note: Adjust the variables to match your configuration.

# clrt register SUNW.scalable_rac_server_proxy

# clrg create -S -p rg_affinities=++rac-framework-rg rac-proxy-rg

# clrs create -t SUNW.scalable_rac_server_proxy -g rac-proxy-rg \

-p Resource_dependencies=rac-framework-rs \

-p CRS_HOME=/install/oracle/crs -p DB_NAME=testdb \

-p ORACLE_HOME=/install/oracle/10g \

-p ORACLE_SID{paborn1}=testdb1 \

-p ORACLE_SID{paborn2}=testdb2 \

-p ORACLE_SID{paborn3}=testdb3 \

-p resource_dependencies_offline_restart=crs-framework-rs rac-proxy-rs

# clrg online -emM rac-proxy-rg
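The per-node ORACLE_SID{node} properties in Step 5 follow a regular pattern: the database name followed by an instance number. For clusters with more nodes, the options could be generated rather than typed. This is a sketch only. It assumes instances are numbered in the order the nodes are listed, and sid_props is a hypothetical helper name.

```shell
#!/bin/sh
# Print one "-p ORACLE_SID{node}=<db><n>" option per node, numbering
# the instances from 1 in argument order.
# Usage: sid_props DB_NAME node1 [node2 ...]
# sid_props is a hypothetical helper name.
sid_props() {
    db=$1
    shift
    n=1
    for node in "$@"; do
        printf '%s ORACLE_SID{%s}=%s%d\n' "-p" "$node" "$db" "$n"
        n=$((n + 1))
    done
}

# For this document's configuration:
#   sid_props testdb paborn1 paborn2 paborn3
```

Verify the generated instance names against the SIDs actually used when the database was created before passing them to the resource creation command.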

Author-Recommended Resources

- Oracle Solaris Cluster on Oracle Technology Network

- Oracle Solaris Cluster Administration

- Oracle Solaris Cluster Data Service for Oracle Real Application Clusters Guide

- Sun ZFS Storage 7000 System Administration Guide

- Note: Online help for the Sun Storage 7000 series products is available by clicking the Help button in the GUI.

Revision 1, 06/10/2011