How Oracle Solaris Makes Oracle Database Fast

Published June 2013

Optimizations in Oracle Solaris and in the components of the Oracle stack can have a large impact on application performance as well as system security and reliability.

Does the choice of operating system really matter anymore? After all, virtualization lets sysadmins choose the OS that best matches the workload they plan to put on each virtual machine. And Red Hat Linux on Intel is cheap and ubiquitous enough to work for almost anything. Right?

We don't think so.

About Operating Systems and Performance

Operating systems were once the glue between the hardware and the applications, parceling out the underlying hardware resources to the application. Over time they evolved to support the entire Infrastructure-as-a-Service (IaaS) stack, including server and network virtualization, resource management, advanced file systems, and storage management. While operating system capabilities have broadened in many respects, one aspect has remained the same: the OS can still have a dramatic impact on application performance. Whether deployed on bare metal or in a virtual environment, the OS is a critical factor in boosting or impeding application performance and data center resource efficiency.

For applications that rely on Oracle Database, a high-performance operating system translates into faster transactions, better scalability to support more users, and the ability to support larger capacity databases. When deployed in virtualized environments, multiple Oracle Database servers can be consolidated on the same physical server, allowing IT departments to improve system utilization, simplify administration, and lower TCO for database deployments.

Since Oracle acquired Sun Microsystems in 2010, it has continued to invest in optimizations for the Oracle Solaris operating system. Each optimization squeezed more performance out of Oracle Database, and they added up.

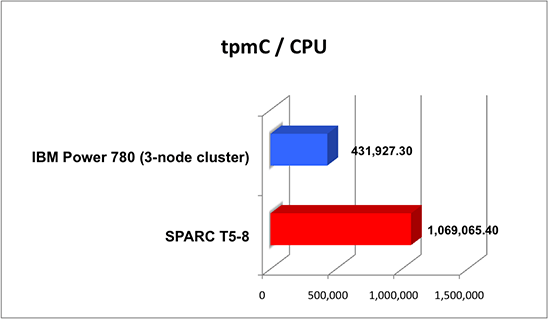

In a recent TPC-C benchmark, the new SPARC T5-8 server achieved a single-system world record of 8,552,523 tpmC (with a price/performance of $0.55/tpmC) on Oracle Database 11g Release 2. Compared with the IBM Power 780 three-node cluster result, the SPARC T5-8 server is 2.4 times faster per chip, as shown in Figure 1.

Figure 1

Note: TPC Benchmark C, tpmC, and TPC-C are trademarks of the Transaction Processing Performance Council (TPC). Oracle's SPARC T5-8 server (8/128/1024) with Oracle Database 11g Release 2 Enterprise Edition with Oracle Partitioning, 8,552,523 tpmC, US$0.55/tpmC. IBM Power 780 three-node cluster result (24/192/768) with DB2 ESE 9.7, 10,366,254 tpmC, US$1.38/tpmC. Source: results as of 3/26/2013.
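The per-chip comparison can be reproduced from the figures in the note. The following is a minimal sketch, assuming the parenthesized triples mean chips/cores/threads (the note does not spell this out), so that the SPARC result uses 8 chips and the IBM cluster result uses 24. The variable and function names are illustrative only.

```python
# Figures copied from the note above; the chip counts assume that
# "(8/128/1024)" and "(24/192/768)" mean chips/cores/threads.
SPARC_T5_8 = {"tpmc": 8_552_523, "chips": 8}
IBM_POWER_780_CLUSTER = {"tpmc": 10_366_254, "chips": 24}


def tpmc_per_chip(result):
    """Return throughput divided by the number of processor chips."""
    return result["tpmc"] / result["chips"]


sparc_per_chip = tpmc_per_chip(SPARC_T5_8)
ibm_per_chip = tpmc_per_chip(IBM_POWER_780_CLUSTER)
ratio = sparc_per_chip / ibm_per_chip

print(f"SPARC T5-8:    {sparc_per_chip:,.0f} tpmC per chip")
print(f"IBM Power 780: {ibm_per_chip:,.0f} tpmC per chip")
print(f"Ratio:         {ratio:.2f}x")
```

Under that reading of the configuration triples, the ratio comes out slightly above 2.4, consistent with the per-chip claim.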

The rest of this article describes the optimizations in Oracle Solaris that contributed to the terrific performance of Oracle Database.

Optimization #1: Memory

Memory is a key resource that operating systems control on behalf of application workloads. Oracle Solaris features several memory optimizations that help to accelerate Oracle Database queries. Here's a synopsis.

Non-Uniform Memory Access (NUMA) Optimization and Memory Placement

Both Oracle Solaris 10 and Oracle Solaris 11 run faster on NUMA architecture machines such as Oracle's SPARC T4, T5, and M5 servers. In these multiprocessor systems, each processor manages its own memory, and memory coherence is maintained between processors. Oracle Solaris takes advantage of this architecture with the following technologies:

- Memory Placement Optimization (MPO)—Oracle Solaris 10 uses MPO to improve memory placement decisions across physical server memory. In this way, Oracle Solaris 10 places memory as close as possible to the processors that access it while still maintaining balance within the system. As a result, Oracle Database applications are often able to run considerably faster since memory latencies are lower.

- Hierarchical Lgroup Support (HLS)—In Oracle Solaris 11, HLS takes MPO memory placement a step further. HLS helps Oracle Solaris optimize performance for systems with advanced memory latency hierarchies. It allows the operating system to distinguish between degrees of memory remoteness, allocating resources with the lowest possible latency. If local resources are not available by default, HLS helps Oracle Solaris allocate the nearest remote resources to minimize latency.
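The fallback behavior described for HLS can be pictured as a search outward through a latency hierarchy. The sketch below is a toy model, not the Oracle Solaris implementation: the locality group names, latencies, and free-page counts are all hypothetical.

```python
# Toy model only: names, latencies, and free-page counts are hypothetical,
# and this is not how the Oracle Solaris kernel is implemented.
from dataclasses import dataclass


@dataclass
class Lgroup:
    name: str
    latency_ns: int   # assumed latency as seen from the requesting CPU
    free_pages: int


def place_allocation(lgroups, pages_needed):
    """Pick the lowest-latency locality group that can satisfy the request."""
    for group in sorted(lgroups, key=lambda g: g.latency_ns):
        if group.free_pages >= pages_needed:
            group.free_pages -= pages_needed
            return group.name
    return None  # nothing in the hierarchy has enough free memory


hierarchy = [
    Lgroup("local", latency_ns=100, free_pages=0),
    Lgroup("same-board", latency_ns=180, free_pages=512),
    Lgroup("remote-board", latency_ns=300, free_pages=4096),
]

print(place_allocation(hierarchy, pages_needed=256))   # nearest group with room
print(place_allocation(hierarchy, pages_needed=1024))  # must go farther out
```

With local memory exhausted, the first request lands in the nearest remote group that has room, and the larger request has to go one level farther out.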

Large Memory Pages

Because of scalability enhancements to the virtual memory system in Oracle Solaris 11, Oracle Database can allocate 2-GB pages to its System Global Area (SGA), the group of shared memory areas that is dedicated to Oracle Database. Large page sizes reduce costly Translation Lookaside Buffer (TLB) misses, which force the CPU to perform additional address-translation work. Enhancements to memory prediction in Oracle Solaris also let you monitor memory page use and adjust the page size to match application and database needs.
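A rough calculation shows why page size matters: the fewer pages needed to cover the SGA, the fewer address translations compete for TLB entries. In this sketch the 64-GB SGA is a hypothetical size, and the 8-KB and 4-MB page sizes are assumptions chosen for comparison with the 2-GB pages mentioned above.

```python
# Illustrative arithmetic: the 64-GB SGA is a hypothetical size, and the
# 8-KB and 4-MB page sizes are assumptions chosen for comparison.
KB, MB, GB = 1024, 1024**2, 1024**3


def pages_needed(region_bytes, page_bytes):
    """Number of pages (and so address translations) covering a region."""
    return -(-region_bytes // page_bytes)  # ceiling division


sga_bytes = 64 * GB
for label, page_bytes in [("8 KB", 8 * KB), ("4 MB", 4 * MB), ("2 GB", 2 * GB)]:
    print(f"{label:>5} pages: {pages_needed(sga_bytes, page_bytes):>9,} mappings")
```

For this example size, the count drops from millions of mappings with 8-KB pages to a few dozen with 2-GB pages.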

Support for ISM (Intimate Shared Memory) and Dynamic ISM (DISM)

Oracle Database uses shared memory to store frequently used data, such as the database buffer cache and shared pool. For this reason, Oracle Solaris includes support for specially tuned variants of shared memory known as ISM and DISM. (Similar to ISM, DISM is a shared memory region that can grow dynamically.) ISM and DISM shared memory segments take advantage of large pages locked in by the Oracle Solaris kernel that can't be paged out, making them always available and reducing context switches and overhead.

Multithreaded Shared Memory Operations

Oracle Solaris 11 features a new multithreaded kernel process called vmtasks that accelerates the creation, locking, and destruction of pages in shared memory. Since the vmtasks process is multithreaded, it parallelizes the creation and destruction of shared memory segments, which helps to speed up database server startups and shutdowns. Decreasing server startup and shutdown times yields improved database availability and service levels.

Optimization #2: Critical Threads

Oracle Solaris 10 and 11 let an administrator or a developer identify a "critical thread" for the process scheduler by raising its priority to 60 or above. An administrator can do this through the command-line interface. An application can do it by invoking the corresponding system call. When a thread is deemed critical, it runs by itself on a single core, garnering all the resources of that core for itself. To prevent resource starvation of other threads, this feature is disabled when there are more runnable threads than there are available CPUs.
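The two conditions in that description can be written down as a simple rule. This is a toy model based only on the paragraph above; it does not represent the real Oracle Solaris scheduler, and the function name is hypothetical.

```python
# Toy decision rule based only on the description above; it does not
# model the real Oracle Solaris scheduler.
CRITICAL_PRIORITY = 60  # threshold named in the text


def runs_on_dedicated_core(priority, runnable_threads, available_cpus):
    """Return True if a thread would get a core to itself under this model."""
    is_critical = priority >= CRITICAL_PRIORITY
    system_oversubscribed = runnable_threads > available_cpus
    return is_critical and not system_oversubscribed


print(runs_on_dedicated_core(60, runnable_threads=40, available_cpus=128))
print(runs_on_dedicated_core(60, runnable_threads=200, available_cpus=128))
print(runs_on_dedicated_core(59, runnable_threads=40, available_cpus=128))
```

Only the first case qualifies: the second system has more runnable threads than CPUs, and the third thread is below the priority threshold.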

Critical threads have important performance and scalability implications for Oracle Database workloads. The log writer process (LGWR), which logs transactions for recovery purposes, can often be a limitation for transaction throughput in Oracle Database 11g. Common practice today is to statically bind LGWR to a processor core. With critical threads, however, LGWR can be assigned to a core much more dynamically, improving resource utilization.

In a similar fashion, the Lock Management System (LMS) for Oracle Real Application Clusters (Oracle RAC) can sometimes inhibit scalability. The LMS lock manager is a user-level distributed lock that mediates requests for database blocks between processes on cluster nodes. Using a critical thread for the LMS lock manager can help Oracle RAC to scale more effectively.

Optimization #3: Kernel Acceleration for Oracle RAC

Another enhancement in Oracle Solaris helps to accelerate performance of the LMS lock manager for Oracle RAC deployments. Fulfilling a request for database blocks across nodes requires traversing and copying data across the user/kernel boundary on the requesting and serving nodes, even for requests for blocks with uncontended locks. Oracle Solaris includes a kernel accelerator to filter database block requests destined for LMS processes, directly granting requests for blocks with uncontended locks, eliminating user-kernel context switches, associated data copying, and LMS application-level processing for those requests.
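The split between requests the accelerator answers itself and requests it passes on can be sketched as a simple filter. This is a toy model: the set of contended blocks, the queue, and the function name are hypothetical and only mirror the fast-path/slow-path division described above.

```python
# Toy model: the lock table, request shape, and function names are
# hypothetical and only mirror the fast-path/slow-path split described above.
def filter_block_request(block_id, contended_blocks, lms_queue):
    """Grant uncontended requests directly; hand the rest to the LMS process."""
    if block_id not in contended_blocks:
        return "granted-in-kernel"      # fast path: no user-level processing
    lms_queue.append(block_id)          # slow path: LMS resolves the contention
    return "forwarded-to-lms"


contended = {42}
lms_queue = []
results = [filter_block_request(b, contended, lms_queue) for b in (7, 42, 99)]
print(results)
print(lms_queue)
```

In this model only the request for the contended block reaches the user-level queue; the other two are answered without crossing into user space.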

Optimization #4: Built-In Virtualization and Resource Management

Oracle Solaris includes the lightweight virtualization technology of Oracle Solaris Zones, allowing multiple Oracle Database servers to run safely within an OS instance on a single physical machine. Oracle Database instances are completely isolated within zones, preventing processes in one zone from affecting processes running in another. Available in the Oracle Solaris 10 and 11 releases without any additional licensing fees, Oracle Solaris Zones permit effective resource controls. They allow sysadmins to allocate compute, memory, and I/O resources and to create virtual network interfaces (VNICs) that share a single physical network interface with defined bandwidth allocations. In addition, zones can be used in conjunction with Oracle RAC or Oracle Solaris Cluster to build highly available database services.

For Oracle Database deployments that use Oracle's Sun ZFS Storage Appliances, Oracle engineers have developed a procedure that automates database cloning, allowing administrators to duplicate databases and the supporting Oracle Solaris Zones up to 50 times faster than manual methods. The entire duplication can be executed using a single script that creates, configures, and provisions independent Oracle Solaris Zones and copies the database. The script uses Oracle Recovery Manager (Oracle RMAN) backup technology, the CloneDB feature of Direct NFS (dNFS) Client introduced in Oracle Database 11g, Oracle Solaris Zones, and Sun ZFS Storage Appliance snapshots and cloning. The paper "How to Accelerate Test and Development Through Rapid Cloning of Production Databases and Operating Environments" describes the process in greater detail.

Optimization #5: Enterprise Reliability and Management Efficiency

The Fault Management Architecture of Oracle Solaris monitors hardware components and reports failures. The built-in Service Management Facility (SMF) controls and restarts failed services automatically. Both provide enterprise-class reliability.

Oracle Enterprise Manager Ops Center 12c, part of the Oracle Enterprise Manager portfolio, manages Oracle Solaris operating system instances, virtual environments created with Oracle Solaris Zones, OS administrative rights, patching, and updates. This management interface is available with every Oracle system or operating system support contract. You can expand it to manage application and cloud infrastructure by purchasing additional modules.

Optimizations Across the Oracle Stack

Beyond the Oracle Solaris optimizations described above, Oracle has made considerable engineering investments to add value when deploying multiple Oracle products together. These cross-product integrations and optimizations deliver advantages in areas such as performance, security, system reliability, and simplicity in data center operations.

While Oracle Database releases are available for a number of commercially available hardware platforms, operating systems, and virtualization technologies, there are distinct advantages to running Oracle Database services on an end-to-end Oracle infrastructure. Oracle's engineered systems—Oracle Database Appliance, Oracle Exadata Database Machine, and Oracle SuperCluster, for example—exemplify how Oracle's investment in optimizations between stack layers has paid off, improving performance, simplifying data center operations, and enhancing availability. Some of these are described below.

Built-In Acceleration of Oracle Transparent Data Encryption in SPARC T-Series and M5 Servers

Oracle's SPARC T-Series and M5 servers, including the recently announced SPARC T5 servers, include an on-core cryptography engine that can accelerate encryption and decryption operations, including secure database queries from Oracle Database. When using the Transparent Data Encryption feature of Oracle Database to encrypt sensitive data, SPARC T-Series and M5 processors can perform encryption and decryption without any additional hardware. In Transparent Data Encryption testing, the earlier-generation SPARC T4 processor proved to be 43% faster on secure database queries than Intel Xeon X5600 processors using AES-NI technology. (For further information on Transparent Data Encryption with Oracle Database, see "High Performance Security for Oracle Database and Fusion Middleware Applications Using SPARC T4.") Because on-chip encryption can occur at high speeds, organizations that must protect sensitive data can take advantage of built-in encryption and use it more pervasively to reduce risk.

Built-In Oracle VM Server for SPARC Virtualization Featuring Live Migration

In addition to Oracle Solaris Zones, Oracle VM Server for SPARC is a virtualization technology built into all Oracle chip multithreading (CMT) servers, including SPARC T4, T5, and M5 processor-based servers. Oracle VM Server for SPARC leverages an integrated hypervisor to subdivide system resources down to the processor thread, cryptographic processor, memory, and PCI bus, creating virtual partitions called logical domains (LDoms). Each logical domain runs in one or more dedicated CPU threads and hosts a separate instance of the Oracle Solaris operating system. Administrators can assign and dynamically reallocate system resources to domains that host critical database workloads, allowing them to align resources based on business priorities. PCIe direct I/O functionality assigns either individual PCIe cards or entire PCIe buses to a domain, enabling native I/O throughput even in a virtualized environment.

Of note, Oracle VM Server for SPARC supports "secure live migration"—that is, the migration of active domains to other systems while maintaining the availability of production database and application services to users. By taking advantage of on-chip cryptographic accelerators in SPARC T-Series and M5 servers, domain migrations can be fully encrypted and decrypted at wire speeds. This capability makes it easy to migrate domains quickly and safely for load balancing, disaster recovery, or when performing upgrades, even across insecure networks such as the internet.

Optimizations for Oracle Storage

Exclusive to Oracle's Sun ZFS Storage Appliances and Pillar Axiom storage systems, Hybrid Columnar Compression (HCC) is a technique for organizing data within a database block. As the name implies, it uses a hybrid approach, a combination of both row and columnar methods, for storing data. The approach achieves the compression benefits of columnar storage but avoids the performance shortfalls associated with a pure columnar format.
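The general idea of a hybrid row/column layout can be illustrated with a small sketch: rows are grouped into units, and within each unit the values are stored column by column before compression. This is a toy illustration, not Oracle's on-disk format; the unit size, the sample rows, and the use of `zlib` and JSON are all assumptions made for the example.

```python
# Toy illustration of a hybrid row/column layout. It is not Oracle's
# on-disk format; the unit size, sample rows, and use of zlib are assumptions.
import json
import zlib


def compress_row_major(rows):
    """Serialize rows one after another, then compress."""
    return zlib.compress(json.dumps(rows).encode())


def compress_hybrid(rows, rows_per_unit=1000):
    """Group rows into units, store each unit column by column, then compress."""
    units = []
    for start in range(0, len(rows), rows_per_unit):
        unit = rows[start:start + rows_per_unit]
        columns = [list(col) for col in zip(*unit)]  # pivot rows into columns
        units.append(zlib.compress(json.dumps(columns).encode()))
    return units


def decompress_hybrid(units):
    """Rebuild the original rows from the compressed units."""
    rows = []
    for blob in units:
        columns = json.loads(zlib.decompress(blob))
        rows.extend([list(r) for r in zip(*columns)])
    return rows


rows = [[i, "ACTIVE" if i % 10 else "CLOSED", "US-EAST"] for i in range(5000)]
units = compress_hybrid(rows)
print("row-major bytes:", len(compress_row_major(rows)))
print("hybrid bytes:   ", sum(len(u) for u in units))
```

All rows of a unit stay together (the row aspect), while values inside the unit are laid out by column, which places similar values next to each other for the compressor. Actual savings depend heavily on the data.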

Compressing data in this way before sending it to storage delivers two positive outcomes: less data capacity is required and higher performance is achieved (since there is less data to move). Enterprises with existing Oracle databases and in-database archives for OLTP, data warehousing, or mixed workloads have seen as much as 10x to 50x reductions in data volumes by using Hybrid Columnar Compression on the Pillar Axiom 600 or Sun ZFS Storage Appliance products.

Oracle's Pillar Axiom storage system adjusts Quality of Service (QoS) for SAN data storage according to defined priorities, making it easy to optimize I/O performance for business-critical databases. QoS policies can be modified dynamically to reflect changes in business conditions and data value. The storage system's QoS manager interprets these policy settings and makes decisions about where to physically place data, based on the service level requested as well as the actual devices in the system. Migrating database storage across different devices is a simple one-click process of changing the associated storage class. The system then migrates the data while continuing to provide database access.

Integrated Infrastructure Management

Oracle Enterprise Manager Ops Center 12c is capable of managing all Oracle hardware, firmware, virtual systems, operating system instances, and updates. By adding other modules in the Oracle Enterprise Manager portfolio, sysadmins can also control Oracle Database instances and Oracle Applications across the enterprise. That's much easier and cheaper than cobbling together a bunch of different tools from different vendors.

One Riot, One Ranger

Oracle Database on Oracle Solaris runs faster because Oracle has engineered so many cross-product optimizations. But deploying Oracle Database on a complete Oracle stack provides another form of performance: one vendor to call. Instead of spending hours trying to figure out which vendor's product is the cause of an issue, you can simply dial Oracle support and let them start solving the problem right away.

If you are considering an "Oracle-on-Oracle" deployment, you have a number of options. You can build the solution stack out yourself. Or you can take advantage of an engineered system such as Oracle Database Appliance, Oracle Exadata Database Machine, or Oracle SuperCluster.

Oracle Exadata is specifically designed to accelerate Oracle Database 11g services for data warehousing and OLTP applications. Oracle SuperCluster is optimized for consolidating mission-critical applications and cloud services.

For implementations that need greater flexibility than these engineered systems provide, Oracle also defines multiple configurations in its Oracle Optimized Solution for Oracle Database. This solution provides a set of validated configurations and proven best practices that drive compelling performance and scalability for Oracle Database.

See Also

Here are additional resources:

- Oracle Solaris 11

- "Oracle Solaris Optimizations for the Oracle Stack" (PDF)

- Oracle Enterprise Manager 12c

- Oracle Enterprise Manager Ops Center 12c

- Oracle Exadata Database Machine

- Video: Why Is the Operating System Still Relevant?

- Video: An Engineer's Perspective: Why the OS Is Still Relevant

- Video: Chris Baker Explains Why the OS Is Still Relevant

About the Author

Ginny Henningsen has worked for the last 15 years as a freelance writer developing technical collateral and documentation for high-tech companies. Prior to that, Ginny worked for Sun Microsystems, Inc. as a Systems Engineer in King of Prussia, PA and Milwaukee, WI. Ginny has a BA from Carnegie-Mellon University and an MSCS from Villanova University.

Revision 1.0, 06/18/2013